History of Computers - Computer History Timeline

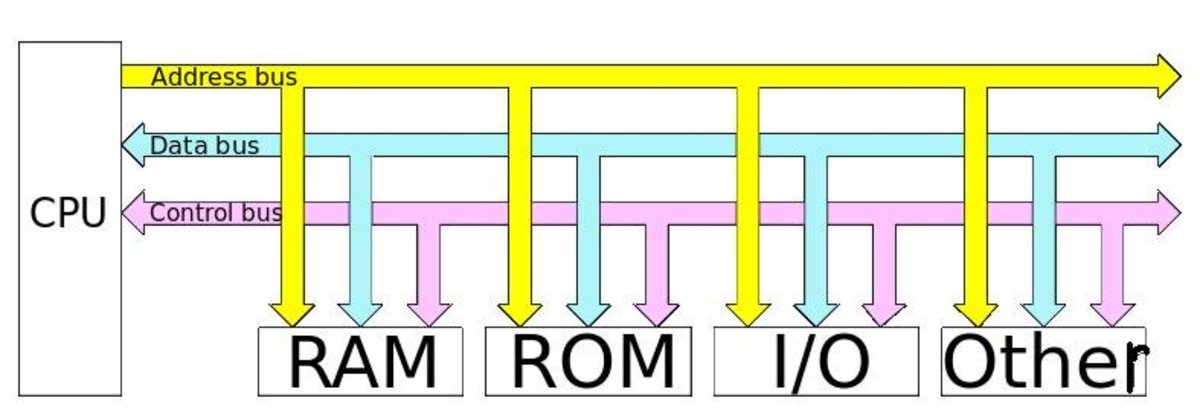

A computer is an electronic machine that accepts large amounts of data, organises it, and arrives at solutions according to the program fed into it. It also performs various other functions, such as word processing.

Computers promote efficiency by saving time and labour, by giving accurate solutions, and by quickly handling large and complicated data sets that would be difficult to handle manually. They are used in defence, railways, airways, industry, research, administration, education, and many other fields.

In the 19th century, Charles Babbage, an English scientist and mathematician, attempted to mechanise a sequence of calculations in a machine, so that it would perform all the necessary operations in a predetermined order without the intervention of an operator. The machine Babbage designed used cards with holes punched in them to introduce both instructions and data into the machine. It was to carry out the instructions dictated by the card holes automatically until the entire sequence of instructions was completed. Babbage succeeded in establishing the basic principles on which modern computers are constructed.

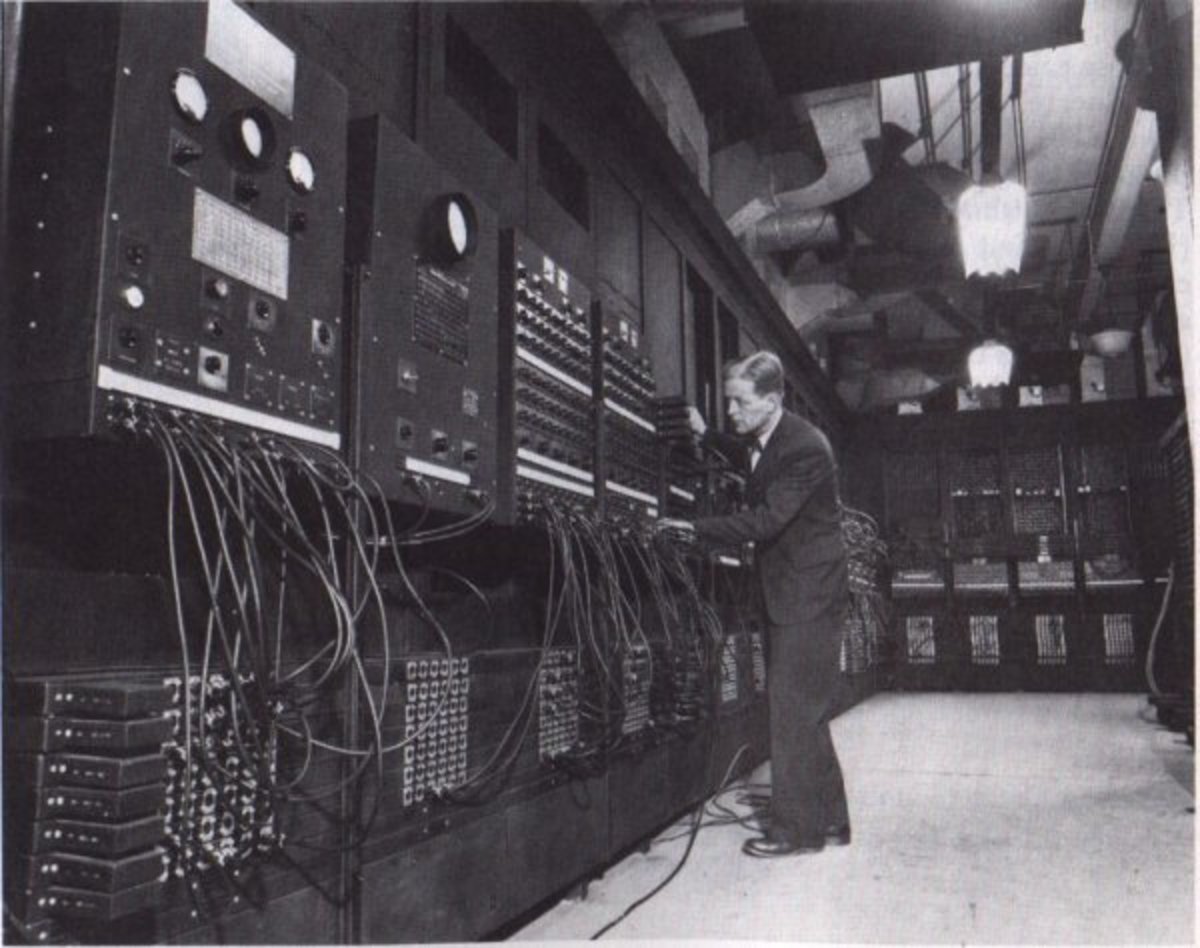

First Generation: In 1937, Howard Aiken at Harvard proposed to IBM that a machine could be built, using some parts and techniques from punched-card machinery, that would automatically sequence operations and so carry out calculations. The completed machine, known as the Harvard Mark I, used a combination of electro-mechanical devices. It was in operation for some time, generating many tables of mathematical functions (particularly Bessel functions), and was used for trajectory calculations in World War II.

Second Generation: The replacement of large, expensive, hot vacuum tubes with small, inexpensive, reliable transistors that dissipated comparatively little heat led to the second generation of computers. Vacuum tube computers were known as first generation computers, whereas computers using transistors were called second generation computers.

Third Generation: By 1965, third generation computers had been introduced. Computers of this period began making heavy use of integrated circuits, in which many transistors and other components were fabricated and packed together in a single small package.

Fourth Generation: The manufacture of integrated circuits became so advanced that hundreds of thousands of active components could be incorporated in a very small fraction of a cubic inch. These techniques were called large scale integration (LSI) and very large scale integration (VLSI). They led to computers with smaller size, lower cost, larger memory, and ultra-fast processing. Present-day computers are fourth generation computers.

Fifth Generation: Computers built on developments in computer technology for "Artificial Intelligence" are termed fifth generation computers.