Tackling a Problem

McCall and Kaplan, in Whatever It Takes, say that defining or recognizing problems is an act of creation. We've done that, and we've made our decision to act. Well, not exactly …

What we need to do is:

1) determine what success looks like (and, by the same token, what failure looks like),

2) give some thought to implementation strategies,

3) thoughtfully consider ethical implications, and

4) act.

Finally, we need to recognize that this is a cyclic process. One action may result in a host of sub-actions, all requiring more or less the same process. So step 4 above (Act) may be preceded by several layers of further decision-making and consideration.

Implementing Your Decision

How hard can it be, right? You've sketched out all the tasks. You've even got them mapped out in Microsoft Project. Now we can sit back and ... what? ... let the solution implement itself? Of course not. A colleague of mine succumbed to that sort of thinking. His problem wasn't that he thought the solution would implement itself. His problem was that he thought his peers and their bosses were as motivated to see the implementation through as he and his boss were. He also assumed that those who actually were on the same page as he was interpreted the problem and the implementation the same way he did. Hah!

Your actions, your implementation, and the consequences look like different things to different people. The manager's role is one of influence. As the British author E.M. Forster wrote in Howards End, "Don't ask for power. Seek influence. It lasts longer."

What Does Success (and Failure) Look Like?

A good manager will determine what success looks like before implementing a decision. Otherwise, how will anyone know whether the implementation was completed correctly? I've been in many meetings where users complained that "the system is too slow." The network folks respond that they <fill in the blank>, and that the network should be okay now. But without some idea of what "slow" is, and without some idea of what the users will be satisfied with, the network folks are on a fool's mission.

(Of course, the other side of the coin was explained by a salesman: "We won't tell <the customer> what benchmark results we're aiming for. We'll run the benchmarks, and then determine what results correspond to a 100% score. Naturally, whatever results our system gets will be the 100% score. It's like shooting an arrow at a blank wall. No matter where it hits, paint a bulls-eye, centered around your arrow!")

Those of us with a more ethical approach need to find a resource, preferably more than one, to help quantify our work, such as time, finances, and/or people.

Start With The Problem Definition

Think about defining criteria in several areas. First, look at the problem definition. A good problem definition will have the seeds of some of the criteria. "Sales declined 20% this year; they need to grow at least 10% every year." Such a statement is a good starting point for deciding what success looks like. It might not be as simple as, "A successful implementation will result in sales growing 10%," but that's a reasonable place to start. So think about what you're trying to do, what problem you're trying to solve.

Don't think, though, that you must come up with numbers to describe your outcomes (such as the 10% in the previous paragraph). Do think about being able to measure or clearly describe the outcomes. For example, a good criterion must be more precise than, "We want the outcome to be fair to everyone." That's an okay start, but a better criterion might be, "The overtime that results should be equal (plus or minus 5%) for part-time and full-time workers."
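To see why the second criterion is easier to work with, here is a minimal sketch of checking it. The function name, the sample hours, and the reading of "plus or minus 5%" as a relative difference measured against the larger value are all my assumptions; your own criterion might define the tolerance differently.

```python
def within_tolerance(a: float, b: float, tolerance: float = 0.05) -> bool:
    """Return True if a and b differ by no more than `tolerance`,
    measured relative to the larger of the two values (an assumption)."""
    larger = max(abs(a), abs(b))
    if larger == 0:
        return True  # both values are zero, so they are equal
    return abs(a - b) / larger <= tolerance


# Hypothetical average overtime hours per worker for each group.
part_time_overtime = 9.7
full_time_overtime = 10.0

criterion_met = within_tolerance(part_time_overtime, full_time_overtime)
print("Overtime criterion met:", criterion_met)
```

The point is not the arithmetic. The point is that "fair to everyone" can't be checked at all, while the overtime criterion can be checked by anyone who has the numbers.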

Resource Use and Stakeholders

Another good area to consider for criteria is resource use: time, treasure, and talent. Are you trying to make a process more efficient? Do you want a division to spend less money, or make more money? Do you want to use fewer people in a process? Do you want people to feel more comfortable in their jobs? Is there an inequity that needs to be addressed? Measure the change in usage of these resources. Consider all the stakeholder interests. You've identified the stakeholders during the problem definition stage; how will they be affected by 'success'?

It's very important to look at the flip side - what does failure look like, and how will it affect each of the players? You might find that success won't affect some players very much at all, but failure for these same players results in some major problems. It's essential to imagine what might happen as a result of your decision and implementation, both good and bad. And just in case … have a back-out plan if the problem solution fails.

Timeframe and Other Consequences

It is very important to consider time when you decide what success is. For example, what if your solution improves employee morale...but only for a week? When you define success and failure, timeframe is crucial. Too short a timeframe and your solution isn't really a solution; at best, it's a stop-gap measure, a short-term band-aid. Too long a timeframe might mean that you really can't measure the outcome. Or it might make your solution irrelevant if technology (or other considerations) passes you by.

It's also very important to realize that you don't work in a vacuum. What if sales go up 30% as a result of your "solution"? Yippee! Until, that is, product returns skyrocket, wiping out the sales gains. Or until the folks in finance complain that they can't get financing for all the new business. Or until ... (and on, and on, and on). Your implementation is not like a baseball bat hitting a baseball and sending it over the fence. Rather, it's more like a cue ball being driven into all the other balls during the break.

Risk Assessment

Part of all this analysis goes into risk assessment: a balancing of success and failure, and a calculation of the probability of each. It would be nice if our spreadsheets could come up with a single "go/no-go" number that quantified all the risk perfectly. Alas, that's rarely possible. You'll see spreadsheets that purport to give you a single number that is "the" answer; be very wary of them. Certain areas lend themselves to such analysis, but managerial decision-making isn't one of them. Certainly we should try to quantify risks so that we can get a better feel for what we're facing, but don't ever think that the resulting numbers are any better than the assumptions that went into them. Remember GIGO: garbage in, garbage out.
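A small sketch shows how sensitive a single "go/no-go" number is to the assumptions behind it. The expected-value formula is a common textbook simplification, not a method this article prescribes, and the probabilities and dollar amounts are invented for illustration.

```python
def expected_value(p_success: float, gain: float, loss: float) -> float:
    """Probability-weighted payoff: the gain if we succeed, minus the loss if we fail."""
    return p_success * gain - (1 - p_success) * loss


# Hypothetical figures: the only thing that changes is our guess at the odds.
for p in (0.4, 0.5, 0.6):
    value = expected_value(p, gain=100_000, loss=80_000)
    verdict = "go" if value > 0 else "no-go"
    print(f"p={p:.1f}  expected value={value:>10,.0f}  -> {verdict}")
```

Nudging the assumed probability of success from 0.4 to 0.5 flips the verdict from "no-go" to "go". The spreadsheet didn't get any smarter; the guess changed.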

How Do We Measure Failure?

The answer to this question is intertwined with the answer to the question, "What does success look like?" For example, if you decide that success means that sales have gone up 20%, then measuring success might be as simple as seeing how much sales went up; if more than 20% - success, if less – hmmm ... failure? That depends on what failure is. Certainly if sales drop, it is a failure. If there is no change it is a failure because you just spent a bunch of resources (people, budget, time) to no avail.
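Written out as a sketch, the gap in that definition becomes obvious. The function name and the "undecided" label are mine, and counting growth of exactly 20% as a success is an assumption.

```python
def classify_outcome(sales_before: float, sales_after: float,
                     target_growth: float = 0.20) -> str:
    """Classify a result against the success and failure definitions."""
    growth = (sales_after - sales_before) / sales_before
    if growth >= target_growth:   # assumption: hitting the target exactly counts
        return "success"
    if growth <= 0:               # a drop, or no change after spending resources
        return "failure"
    return "undecided"            # some growth, but short of the target


# Hypothetical sales figures, in thousands.
print(classify_outcome(1000, 1250))  # grew 25%
print(classify_outcome(1000, 1000))  # no change
print(classify_outcome(1000, 1100))  # grew 10%
```

That last branch is the one you have to settle before you implement anything: is 10% growth a partial success, or a failure?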

Start With The Problem Definition

McCall and Kaplan talk about a few ways of discovering discrepancies, and therein lie the roots of problem definition and outcome measurement. They mention comparing the existing state of affairs to a standard in order to begin to find discrepancies. That standard could be the past, plans and forecasts, or the performance of other, comparable units in your organization or in outside organizations. If you know there's a discrepancy, then you can tell if your solution implementation changes the discrepancy.

Many times these discrepancies - and, consequently, your problem definition and outcome measurement - are ambiguous. The information comes from various sources that are biased, shaded, and so on. If you've handled this ambiguity in your problem definition, you should revisit the reasons for the ambiguity when you decide how you will measure your outcomes.

What, Exactly, Do I Measure?

If your outcome is a 20% sales increase, then that's pretty easy to measure. But suppose the problem you think you have is a morale problem. How are you going to know if your morale problem is solved? Is that something you can measure? While you cannot take the "morale pulse" of your organization directly, you can probably measure other things; those other things are called proxies. That is, they are stand-ins (albeit imperfect ones) for morale. For example, the number of sick days taken by employees might tend to go up in a demoralized organization. Or employee turnover might be higher than in other, similar, non-demoralized (happy?) organizations. If you measure employee turnover, you're not measuring morale directly. Instead, employee turnover is a proxy for morale.
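Here is a minimal sketch of using turnover as a proxy. It uses the common definition of turnover rate (separations divided by average headcount); the headcounts and the comparison organization are hypothetical.

```python
def turnover_rate(separations: int, average_headcount: float) -> float:
    """Fraction of the workforce that left during the period."""
    if average_headcount <= 0:
        raise ValueError("average_headcount must be positive")
    return separations / average_headcount


# Hypothetical one-year figures for our unit and a comparable one.
our_rate = turnover_rate(separations=18, average_headcount=120)
peer_rate = turnover_rate(separations=9, average_headcount=100)

gap = our_rate - peer_rate
print(f"Our turnover: {our_rate:.0%}, comparable unit: {peer_rate:.0%}, gap: {gap:+.0%}")
```

A gap like this suggests a morale problem; it doesn't prove one. It is a stand-in, so treat it like one.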

If you do measure a secondary effect (as employee turnover might be when considering employee morale), make sure you analyze your results carefully. Suppose when you measure the turnover, you realize that another company that competes with you for the same labor pool has bumped hourly pay by 15%. Some of the measured employee turnover may be due to the pay raise, as opposed to lack of good morale. You won't always be able to account for these distracting factors, but you should try to do so. In any case, you should always document how you went about measuring outcomes.

Let's go back to the 'easy' outcome measurement: a sales increase of 20%. That's easy to measure, right? Maybe not. (Realize that a manager's job is one of continual complications. If it weren't, then the job would be much easier than it is.) For example, what if the mix of products changes during the time period you're measuring? Won't that have an effect on sales, in addition to the effect your solution implementation has? What if lead times get longer? Or shorter? What if quality suffers? What if your competitor stops competing in the particular product area you're measuring?

Like the secondary (and tertiary) effects, these are things you must somehow account for. There is no Excel spreadsheet formula that says,

=AccountForComplications(A12:T36)

So take your measurements with a grain of salt. And analyze the environment to see if you can find those complicating effects. Don't wait for them to present themselves to you; actively go about finding them. Ask yourself, "What might affect these measurements I'm taking?"

Timeframe and Secondary Effects

Remember that we talked earlier about timeframe and secondary effects when considering criteria for success. Those same considerations apply here, when determining how to measure the outcome. For instance, you might be hoping to increase the number of transactions conducted over a specified amount of time. Or you may be replacing work that is done manually, freeing those employees to take on other projects or earn promotions.

The Mechanics of Outcome Reporting

The best advice I can give here is to report what you know (and what you wanted to know but still don't) and what you can determine from analysis, and make sure to distinguish the two. That is, the reader should know which parts you've measured, and which parts are a result of your analysis.

Do you need to use a spreadsheet to report results? No. In fact, if you aren't measuring quantities (that is, if your measurements aren't numbers), then using a spreadsheet might be misleading. If you do use a spreadsheet, consider a simple chart or graph to present your results. Don't go overboard; this is one case where less is more. For an excellent education on presenting quantitative information, try to obtain a copy of the following book: Tufte, E. (1983). The Visual Display of Quantitative Information. Cheshire, Connecticut: Graphics Press.

Words are also a wonderful way to report your subjective and objective analyses. Sometimes a simple description makes sense, as long as it's complete. Realize that sometimes subjective results can be quantified. Suppose you want to improve the way your customers view you, your product, and your customer support. "How our customers view customer support" is a pretty nebulous concept. What if, however, you asked customers after each support call what they thought of the call? You might be able to put a number to their satisfaction (and dissatisfaction!). Doing so isn't perfect. Look at polls (which is essentially what I'm proposing in this example). Many times election polls are nowhere near accurate. The reasons are complicated. However, what we're looking for isn't completely accurate results. We're looking for a feel for what's happening. Developing proper polls is an art in itself. Sometimes it's just as easy to gauge customer satisfaction by counting a drop in product returns or a growth in returning customers.
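As a rough sketch of putting a number to satisfaction, suppose each customer rates a support call from 1 (poor) to 5 (excellent). The scale, the cutoff for "satisfied," and the sample ratings are all assumptions made for illustration.

```python
from statistics import mean


def summarize_ratings(ratings: list[int], satisfied_at: int = 4) -> dict[str, float]:
    """Summarize post-call ratings on a hypothetical 1-to-5 scale."""
    if not ratings:
        raise ValueError("no ratings to summarize")
    satisfied = sum(1 for r in ratings if r >= satisfied_at)
    return {
        "responses": len(ratings),
        "average": mean(ratings),
        "share_satisfied": satisfied / len(ratings),
    }


# Hypothetical ratings collected after eight support calls.
summary = summarize_ratings([5, 4, 2, 5, 3, 4, 1, 4])
print(summary)
```

Eight responses won't tell you much, and neither will an average taken alone. Watch how the numbers move over time; that's the "feel for what's happening" we're after.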

Sets and Venn Diagrams

Using Venn diagrams to separate information into sets is often a good tool for visualizing a problem. Sets are easy to define, yet can get complicated in a hurry. A set is simply a collection of objects. The objects may be numbers, letters, documents, database records, or elephants. Database programs use sets extensively. Think of a database record that describes you. The record contains your name, date of birth, and phone number. In fact, let's pretend that the database contains one such record for each member in a class.

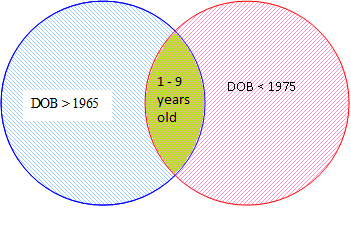

We could define two sets of records. The first set would contain those records for class members born before 1975. The second set would contain those records for class members born after 1965. Some of us would be in the first set, others would be in the second set, and some of us would be in both sets.

Sets, combined with Boolean Logic, are extremely powerful. One could ask the database program to create a new set that contained those records that matched both statements:

Year Of Birth < 1975 AND Year Of Birth > 1965

Structured Query Language (SQL) is based on statements that have a passing resemblance to the statement above. SQL, and database products based on SQL, are the basis for much of the power we get from computers today.
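To show the resemblance, here is a minimal sketch that runs an actual SQL query using Python's built-in sqlite3 module. The table name, column names, and class members are hypothetical.

```python
import sqlite3

# An in-memory database with one record per (hypothetical) class member.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE class_members (name TEXT, year_of_birth INTEGER)")
conn.executemany(
    "INSERT INTO class_members VALUES (?, ?)",
    [("Ana", 1962), ("Ben", 1968), ("Cara", 1972), ("Dev", 1980)],
)

# The WHERE clause is the same Boolean statement, written in SQL.
rows = conn.execute(
    "SELECT name FROM class_members "
    "WHERE year_of_birth < 1975 AND year_of_birth > 1965 "
    "ORDER BY name"
).fetchall()
conn.close()

matching_names = [name for (name,) in rows]
print(matching_names)  # ['Ben', 'Cara']
```

Only the members who satisfy both conditions come back from the query.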

John Venn, a 19th-century English mathematician, created a way of diagramming Boole's logic symbols. His method was later improved by Charles Dodgson (yes, another English mathematician; but he is better known as Lewis Carroll), and we now know these drawings as Venn diagrams.

Venn diagrams are excellent for showing graphically the logical relationships between sets of objects. In particular, Venn diagrams help us understand the intersection and union of sets. Remember the database query we ran? Here it is again:

Year Of Birth < 1975 AND Year Of Birth > 1965 (C)

Remember that the first set contains records of class members born before 1975 (A); the second set contains records of class members born after 1965 (B). The statement above describes the intersection of the two sets. That is, it describes those records that are in both sets.
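The same idea can be sketched with Python's built-in set type, which has operators for intersection (&) and union (|). The class members and their birth years are made up for the example.

```python
# Hypothetical class members and their years of birth.
birth_years = {"Ana": 1962, "Ben": 1968, "Cara": 1972, "Dev": 1980}

# Set A: born before 1975. Set B: born after 1965.
born_before_1975 = {name for name, year in birth_years.items() if year < 1975}
born_after_1965 = {name for name, year in birth_years.items() if year > 1965}

# Intersection (C): members who are in both sets.
in_both = born_before_1975 & born_after_1965

# Union: members who are in at least one of the sets.
in_either = born_before_1975 | born_after_1965

print(sorted(in_both))    # ['Ben', 'Cara']
print(sorted(in_either))  # ['Ana', 'Ben', 'Cara', 'Dev']
```

Here the union happens to be the whole class, because everyone was born either before 1975 or after 1965.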

In the Venn diagram shown earlier, the circle on the left represents the first set of records. The circle on the right represents the second set of records. The area where the two circles overlap represents those records that are in both sets (that is, the records for those born between the years 1965 and 1975). The “C” area is the intersection of the two sets.

Venn diagrams are indispensable for showing us the logical relationships among sets. They are often used in Object Oriented Programming to discern modules for a CASE library, or in architectural design (of network access, a database, an application to be developed). Even in personal problem-solving, a Venn diagram can help a person portray the known parts of a problem and how they interact with each other.

Facing a problem, be it in business, technology, mechanics, or a personal situation, does not appear as daunting or even hopeless if you can properly identify the problem, weed out the inconsequential data, make room for incidental effects and have a way to measure the outcome. You then will see how to ACT to resolve the problem.