Artificial Intelligence: Robo Scientist

Robots Can "Reason"

Artificial Intelligence is the New Frontier

Artificial Intelligence is the new frontier. It is no longer a question of whether a robot scientist can perform as a human scientist does. As reported in “Scientific American,” a robot can now even be controlled by human thought alone. Scientists at Aberystwyth University in Wales and the U.K.'s University of Cambridge designed a robot scientist named Adam to take a more human approach to scientific inquiry, in a process that requires no human input.

Using Artificial Intelligence

Using artificial reasoning and robotic hardware, Adam, a robot scientist, discovered three genes that encode specific yeast enzymes, a determination human scientists had not been able to make.

Automated Discovery Process

It is now possible to automate scientific discovery itself. This means more than simply automating experiments: it is possible to build a machine, a robot scientist, that can discover new scientific knowledge.

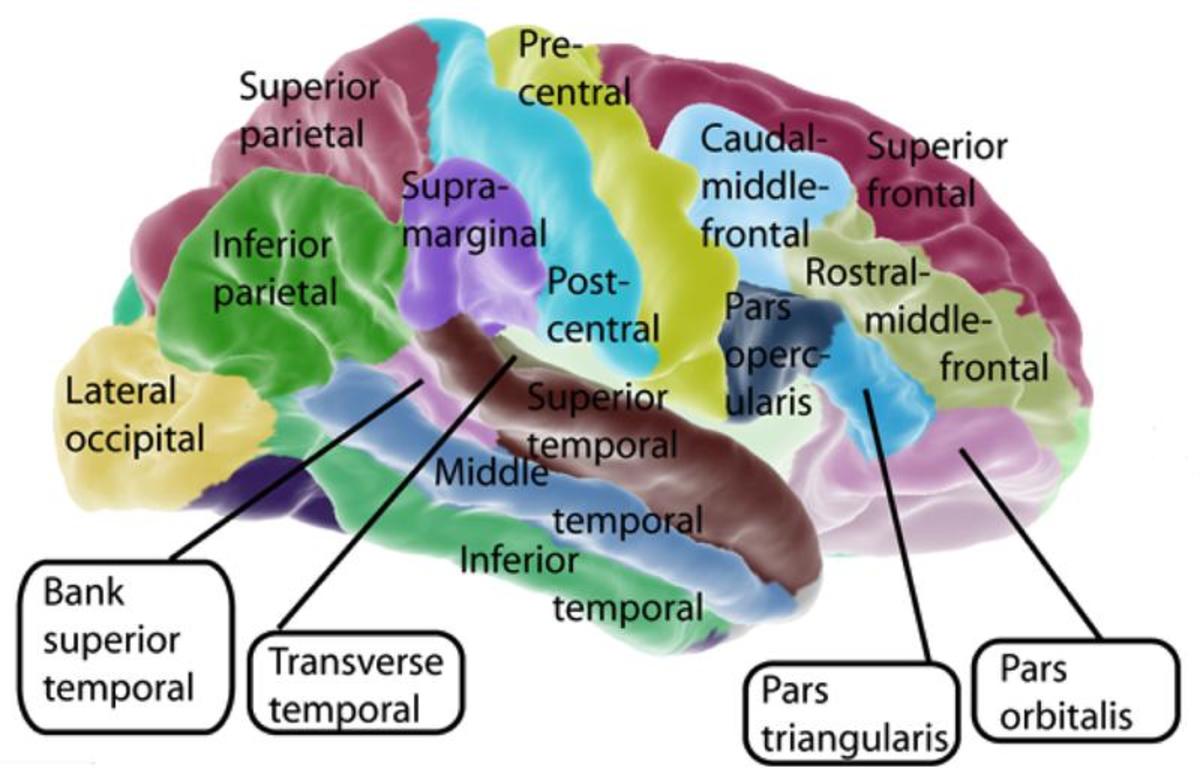

As the journal Science noted, the robot named Adam autonomously hypothesized (formed an educated “guess”) that certain genes in the yeast Saccharomyces cerevisiae code for enzymes that catalyze some of the microorganism's biochemical reactions. Forming a hypothesis is the first step in the scientific problem-solving process.

Adam is not a humanoid. It is a complex automated computer lab. After developing a hypothesis, Adam designs its own experiments to test it and records the results. It can initiate about 1,000 strain-media experiments a day without human input.

Ross King, a professor of computer science who led the research at Aberystwyth University, stated, “This is one of the first systems to get (artificial intelligence) to try and control laboratory automation.”

Adam has been programmed with extensive background knowledge. The claim that Adam holds background “knowledge,” rather than mere information, triggers a philosophical debate. Adam uses this “knowledge” to reason and to guide its interactions with the physical world.

A scientist follows the scientific method by forming hypotheses and then experimentally testing the deductive consequences of those hypotheses.

Adam has been programmed to follow “Occam’s razor”: all else being equal, a simpler hypothesis is more probable than a complex one.

The Future is Here

One example of an autonomous machine already in use is the Global Hawk, a robotic plane that can fly autonomously at altitudes above 60,000 feet (18.3 kilometers), roughly twice as high as a commercial airliner, and as far as 11,000 nautical miles (20,000 kilometers), half the circumference of Earth.

The US government has already invested billions to automate warfare: artificial intelligence that can weigh options and carry out self-determined actions. The US Army and Navy have both hired experts in machine ethics to prevent the creation of an amoral, Terminator-style killing machine that murders indiscriminately. The concern is: whose “morals” will be used? Whose “values” will be implemented?

Colin Allen, a philosopher of science at Indiana University, has just published a book summarizing his views, entitled Moral Machines: Teaching Robots Right from Wrong.

Who is to Decide What is Right and Wrong?

Ronald Arkin, a computer scientist at Georgia Tech who is working on software for the US Army, has written a report concluding that robots, while not "perfectly ethical in the battlefield," can "perform more ethically than human soldiers."

Researchers are now working on "soldier bots" that would be able to identify targets and weapons, and to distinguish between enemy forces, such as tanks or armed men, and soft targets, such as ambulances or civilians.

Noel Sharkey, a computer scientist at Sheffield University, says, “It sends a cold shiver down my spine. I have worked in artificial intelligence for decades, and the idea of a robot making decisions about human termination is terrifying.”

Related Article:

http://hubpages.com/hub/Artificial-Intelligence-Revolution-Smarter-Than-Humans