Do you want a self-driving car that prioritises the lives of white people over black people?

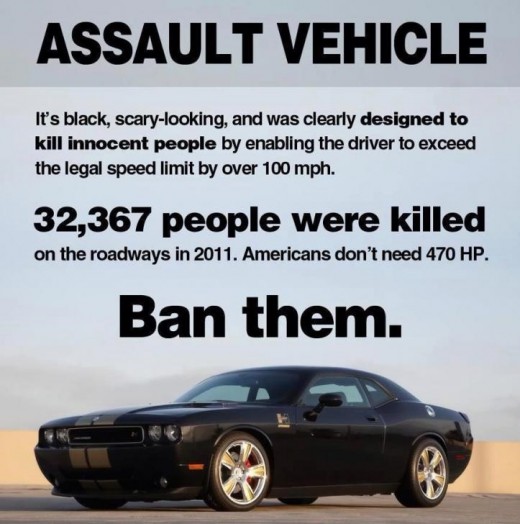

When you get behind the wheel of your Toyota Camry and rush off to work every morning, you’re probably taking the biggest risk you'll take all day. Car accidents remain one of the leading causes of injury and death in North America. But we never think of the vehicles themselves as responsible for this fact, because that would be absurd. It’s not like anybody has ever seriously put forward the notion that we should ban all vehicles.

But what if it were possible for a car to make moral decisions? What would those decisions be? And what would it actually mean to say that the car itself is making the decisions? Hopefully we are all aware that self-driving vehicles are currently being designed and tested by Google and Tesla, to name just a couple, and that much of the underlying technology has been in development since the 1980s. So all of these questions and ideas are being considered already. Let's cover just the three questions above and then open things up for discussion in the comments.

What decisions does an automated vehicle have to make?

While following the most appropriate navigation path to arrive safely at your destination, your self-driving car will need to maneuver through traffic and around obstacles, follow signage, and stay aware of crosswalks, pedestrians, cyclists and everything else at all times. The operating system will follow a prioritised algorithmic response sequence in the event of a potential accident. At each stage of the potential accident, the operating system will decide the best (and least harmful) course of action and then take it, with no time for human input. That's how we hope to prevent most accidents from happening at all.

Some of those decisions might include:

- Abruptly reducing speed to avoid a rear-end collision, while also monitoring the vehicles behind to avoid stopping too quickly.

- Swerving (left or right?) when slowing or stopping in time is deemed impossible. Or swerving to avoid a pedestrian who has either jumped, fallen, or been thrown in front of the vehicle.

- Weighing one swerve against another: swerving left into possible oncoming traffic and hoping another smart car reacts to avoid you might be better than swerving right into a crowd of pedestrians, rear-ending a family with three children in the car, or running into a baby carriage that has rolled into the street.

These are just a few examples of situations in which a self-driving vehicle will have to make an important decision in a split second based on dynamic information. It will follow the algorithm as written by us, so we need to make these decisions now for every scenario imaginable and then write them into the code.
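To make "a prioritised algorithmic response sequence" a little more concrete, here is a minimal Python sketch of the idea: check the options in a fixed order and take the first one that applies. Everything in it is an assumption made for illustration. The `Situation` fields, the `choose_maneuver` function, the maneuver names and the order of the checks are all hypothetical, and no real vehicle is claimed to work this way.

```python
from dataclasses import dataclass


@dataclass
class Situation:
    # Hypothetical yes/no readings that a perception system might supply.
    can_stop_in_time: bool   # braking alone would avoid the obstacle
    tailgater_close: bool    # a vehicle is following too closely behind
    left_lane_clear: bool    # no oncoming traffic in the left lane
    right_side_clear: bool   # nothing and nobody on the right-hand side


def choose_maneuver(s: Situation) -> str:
    """Walk a fixed priority list and return the first option that applies."""
    if s.can_stop_in_time and not s.tailgater_close:
        return "brake_hard"
    if s.can_stop_in_time:
        # Stopping works, but ease off so the car behind is not hit.
        return "brake_gradually"
    if s.right_side_clear:
        return "swerve_right"
    if s.left_lane_clear:
        return "swerve_left"
    # Nothing is clear: shed as much speed as possible before impact.
    return "brake_hard"


# Example: cannot stop in time, right side blocked, left lane empty.
print(choose_maneuver(Situation(False, False, True, False)))
```

The order of those `if` statements is exactly the kind of decision that somebody has to make in advance and write into the code.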

So what does it mean to say that vehicles have decisions to make?

Well, there are still more decisions that we haven't discussed: moral decisions. If there is no course of action that entirely avoids harm or tragedy, a morally relative decision needs to be made. It is better to hit the rear end of an SUV than a person; it is better to run over one person than three; it is better to run over an adult who might survive than a young child who will certainly be killed.
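One way to picture how three such comparisons could fall out of a single rule is to score each option by its expected number of deaths and pick the lowest score. The Python sketch below does only that. The function names and every probability in it are invented for illustration; they are not real crash statistics, and this is one possible rule, not the rule any manufacturer uses.

```python
def expected_deaths(death_probabilities):
    """Sum the chance of death for each person an option puts at risk."""
    return sum(death_probabilities)


def least_harmful(options):
    """Return the name of the option with the lowest expected deaths.

    `options` maps an option name to a list holding one probability of
    death per person affected. All numbers used here are made up.
    """
    return min(options, key=lambda name: expected_deaths(options[name]))


# Rear-ending an SUV versus hitting a pedestrian.
print(least_harmful({"rear_end_suv": [0.05], "hit_pedestrian": [0.6]}))

# One person versus three.
print(least_harmful({"one_person": [0.6], "three_people": [0.6, 0.6, 0.6]}))

# An adult who might survive versus a child who certainly would not.
print(least_harmful({"adult": [0.5], "child": [1.0]}))
```

Notice what the sketch leaves out: when two options have the same score, it simply returns whichever was listed first. A rule like this has nothing to say about the case where all else is equal.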

If all else is equal, and there is no other way out, and a vehicle has to decide between running onto a sidewalk and hitting a black person or a white person, who does it choose and how? I'll make it easier.

Let's say two girls (one white and one black) are riding down the street on their bikes and you are coming up behind them. A car with an entire family in it is coming towards you in the opposite lane, and you have your family in your vehicle. The white girl falls off her bike and rolls right into your immediate path.

Do you:

It's a pretty terrible thought that none of us wants to hold onto for too long, but it's unfortunately true that somebody somewhere needs to write this kind of stuff into not just our self-driving vehicles, but all future AI and automated systems that interact with the public. We have to decide; it's that simple.

© 2016 Ghaaz B