Education Discussion: The History and Evolution of Standardized Testing

An Overview:

When the subject of education comes up in conversation, the mind may wander to familiar classrooms or classmates. Perhaps the smell of chalk (or whiteboard marker) is still easy to recall. But if one dwells on the subject for any length of time, the mind will surely find its way to standardized testing. After all, the SAT is required for admission at nearly every American college. As with every subject, however, there are two sides to the coin, and standardized testing is no different. To understand it in the big picture, one must first understand its history: its invention, its evolution (focusing mostly on the Educational Testing Service, hereafter abbreviated ETS), and how it has been viewed in the past. While it is important to see what effects standardized testing has had on the way children are educated today, it would be unwise to ignore the purpose for which such tests were designed and the ways in which they are now being used relative to that intended purpose.

History

Beginning from a historical perspective, the idea of using examination results to determine a person's ability to perform in a certain capacity has been around since 2200 B.C. in ancient China. The emperor would examine government officials once every three years to determine whether they were fit to remain in office. An individual who was not promoted after three examinations (nine years) was relieved of his duties. While this practice was banned in A.D. 1905, it lasted long enough to spread to England, where it was continued (Wardrop, 5-9).

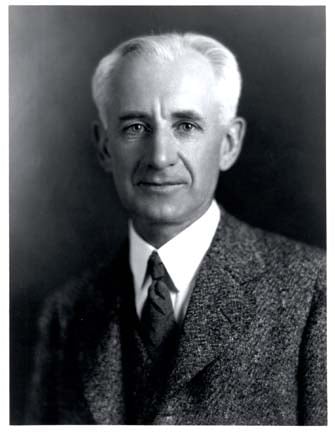

The forefather of the standardized test, if one must be named, would most definitely be Alfred Binet. Binet was, in many respects, an interesting fellow who not only believed that he could devise a method to measure the intelligence of children but, according to his own studies, succeeded. His method would later come to be known as the first form of the IQ test we know today. An individual’s IQ was calculated in a fashion similar to the way a person’s “Brain Age” is calculated in Nintendo’s Brain Age (and its sequel, Brain Age 2). Like Brain Age, the early IQ test reported an age that was lower than, equal to, or higher than the taker’s actual age. In Brain Age, the best possible score is twenty, and any number higher than the player’s actual age reflects negatively. Today the IQ test is not scored in the same fashion, but in the early 1900s this was what was available. It is certainly worth noting that Binet did not believe his test was sufficient for measuring the intelligence of normal children, though which students he would have qualified as normal is unclear. Binet could also foresee great opportunity for abuse of the test, and unfortunately it was not long before his foresight was confirmed. Henry Goddard, an American psychologist, wrote in 1914, “It is perfectly clear that no feeble-minded person should ever be allowed to marry or become a parent” (Owen 179). According to Owen, “Goddard advocated using intelligence tests to identify people unsuited for human propagation. In a speech to Princeton University in 1919, Goddard stated that ‘The fact is, that workman may have a 10 year intelligence while you have a 20. To demand for him such a home as you enjoy is as absurd as it would be to insist that every laborer should receive a graduate fellowship. How can there be such a thing as social equality with this wide range of mental capacity?’” (Owen 180).

In 1916, hoping to take over the scene with what was almost literally a revolution, Lewis M. Terman wrote the following:

“It is safe to predict, that in the near future intelligence tests will bring tens of thousands of …high-grade defectives under the surveillance and protection of society….The time is probably not far distant when intelligence tests will become a recognized and widely used instrument for determining vocational fitness….When thousands of children who have been tested by the Binet scale have been followed out into the industrial world, and their success in various occupations noted, we shall know fairly definitely…the minimum ‘intelligence quotient’ necessary for success in each leading occupation.” (Owen 180).

Oddly enough, it was World War I that really drove the idea of intelligence testing home. It was neither Goddard nor Terman who introduced it to the scene, however, but Robert M. Yerkes, a professor at Harvard University who came up with something called the “Army Mental Tests”. These tests were designed specifically to measure the intelligence of the takers. Unfortunately for many, they were terribly flawed. My favorite example of a flawed question, from Alpha Test 8, reads as follows: “2. Five hundred is played with: Rackets|Pins|Cards|Dice” (Owen 181). The answer happens to be cards, but the question happens to be completely irrelevant to the matter of intelligence. What matters here is not how intelligent one is but how familiar one is with card games. It is well worth noting that the Alpha tests were given to individuals who were able to read and speak English. The Beta tests, given to individuals who were unable to read, unable to write, or both, were absolutely unprofessional and obnoxious. They involved two pantomiming individuals who pretended to be rather mentally deficient while attempting to relay the instructions to the test-takers (Owen 182).

Unfortunately, standardized testing was used in this fashion to demonstrate, or rather to prove (to certain individuals of a most racist persuasion), that non-white races were of less-than-average intelligence and were thus polluting the gene pool. After all, they had the numbers to prove it, once one disregarded the fact that nearly every other question on the Alpha test, ranging from what Crisco is to the city in which the Pierce-Arrow car was made, had nothing to do with intelligence and much to do with whether the individual being tested was completely integrated into white upper-middle-class society. Almost needless to say, Yerkes had no problem disregarding certain facts. After the war, Carl Campbell Brigham, a psychologist at Princeton, brought the Alpha tests to undergraduates at Princeton University. The results prompted him to devise a more challenging version, a challenge he was more than happy to accept. That more difficult version was nothing less, or more, for that matter, than the Scholastic Aptitude Test, with which we are all familiar.

In 1922, Samuel S. Brooks, a district superintendent in New Hampshire, wrote a book titled Improving Schools. If Owen’s None of the Above bashed standardized testing with a fiery passion, Improving Schools got on its knees and worshipped the concept. Brooks advocated the use of sixteen standardized tests to measure a student’s aptitude in English, mathematics, history, and geography. Seven tests covered English, seven tested mathematical ability, and one test each covered geography and history. Each test was graded on an eight-point scale, with eight being the most proficient grade (tests had not yet been formulated for students above grade eight). Each test had a standard associated with each grade level. For example, a fourth-grader was expected to score at a fourth-grade level on all of the tests, which produces a straight line when graphed. If a fourth-grader attained a score of five in all, or most, test areas, Brooks would recommend that the student be moved into fifth-grade classes. Conversely, students scoring below their expected grade level would be held back. While this may sound like a wonderful idea at first hearing, the reader should note that it does not account for a student who understands the material but performs poorly on standardized tests. It also neglects the possibility of flawed questions on the tests, which, as we have already seen, are not completely absent (Brooks, Chapters 3-6).

In a few short years, the standardized test went from an instrument that was accurate only in measuring the intelligence of children on the fringe of retardation or genius (and even that claim remains untested) to a test whose measurements would ultimately decide the salary and rating of teachers. This is precisely what Brooks advocates in his book (Brooks, Chapter 7). Brooks managed to persuade the school boards to increase the pay of certain teachers by two dollars per week. A two-dollar-per-week raise in 1922 is the equivalent of a $24.55-per-week raise today, or about $4.91 per day over a five-day work week (Friedman). Brooks also hoped to encourage teachers to raise their students’ scores by stating that “they will receive bonuses at the end of the year of five dollars for every whole unit that they increase their [student’s scores] over those of last year, the bonus not to exceed fifty dollars” (Brooks, 81). If the tests had been designed for this purpose, it would be no problem at all, but they were designed for the explicit purpose of measuring the intelligence of children possessing abnormal intelligence for their age.

“At a large number of institutions, requiring SAT scores for admissions doesn’t make any sense at all. It is crazy. But they do, most of them. Some institutions will say, well, we use the scores for non-admissions purposes; we use them for guidance, or for placement. Now, the SAT wasn’t designed for guidance or placement. Other measures would be more appropriate for those purposes. But they don’t do it anyway. Even when they say they do, they don’t use the scores for guidance and placement” (Owen 231).

Evolution

The history of standardized testing in general, including the history of the SAT, is inextricably linked to the way the Educational Testing Service has evolved the test, and evolved with the test, to bring students what they experience today. ETS, founded in 1947, is, according to its website, “a nonprofit, non-stock corporation organized under the education laws of the State of New York. [Their] work is supported through revenue from our products and services, as well as contracts and grants with government agencies, private foundations, universities and corporations. [Their] mission is to help advance quality and equity in education by providing fair and valid assessments, research and related services” (About ETS). From what can be gathered about them, they’d rather be neither seen nor heard unless they’re on the receiving end of a check, in which case they’re all ears and in plain view. This is the corporation that brings us the LSAT, required to be able to practice law, and the PRAXIS series, required to become a teacher in many states (including Pennsylvania); it even once offered a test which qualified those who passed it for the position of ‘professional golfer’. Up-and-coming high-school graduates, however, are bound to become well acquainted with ETS in one way or another, as it is the creator of the Scholastic Aptitude Test, or SAT, which was required by ninety-two percent of American colleges in March of 1980 (Owen, 230). In fact, eighty-eight percent of colleges which accept at least ninety percent of their applicants still require the SAT. If a particular college selects one hundred percent of its prospective students to become actual students, has it really selected? Indeed, for those colleges which accept more than ninety percent of their applicants, can we even be sure that they are using SAT scores to make that decision? If even one other criterion is used in choosing which ninety percent of the applicants are accepted, is that a worthy use of the SAT scores? Rodney T. Hartnett described such admissions decisions in the March 1980 Bulletin of the American Association for Higher Education.

As if the nebulous question of what the SAT is actually used for were not bad enough, just what the test itself measures is up for debate. According to Owen, the test measures “primarily the ability to take E.T.S. tests” (Owen, 133). ETS would say that it measures the student’s aptitude for succeeding in college. Of course, ETS would also say, as it did in its 1982 publication Taking the SAT, that “Your high school record is probably the best evidence of your preparation for college” (Owen, 204). Given this readily available information, a student preparing for the exam could easily ask: “Why, then, am I taking the SAT to determine my aptitude for a college career if (A) many colleges which require it do not use it in any true sense of the word ‘selectivity’, and (B) one can obtain a much better sense of my aptitude for any particular college by looking at my previous experience in high school? And (C), most importantly, perhaps, why am I required to pay $45 to take a test which is both utterly superfluous when it comes to what it measures and ridiculously useless for admissions purposes in at least eighty-eight percent of all instances, unless I apply to a foreign college?” This would be a very observant student.

Worse than the fact that the test cannot even measure what it purports to measure, it asks questions which are entirely unrelated to the matter at hand: college aptitude. Of course, that probably goes some way toward explaining why it is a terrible predictor of college aptitude. If a test asks impertinent questions, the test-taker will respond with impertinent answers. In The Looking-Glass World of Testing, Edwin F. Taylor examines a simpler standardized test than the SAT, one once used to test whether a third-grader understood what a third-grader ought to understand, which probably tests something closer to “As a test-maker, what am I thinking?” Taylor uses many prime examples of flawed questions in standardized testing and compares them most eloquently to Lewis Carroll’s Through the Looking-Glass. The first question discussed, reproduced below, is a classic example of a flawed standardized-test question.

“Scientists study three basic kinds of things – animals, vegetables, and A. People, B. Stars, C. Minerals, D. Foods, E. Religions” (Standardized Testing Issues, 11)

Of course, in this instance one might readily supply what happens to be the ‘correct’ answer, C, Minerals. But anyone who knows even casually what scientists study knows that scientists study people, stars, minerals, foods, and religions. While this may be a case of the test-maker overlooking the obvious fact that scientists study all sorts of things when he really wanted to ask “In the game of Twenty Questions, the opening question is usually ‘animal, vegetable, or’ what?”, it seems that this is the sort of thing that test-makers on a grand scale are accustomed to overlooking. In 1960, the following question appeared on the SAT.

“The burning of gasoline in an automobile cylinder involves all of the following except”

A) Reduction, B) Decomposition, C) An exothermic reaction, D) Oxidation, E) Conversion of matter to energy. (Owen, 48)

In this case, the question presumes that exactly four of the five processes listed take place when gasoline burns in an automobile cylinder. Quite simply, it is plain to see that both reduction and decomposition take place. A student who knows that an exothermic reaction is any chemical reaction that releases heat understands that heat is certainly being released. And, as a matter of course, to say that something is oxidizing is just a fancy way of saying that something is ‘burning’ or ‘exploding’, which would leave E as the correct answer. However, while E happens to be the answer that ETS believed to be correct, it is far from accurate. Owen argues that “[If the student is] unfortunate enough to understand, even if only in an elementary way what [E = mc²] is about [he] finds himself at a distinct disadvantage” (Owen, 49). A student who understands that any reaction releasing energy also converts a minute amount of mass into energy realizes that E, too, describes something that takes place, and so the question has, sadly, no correct answer. With these kinds of questions on the SAT, what’s the point of studying?
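Owen's point about E = mc² can be made concrete with a rough calculation. The sketch below is only an illustration: the figure of about 34 megajoules released per liter of gasoline is a commonly quoted approximation and an assumption here, not a number taken from Owen or ETS, and the function name is hypothetical.

```python
# Rough illustration of mass-energy equivalence applied to burning gasoline.
# ASSUMPTION: about 3.4e7 joules released per liter of gasoline (approximate,
# not taken from the essay's sources).
SPEED_OF_LIGHT_M_PER_S = 299_792_458  # exact by definition of the meter
GASOLINE_ENERGY_J_PER_LITER = 3.4e7   # assumed approximate value


def mass_converted_kg(energy_joules: float) -> float:
    """Return the mass equivalent (in kg) of the given energy, m = E / c^2."""
    return energy_joules / SPEED_OF_LIGHT_M_PER_S ** 2


mass_per_liter = mass_converted_kg(GASOLINE_ENERGY_J_PER_LITER)
print(f"Mass converted per liter burned: {mass_per_liter:.1e} kg")
```

Under that assumption the converted mass is a tiny fraction of a milligram per liter, far too small to notice in a cylinder, but it is not zero, which is all the argument against answer E requires.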

Taylor introduces a new flavor of flawed standardized-test question: the logic error. “If ½ of 6 is 3, then ¼ of 8 is ____.” (Standardized Testing Issues, 11). The possible answers would, of course, be presented as multiple choice. The answer happens to be 2. But the answer would be 2 whether or not half of six is three. What the question wanted to ask was “What is ¼ of 8?” Instead, the test-writer borrowed a variety of question popular in other standardized tests (like the SAT) and, without a proper understanding of how an analogy is supposed to work, threw together a math problem. Of course, a third-grader probably doesn’t know very much about how an analogy works anyway, but one would certainly expect an eleventh-grader to know. Owen’s example of a flawed SAT analogy follows: “42. MAGNET:IRON:: (A)Tank:Fluid (B)Hook:Net (C)Sunlight:Plant (D)Spray:Tree (E)Flame:Bird” (Owen, 45). ETS wants C as the correct answer. However, as Owen proceeds to illustrate, magnetism, not a magnet, is to iron as sunlight is to a plant. Owen points out further differences: iron will also attract a magnet, but plants will not attract sunlight; and if you keep a magnet away from iron for a year, the two will still attract, whereas if you keep a plant away from sunlight for a year, the plant will die and will therefore not be attracted to the sunlight. According to Owen, B seems like a better answer.

“You can pick up a net with a hook, just as you can pick up a piece of iron with a magnet. You can also pick up a hook with a net, as you can a magnet with a piece of iron. Any net can be picked up with a hook, any hook can be picked up with a net; likewise with magnet and iron. You may have a net too big to pick up with a particular hook, or a hook to [sic] big to pick up with a particular net, but the same is true of magnet and iron. The relationship between hook and net remains true no matter how long you keep them apart. Hook and net are both inanimate and both are made of matter” (Owen, 46).

Interestingly enough, according to ETS’s statistics, roughly twenty percent of students answer this question correctly, a rate no better than what would be expected if every student marked one of the five answers at random (Owen, 47, footnote). If perfectly formulated test questions can still produce only a moderate basis on which to judge a student’s aptitude for college, what can be said for an ill-thought-out test with only a moderate success rate?
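The comparison with random guessing is simple arithmetic, sketched below. The function names and the group of 1,000 test-takers are hypothetical and serve only to illustrate the point; the only figure taken from the question itself is that it offers five answer choices.

```python
from fractions import Fraction


def chance_rate(num_choices: int) -> Fraction:
    """Probability of a correct answer when one choice is picked uniformly at random."""
    if num_choices < 1:
        raise ValueError("a question needs at least one answer choice")
    return Fraction(1, num_choices)


def expected_correct(num_students: int, num_choices: int) -> Fraction:
    """Expected number of correct answers if every student guesses at random."""
    return num_students * chance_rate(num_choices)


# The MAGNET:IRON question offers five choices, (A) through (E).
guess_rate = chance_rate(5)
print(f"Chance of guessing correctly: {float(guess_rate):.0%}")

# Hypothetical group of 1,000 test-takers, all guessing at random.
print(f"Expected correct answers out of 1000: {expected_correct(1000, 5)}")
```

With five choices, blind guessing is expected to succeed one time in five, which is the same twenty percent that ETS reported for students who actually attempted the question.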

Final Conclusions

To conclude, the question lingers: Are standardized tests capable of measuring anything more than how well one is able to pass that particular standardized test? Might it not make more sense to test students in the same medium in which they will be required to perform? After all, the test required to obtain a driver’s license is one in which the candidate gets in a car and actually drives. The United States Air Force has flight simulators at its disposal which are sufficiently like actually flying a plane to train its pilots-to-be. If the United States knows that people shouldn’t be able to fly a plane without passing a highly accurate flight-simulation test, or that people shouldn’t be able to drive without passing a nothing-could-be-more-accurate-than-actually-doing-what-you-are-being-tested-for driving test, why is it that the nation’s colleges pretend that a simple standardized test can convey enough information to predict how students will perform in a college setting without their ever having been in one? In this case, ETS is probably right in saying that high-school performance is the most accurate predictor of a student’s future collegiate life, which follows because high school is the closest thing to going to college without actually going to college. On top of all of this, the test-taker is required to wind his way through a maze of badly written questions by poorly trained question-writers. The history of standardized testing, which reaches from four millennia ago to the present day, shows that testing has come a long way and certainly has a long way to go. It also goes to show that, in general, things designed for a certain purpose usually perform best when used in the manner prescribed.

Works Cited

"About ETS." ETS: Educational Testing Service. Educational Testing Service. 7 Dec. 2008 <http://www.ets.org/portal/site/ets/menuitem.c988ba0e5dd572bada20bc47c3921509/?vgnextoid=ce60486a721c7110vgnvcm10000022f95190rcrd&vgnextchannel=4ab65784623f4010vgnvcm10000022f95190rcrd>.

Brooks, Samuel S. Improving Schools by Standardized Tests. Cambridge, MA: Houghton Mifflin Company, 1922.

Friedman, S. M. "The Inflation Calculator." The Inflation Calculator. Westegg. 7 Dec. 2008 <http://www.westegg.com/inflation/infl.cgi>.

Kellaghan, Thomas, George F. Madaus, and Peter W. Airasian. The Effects of Standardized Testing. New York: Springer London, Limited, 1981.

Owen, David. None of the Above: Behind the Myth of Scholastic Aptitude. Boston, MA: Houghton Mifflin Company, 1985.

Standardized Testing Issues. Washington, DC: National Education Association of the United States, 1977.

Tozer, Steven E., Guy Senese, and Paul C. Violas. School and Society: Historical and Contemporary Perspectives. 6th ed. New York, NY: David S. Patterson, 2009.

Wardrop, James L. Standardized Testing in the Schools: Uses and Roles. Monterey, CA: Brooks/Cole Company, 1976.

Zwick, Rebecca. Fair Game? : The Use of Standardized Tests in College, Graduate School, and Professional School Admissions Decisions. New York: Routledge, 2002.