The Problem With Probability

My elder brother Terry is responsible for a real epiphany in my understanding of mathematics. One day at home he turned to me and said, “I bet I can get 10 heads in a row.” Having an interest in mathematics, I knew the probability of this to be 1 in 2 to the power of 10, or 1 in 1,024. So, he had my interest. He did not manage it, but he did do something truly amazing: he could tell ahead of time when he was going to get a tail instead. So he correctly called the result of all ten tosses. He told me his secret: the result of tossing a coin depends on three things:

- Which way up the coin starts

- Whether the number of spins is even or odd

- Whether a person turns it over onto the other hand once it is caught

Knowing these factors, it is very easy to get a head to order. You can start heads up, do an odd number of spins and turn it over; start tails up, do an even number of spins and turn it over; or dispense with step three, in which case you start heads up with an even number of spins, or tails up with an odd number of spins. In other words, if you control these circumstances, the probability of getting a head is always 1 in 1.
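The three rules can be written as a small deterministic function. This is a minimal sketch, assuming that each "spin" is one half-turn (so every spin swaps which face is up) and that the only other factor is whether the coin is turned over onto the other hand; the function name `toss_result` is hypothetical. It also sets the 1 in 1,024 chance of ten heads from uncontrolled tosses against the controlled case.

```python
from fractions import Fraction


def toss_result(start_face: str, spins: int, turned_over: bool) -> str:
    """Predict the face showing, assuming each spin is one half-turn (hypothetical model)."""
    if start_face not in ("heads", "tails"):
        raise ValueError("start_face must be 'heads' or 'tails'")
    # Each half-turn swaps the upper face, so only the parity of the spins matters.
    flips = spins % 2
    # Turning the coin over onto the other hand adds one more swap.
    if turned_over:
        flips += 1
    if flips % 2 == 0:
        return start_face
    return "tails" if start_face == "heads" else "heads"


# Uncontrolled: each toss is treated as a 1-in-2 event, so ten heads is 1 in 2**10.
p_random = Fraction(1, 2) ** 10

# Controlled: each of the four recipes described above fixes the outcome as heads.
recipes = [
    ("heads", 7, True),   # heads up, odd spins, turned over
    ("tails", 8, True),   # tails up, even spins, turned over
    ("heads", 8, False),  # heads up, even spins, not turned over
    ("tails", 7, False),  # tails up, odd spins, not turned over
]
controlled = all(toss_result(*recipe) == "heads" for recipe in recipes)

print(p_random)    # 1/1024
print(controlled)  # True
```

Once the inputs are chosen, the outcome is fixed, which is the sense in which the probability of a head becomes 1 in 1.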

And that was my epiphany.

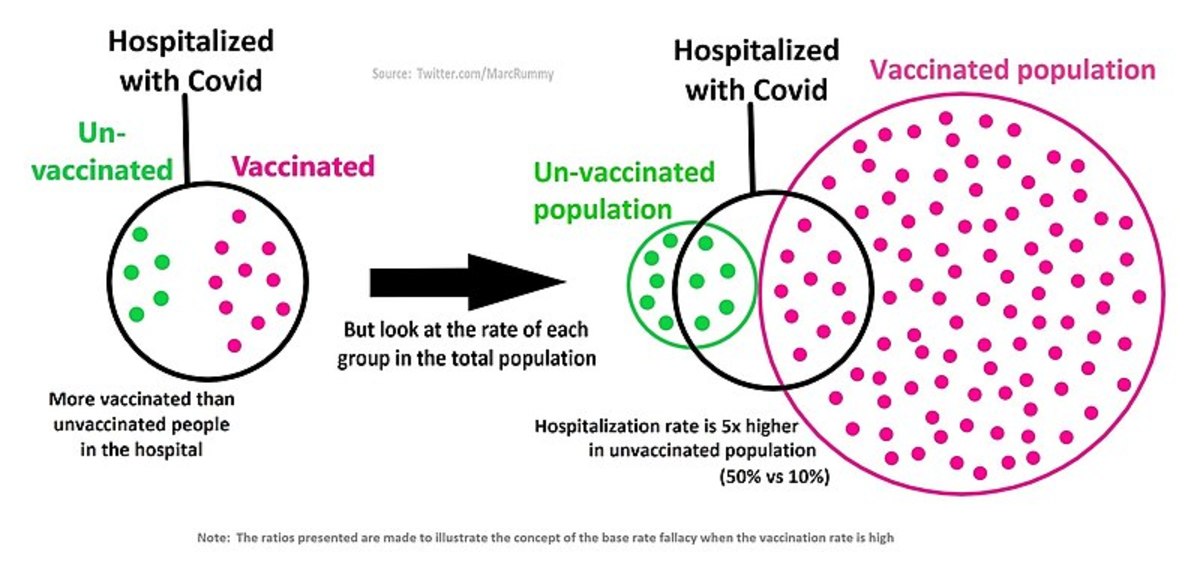

The result of tossing a coin was not a random event but an entirely predictable outcome if you knew or could influence those three things. It then became clear to me that all other events were the same, and that probability was nothing more than a measure of how much was unknown about the outcome of an event. For example, the probability that a six-sided die will land on a particular side is 1 in 6 if no thought is given to how you throw it. However, as soon as you start to study the way the die is thrown, and even to control it, those probabilities change drastically: some increase and some decrease. As you get better at it, the probability of getting the result you want moves closer to 1 in 1.
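One way to picture this is a toy model, assumed here purely for illustration: a thrower with a hypothetical `skill` value between 0 and 1 lands the desired face with probability `skill`, and otherwise the die falls uniformly at random. The desired face then has probability skill + (1 − skill)/6, while each of the other five faces has (1 − skill)/6.

```python
from fractions import Fraction


def face_probabilities(skill: Fraction, target: int = 6) -> dict[int, Fraction]:
    """Toy model (hypothetical): with probability `skill` the thrower lands `target`;
    otherwise the die falls uniformly on one of its six faces."""
    if not 0 <= skill <= 1:
        raise ValueError("skill must be between 0 and 1")
    # Share of probability each face gets from the uncontrolled part of the throw.
    uniform = (1 - skill) / 6
    probs = {face: uniform for face in range(1, 7)}
    # The controlled part goes entirely to the target face.
    probs[target] += skill
    return probs


for skill in (Fraction(0), Fraction(1, 2), Fraction(9, 10), Fraction(1)):
    probs = face_probabilities(skill)
    print(f"skill={skill}: P(6)={probs[6]}, P(1)={probs[1]}")
```

With no skill every face is 1/6; at a skill of 1/2 the target rises to 7/12 while each other face drops to 1/12; at a skill of 1 the target is certain. The model is only a sketch of the idea that control shifts probabilities, not a description of real dice.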

Of course, this led to a real problem. As a tool, probability is really useful for finding out how much is unknown in a situation. You compare real data against the pure mathematical probability, and the more skewed the results, the less is unknown. The problem is that probability underpins an awful lot of other mathematics, a role it has no right to play. For example, if my DNA is successfully matched with DNA found at a crime scene, a probability is given for how likely that match is to happen by accident, e.g. 1 in a million. It is surmised that the smaller the probability, the greater the likelihood that you are the criminal. But does that probability mean anything? Has it ever been checked against real data to see if there is a skew? If it has not, then it is useless, and so any decision based on it will also be useless.
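Comparing real data against the pure mathematical probability can be made concrete with Pearson's chi-squared statistic, a standard goodness-of-fit measure used here as one possible way to quantify skew (the essay itself does not name a method). It sums (observed − expected)² / expected over every outcome, so a larger value means the data stray further from the assumed probabilities. This is a minimal sketch for die rolls; the counts below are made-up illustrations, not real data, and turning the statistic into a p-value would need a chi-squared table or a library such as SciPy's `scipy.stats.chisquare`.

```python
def chi_squared(observed: list[int], probabilities: list[float]) -> float:
    """Pearson's chi-squared statistic for observed counts against assumed probabilities."""
    if len(observed) != len(probabilities):
        raise ValueError("need one probability per outcome")
    total = sum(observed)
    statistic = 0.0
    for count, p in zip(observed, probabilities):
        expected = total * p  # count predicted by the pure mathematical probability
        statistic += (count - expected) ** 2 / expected
    return statistic


fair = [1 / 6] * 6
# Illustrative (made-up) counts from 600 rolls each.
casual_throws = [98, 103, 101, 97, 100, 101]
practised_throws = [60, 62, 58, 61, 59, 300]

print(chi_squared(casual_throws, fair))
print(chi_squared(practised_throws, fair))
```

A statistic near zero means the counts sit close to the 1-in-6 assumption; a large one signals the kind of skew the argument is looking for, which is what a check against real data would reveal.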

Probability is still a vital and essential tool for highlighting how much is unknown about a repeatable event. Any other use is highly questionable.