Artificial Intelligence: A Short History & Overview

Abstract:

Artificial intelligence is a fast-growing, ever-changing field. What research and accomplishments have been completed in this field so far? What are the possibilities and implications for the future? What should the average person know about A.I.? These questions and more can be answered through careful research and data analysis, including current and previous research, current and previous innovations in A.I., and a review of plans for the future of A.I. and its potential for day-to-day use. The fact is that the idea of artificial intelligence has been around throughout history. People in general are fixated on the notion of making our lives easier through the help of intelligent machines and programs. At a time when new uses for A.I. are being created daily and six out of ten Americans own at least one computer (Jenkins, 2010), it is certainly time for the general public to take an interest in increasing their knowledge of artificial intelligence and how it can influence their lives.

By Myranda Grecinger

The accomplishments of the artificial intelligence field so far were once believed by most people to be possible only in fantasy. The idea has been around for centuries but has become a reality only in relatively recent years. Mankind continues to value the search for knowledge and technology that will assist us in our day-to-day activities and responsibilities and make our lives easier and more productive. The research of the past and present, along with current and previous innovations in A.I., continues to show what the possibilities and implications are for the future, and how important it is that the average person work on increasing their knowledge of artificial intelligence and how it can influence their lives.

Artificial intelligence is constantly making us question what is within the realm of possibility and whether or not we have crossed some line of ethical or moral values. What research and accomplishments have been completed in this field so far? What are the possibilities and implications for the future? What should the average person know about A.I.? These questions and more can be answered through careful research and data analysis, including current and previous research, current and previous innovations in A.I., and a review of plans for the future of A.I. and its potential for day-to-day use. The fact is that the idea of artificial intelligence has been around throughout history. People in general are fixated on the notion of making our lives easier through the help of intelligent machines and programs. New uses for A.I. are being created daily, and according to a 2005 study conducted by hard drive manufacturer Seagate, seventy-six percent of Americans own at least one computer (David Jenkins, 2010). It is therefore imperative that the general public take an interest in artificial intelligence and take the time to learn more about it.

When discussing artificial intelligence, it is important to have at least a general understanding of what it is and how it works, and to do that we must first understand the meaning of the term. The term artificial intelligence generally refers to a machine or program that has been created by humans, performs a specific function or a group of specific functions, and possesses the ability to simulate human behavior or logic in some way. The Turing test, a test proposed by Alan Turing, is often applied to new innovations in this field to judge their ability to “think” independently: it asks whether a machine’s responses in conversation can be told apart from a human’s. Artificial intelligence is a very broad term and somewhat open to interpretation; therefore there are several different branches of robotics, computers, and programming that may fall into an A.I. category.

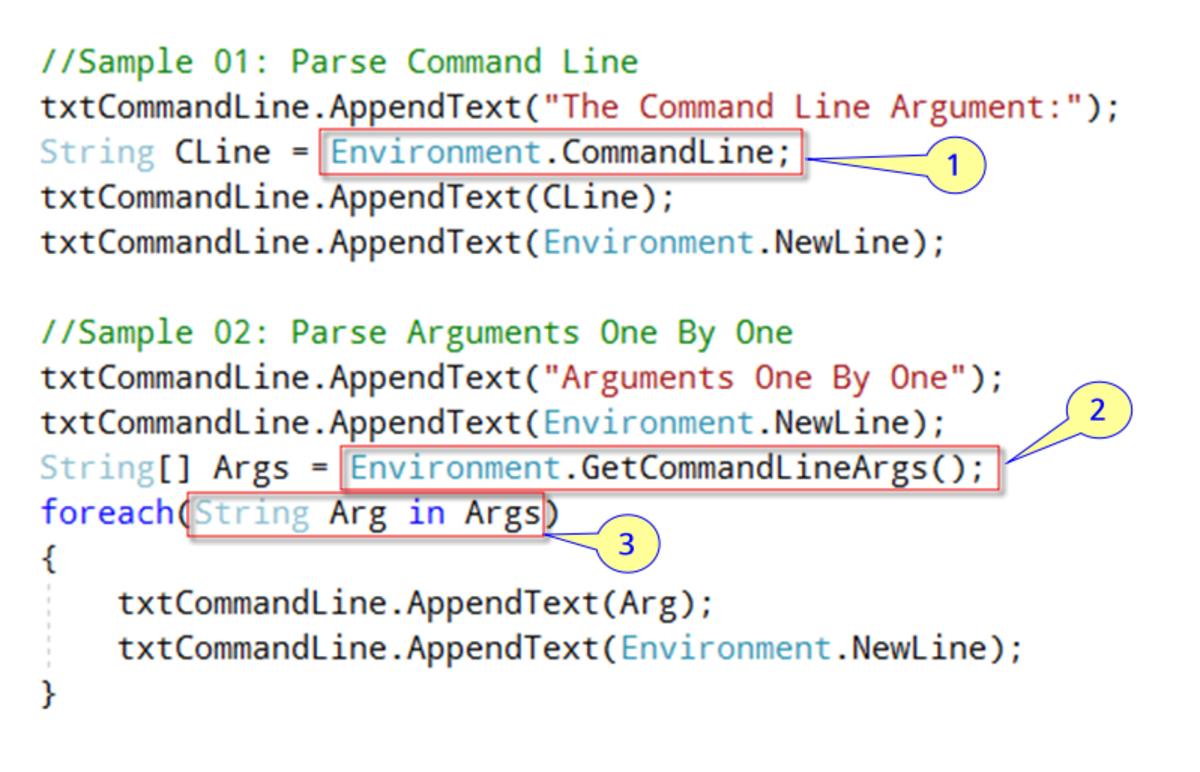

A.I. works through a series of algorithms. Algorithms are defined in Collins English Dictionary as “a set of rules for solving a problem in a finite number of steps, as for finding the greatest common divisor or a logical arithmetical or computational procedure that if correctly applied ensures the solution of a problem”. This means that programmers must write out a specific sequence of steps that tells the machine or program how to solve certain problems or how to do or learn certain things (World Book Online).
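The dictionary definition above mentions finding the greatest common divisor, which happens to be one of the oldest algorithms known. The following is a minimal sketch of Euclid's method in Python; the function name `gcd` is chosen here purely for illustration and is not taken from any of the cited sources.

```python
def gcd(a: int, b: int) -> int:
    """Return the greatest common divisor of a and b using Euclid's method."""
    a, b = abs(a), abs(b)
    # Repeatedly replace the pair (a, b) with (b, a mod b).
    # The remainder shrinks on every pass, so the loop ends in a finite number of steps.
    while b != 0:
        a, b = b, a % b
    return a


if __name__ == "__main__":
    print(gcd(48, 18))  # prints 6
```

Each pass through the loop is one "rule" applied to the numbers, and the procedure is guaranteed to stop, which is what separates an algorithm from a vague set of instructions.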

There are many types and branches of A.I. being worked with today, and I am certain many more are to come; however, there are several that it would be wise for people to have at least a basic knowledge of. These branches of artificial intelligence are what allow the programs or machines they control to carry out their purposes. Machines do not think unless humans program them to do so, and because they are computer based they must be commanded through a series of mathematical codes.

Fuzzy logic is one type of A.I. that was initially researched in the US. Instead of treating every statement as simply true or false, it works with degrees of truth, which allows a program or machine to make its own decisions when its inputs are imprecise (Zhe Tang, 2009). This branch of A.I. is currently being used in many everyday electronics such as self-focusing cameras (Thomas E. Sowell, 2008).
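To make the idea of degrees of truth concrete, here is a small sketch of how a self-focusing device might turn a blur reading into a lens adjustment. Everything in it is an assumption made for illustration: the blur scale from 0 to 1, the three fuzzy sets, the step sizes, and the names `triangular` and `focus_step`. It does not describe how any real camera works.

```python
def triangular(x: float, left: float, peak: float, right: float) -> float:
    """Degree (0.0 to 1.0) to which x belongs to a triangular fuzzy set."""
    if x <= left or x >= right:
        return 0.0
    if x <= peak:
        return (x - left) / (peak - left)
    return (right - x) / (right - peak)


def focus_step(blur: float) -> float:
    """Map a blur reading in [0, 1] to a lens step size (hypothetical units)."""
    blur = min(max(blur, 0.0), 1.0)  # clamp the reading to the assumed scale
    # How strongly does the reading count as low, medium, or high blur?
    low = triangular(blur, -0.5, 0.0, 0.5)
    medium = triangular(blur, 0.0, 0.5, 1.0)
    high = triangular(blur, 0.5, 1.0, 1.5)
    # Rules: low blur -> step 0, medium blur -> step 5, high blur -> step 10.
    # The answer is the average of the rule outputs, weighted by membership.
    return (low * 0 + medium * 5 + high * 10) / (low + medium + high)


if __name__ == "__main__":
    for reading in (0.0, 0.25, 0.5, 1.0):
        print(reading, focus_step(reading))
```

A reading of 0.25 is treated as half "low" and half "medium", so the result lands halfway between the two rules rather than jumping from one to the other.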

Logical A.I. refers to “what a program knows about the world” and how it will react to certain situations (John McCarthy, 2010). Evidence of this type of A.I. can be observed in many modern so-called “smart toys” such as toy robots or electronic cars and trucks. These toys appear to “see” a wall and avoid it, or run into an object placed in their path and then maneuver around it, giving the child using the toy the impression that the toy is “smart”.

Many A.I. programs are built specifically to evaluate possibilities and calculate moves. Search is the branch of A.I. concerned with examining large numbers of possibilities, such as the moves available in a game, and with finding more efficient ways of doing so (John McCarthy, 2010). Search may be used in programs such as a poker odds calculator or a computerized strategy game.
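As a rough illustration of search, the sketch below plays a very small strategy game by trying every possible move. The game is an assumption chosen for its simplicity and does not come from the sources: players alternately take one, two, or three stones from a pile, and whoever takes the last stone wins. The names `can_win` and `best_move` are likewise made up for this example.

```python
from functools import lru_cache
from typing import Optional


@lru_cache(maxsize=None)
def can_win(stones: int) -> bool:
    """True if the player about to move can force a win with this many stones left."""
    # A move is good if it leaves the opponent in a position they cannot win.
    # With zero stones there are no moves, so the player to move has already lost.
    return any(not can_win(stones - take) for take in (1, 2, 3) if take <= stones)


def best_move(stones: int) -> Optional[int]:
    """Return a winning number of stones to take, or None if every move loses."""
    for take in (1, 2, 3):
        if take <= stones and not can_win(stones - take):
            return take
    return None


if __name__ == "__main__":
    print(best_move(5))  # prints 1, leaving the opponent with 4 stones
    print(best_move(4))  # prints None, since every move from 4 loses
```

The `lru_cache` line remembers positions that have already been examined, a small example of the kind of efficiency gain that search research looks for.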

Pattern recognition is used in things like vision programs and language (John McCarthy, 2010). Before a human learns to read, they must first learn to recognize patterns, because language is a phonetic pattern. Therefore, for a machine to learn to read or translate, it must possess a solid pattern recognition foundation. A machine must also possess pattern recognition to interpret what it is viewing, because this aids in distinguishing shape and color variations.
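One very simple way to recognize a pattern is to compare what is seen against a few stored examples and pick the closest one. The sketch below does this for tiny 3 by 3 black-and-white images written as strings of 0s and 1s. The templates, their labels, and the function names are all assumptions made for illustration; real vision programs are far more elaborate.

```python
# Each template is a 3x3 image flattened row by row (1 = dark pixel).
TEMPLATES = {
    "vertical bar": "010" "010" "010",
    "horizontal bar": "000" "111" "000",
    "diagonal": "100" "010" "001",
}


def hamming(a: str, b: str) -> int:
    """Count the positions at which two equal-length strings differ."""
    return sum(x != y for x, y in zip(a, b))


def classify(image: str) -> str:
    """Return the label of the stored template that differs least from the image."""
    return min(TEMPLATES, key=lambda label: hamming(TEMPLATES[label], image))


if __name__ == "__main__":
    noisy = "010" "010" "011"  # a vertical bar with one stray pixel
    print(classify(noisy))     # prints "vertical bar"
```

The noisy image matches no template exactly, yet it is still recognized because it differs from the vertical bar in only one pixel.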

Representation is a mathematically logical branch of A.I. that assists in the programming of facts about the world. This is when the programmer “teaches the machine how it is going to be presented with new material” and how it should interpret the new material. This step has to take place before anything else; otherwise the machine would have no baseline for understanding its other programs (AAAI, 2007).

Inference simply allows the machine to draw conclusions based on the information it has (John McCarthy, 2010). This is currently prominent in A.I. medical diagnostic programs. The program or machine must take a group of clues and make its own decision about what they imply, rather than adding up numbers to get an exact amount.
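Drawing conclusions from clues can be sketched with a handful of "if these facts hold, then conclude that" rules applied over and over until nothing new turns up. The two rules below are invented for illustration and are not medical advice; the names `RULES` and `infer` are also assumptions for this example.

```python
# Each rule pairs a set of required facts with the conclusion they support.
# These rules are toy examples, not real diagnostic knowledge.
RULES = [
    ({"fever", "cough"}, "possible flu"),
    ({"possible flu", "short of breath"}, "refer to doctor"),
]


def infer(facts):
    """Return the given facts plus every conclusion the rules can derive from them."""
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for premises, conclusion in RULES:
            # A rule fires when all of its premises are already known.
            if premises <= known and conclusion not in known:
                known.add(conclusion)
                changed = True
    return known


if __name__ == "__main__":
    print(sorted(infer({"fever", "cough", "short of breath"})))
```

Note that the second rule can only fire after the first has added "possible flu", which is why the loop keeps running until a full pass adds nothing new.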

Learning from experience, planning, and genetic programming are also part of A.I., and there are many more branches still. Of all of them, common sense and reasoning is still the most difficult type of human intelligence to simulate (John McCarthy, 2010). For a machine or program to understand common sense and reasoning, it must learn as we learn, from experience, and it must also be able to interpret information through all of these different branches and then some. Another thing needed for perfect common sense programs is emotion. Although we can make a machine mimic the expression of emotion, thus far we have been unable to make one truly feel it, and much of our common sense is built on emotion. For example, a child wanders into the street and a loud truck squeals to a stop, almost hitting him. The child is terrified and therefore learns that he should not go into the street. It is not because he was told not to; he felt that staying out of the street was the best decision, and that choice was based both on what he learned from the experience and on the emotion he felt during it. We have not yet reached the point of emulating that at a human level.

A.I. has a world of useful applications, and the common person has probably had experience with a simple A.I. program without even realizing it. A game of checkers against the computer, for example, is a commonplace A.I. application that requires some type of strategy and odds calculation. Speech recognition software also requires a certain level of artificial intelligence; we often see this in today’s automobiles. NaturalReader text-to-speech software makes good use of natural language applications and is very useful for people with poor eyesight who want web pages read aloud. Heuristic classification is involved every time a person swipes their debit card: heuristics are what help the system decide whether or not to accept the card. There are so many applications out there that people interact with A.I. every day without ever noticing that they have just encountered it at its finest.

Believe it or not, there is actually a bit of ancient history involved in artificial intelligence today. Many sources state that A.I. began with ancient Egyptian folklore (Think Quest, 2010), but they do not cite which part of that folklore they mean or what evidence supports the claim. That prevents it from being used as part of a credible history, although it is worth noting as part of the theoretical beginnings of the idea. A better example of early man’s consideration of the idea, and perhaps the closest to what we think of as A.I. machines, is the Greek mythological character Talos. Considered to be the guardian of the island of Crete, Talos was said to be a giant made of bronze, forged by Hephaestus in most tellings and given by Zeus, who kept the island safe through a series of preprogrammed activities (Alena Trckova-Flamee, 2005). However, stories of attempts to create machines that mimic life in the ancient world were not always folklore.

The imitation of living intelligence began long ago. Around 400 B.C., Archytas of Tarentum invented a wooden pigeon which was apparently capable of mechanical flight, and in 1509 Leonardo da Vinci built a technologically advanced lion that could walk (Stefan Kanfer, 1986). The Greek philosopher Aristotle is often credited with the creation of “syllogistic logic”, the first formal system of deductive reasoning (George Boger). This evidence clearly displays civilization’s interest in the theory of A.I. long before its official birth, which has occurred only in the last century.

In 1936 mathematician Alan Turing described a theoretical computing machine, now referred to as the Turing machine, opening the door to the invention of the modern computer in the early 1940s. This led scientists to begin earnestly entertaining the idea that the portal to the simulation of human intelligence had been opened; however, the term “artificial intelligence” would not be coined until 1956, at a Dartmouth College conference. It was at this conference that a small group of researchers unveiled the first known computer programs involving logical reasoning (Doron Swade, 2011). In 1963 the United States government awarded a sizable grant to M.I.T. to promote research in the area of “Machine Aided Cognition” (Think Quest, 2005). By now the field and its potential had finally been recognized, and it was on its way to blurring the line between science fiction and science.

The 1970s through the early 1980s brought many new and exciting innovations in the world of A.I. research. In the early 1970s Marvin Minsky began work on “Frame Theory”, a way of storing knowledge in packages of related information. The word “classroom”, for example, would tell the computer about the things that are related to or involved in a classroom, such as students and books. The Prolog language was introduced in 1972, and in the same period David Marr proposed new theories of machine vision that dealt with how images could be interpreted from their shapes, textures and colors. After those initial advancements the explosion of A.I. research was unstoppable. By the early 1980s corporate America became involved and began searching for ways to use A.I. in its business practices. Systems like XCON, an expert system used to configure VAX computers, were highly sought after as the demand for technology surged. Although the mid 1980s saw severe losses and abandoned projects, 1986 was also the year Honda began the humanoid robot research that would later bring Hollywood’s fiction into the realm of reality.

Honda’s P2, the first bipedal self-regulating robot, was completed in 1996. This invention revived and revolutionized the idea that machine aided cognition could be useful in everyday life. In 1997 the company ambitiously moved forward as a leader in the field with the P3, which operated completely independently and was capable of unassisted walking (Honda, 2007). The door was now open to further research and improvements on the design, in the hope that one day a perfected artificially intelligent humanoid helper robot would follow and simplify the lives of every person and every line of work.

ASIMO, an acronym which stands for Advanced Step in Innovative Mobility, is Honda’s latest bipedal robot. Completed in 2000, it is capable of walking on unsteady and uneven ground, even going up and down stairs of varying height, and it can respond to voice commands as well as avoid obstacles in its path. ASIMO was invented with the purpose of human assistance in mind, and the design leans particularly towards helping the elderly and disabled. Honda is adamant that, however humanlike it may seem, the robot is a machine and should be referred to as “it” or “ASIMO” and not with the pronouns “he” or “she”; it is therefore a safe assumption that Honda has no intention of “humanizing” its robots (Honda, 2005). This idea of keeping a bold distinction between machine and human seems to be in complete contrast with many other A.I. research facilities and projects today.

Many A.I. innovations actually aim to humanize machines and programs. A prime example of program humanization is the chat bot. T.A.R.A is a chat bot created by Itherapy, which is owned by Isdera Corp., whose mission is to provide an environment where embarrassing matters can be dealt with in private. T.A.R.A was invented as a method of pre-treating people who may need therapy. She appears on the screen as an animated face whose eyes move to follow your cursor and whose lips move as she speaks. She is capable of replying to questions, carrying on a conversation, offering advice, and administering tests. T.A.R.A gets more intelligent every day and creates her own list of questions that she feels were answered poorly so that her programmers can give her a better idea of how to respond (Itherapy, 2007).

A new project currently under way in Japan displays exactly how far the humanistic traits of artificially intelligent machines have come. Two years ago CB2 was just like every other infant. He had soft skin, a bald head, and arms and legs over which he had very little control. His chest and shoulders rose and fell with each breath, his large eyes followed movement around him, and he was slowly learning to memorize and mimic the facial expressions and sounds of those who cared for him. There were a few differences between CB2 and other boys his age, though; most notably, CB2 is a robot. He has silicone skin filled with sensors that allow him to respond to touch, and his android brain has been programmed to learn like a regular child. Two years later, CB2 has taught himself to walk and is beginning to string words together, which is just what we would expect from any two-year-old boy. His creator, Professor Minoru Asada, hopes that CB2 will lead the way to the creation of a new robot species with learning abilities somewhere between those of humans and other primates. Asada goes on to explain that by the year 2050 he would like to see a robotic team of football players taking on the human world champions (Miwa Suzuki, 2009).

Artificially intelligent inventions have been unveiled around the world in the last few years. Science has seen the creation of automated receptionists and robotic security guards, and most recently in the news, South Korea and Japan have both begun implementing A.I. school teachers. Robots are not the only artificially intelligent creations worth noting, though. Many gaming programs contain A.I. software, including a program known as Deep Blue, which became famous in 1997 for winning a chess match against the reigning world champion.

The medical field has also been revolutionized through A.I. On February 3, 2011, Science Daily ran an article describing how surgeons of the future will be able to use robotic nurses that recognize hand gestures. Juan Pablo Wachs, an assistant professor of industrial engineering at Purdue University, explains that the research and developing technology emerging in this area will help reduce the potential for infection as well as shorten the length of surgery (Juan Pablo Wachs, 2011).

The US military began using A.I. technology during Operation Desert Storm in missile systems and surveillance (World Book Online). A.I. is now being used in many different ways, including training and test simulators, flight plan creation, and even language and cultural studies. A.I. systems have become a valued part of military strategy and have undoubtedly contributed to saving lives in the field (AAAI, 2001).

There is one other project that no good A.I. discussion should be without: Web Bot. Web Bot has been the buzz of cyberspace for nearly ten years. To some it is all sci-fi rumors and malarkey, while to others it is the technological equivalent of some of the most renowned prophets of all time. Created in 1997, Web Bot’s primary mission was to send out spiders through the internet, just like a search engine, and collect data to assist in the prediction of stock market trends, but some believe that Web Bot tapped into something of far more importance. Web Bot is said to have begun collecting information from the collective ideas of the world via the World Wide Web and predicting major events and catastrophes such as the September 11th attacks on the World Trade Center. Currently such claims are unproven, but with the track record of A.I. thus far, given a little more time perhaps even we skeptics will be convinced of its supposed abilities (John Danz Jr., 2010).

The fact is that there is no telling how far artificial intelligence research will go, or how quickly. The search for a way to mimic life and intelligence in one form or another will continue as long as we populate this planet, because we as a species like to find ways to make things easier on ourselves almost as much as we like to pretend that we are superior to all other beings. There are still some questions left to be answered, however, and the questions regarding ethics and morality require attention. Where do we draw the line and say that is enough? Simulating the human body could mean better prosthetics for amputees, but should we do it so well that it eventually becomes difficult to tell the robots from the humans? What place in the world does a machine have if it starts out as an infant and learns and progresses with age? If and when it ever reaches adult-level consciousness and begins thinking about thinking, does it then earn the same rights given to all people? These are tough questions with no simple answers, but they are no longer questions that we could never fathom being faced with. Just ask the creators of CB2.

Artificial intelligence is a constantly changing field that has consistently made leaps and bounds in the realm of possibility. The innovations that have been completed in this field were once the stuff great movies were made of: think A.I., Bicentennial Man, I, Robot, and who could forget Minority Report? This idea has been a part of human consciousness from the traceable beginnings of civilization. Mankind will continue to search out new ways to make our lives easier, but at what cost? What happens when a robotic teacher and a teacher’s assistant for every classroom become cheaper and more efficient than a physical person? After all, robots do not join unions or fraternize with their students. What happens to all of the young men and women in nursing school today when their hopes and dreams of working in surgery go up in smoke because their local hospital has implemented A.I. surgical assistance? The list goes on and on, but considering the damages has never been science’s strong suit.

We must value the search for knowledge and technology that will assist us in our day-to-day activities and responsibilities in order to make our lives easier and more productive. Yet the research conducted today and yesterday could be the undoing of tomorrow. What the possibilities and implications are for the future will depend on our actions from this point on. Will the responsibility to humankind be too much for us to bear in the face of all of this new technology, or will we stay focused on the original intended purpose, that machines are created to assist us in our day-to-day activities and responsibilities in order to make our lives easier and more productive? The importance of the average person increasing their knowledge of artificial intelligence and how it can influence their lives becomes more evident day by day.

References

Boger, G. (n.d.). Modernity of Aristotle’s Logical Investigations. Retrieved January 30, 2010, from http://www.bu.edu

Honda. (2007, December). ASIMO. Retrieved from http://asimo.honda.com

Jenkins, D. (2010, August 2). Survey Reveals PC Ownership Figures [Article]. Retrieved from http://www.gamasutra.com

Kanfer, S. (1986, December 22). Living: In All Seasons, Toys Are Us. Retrieved from http://www.time.com

Litman, D. (n.d.). Artificial intelligence. Retrieved February 11, 2011, from http://www.worldbookonline.com

McCarthy, J. (2008, September). Concepts of Logical AI (Stanford University, Ed.). Retrieved from Computer Science Department http://www-formal.stanford.edu

Sowell, T. E. (2008, September). Fuzzy Logic for "Just Plain Folks" [Online Tutorial]. Retrieved from http://www.fuzzy-logic.com

Suzuki, M. (2009, April 4). Japan child robot mimics infant learning. Breitbart AFP News. Retrieved from http://www.breitbart.com

Thinkquest. (1997, June 21). An Introduction to the Science of Artificial Intelligence. Retrieved from http://library.thinkquest.org

Trckova-Flamee, A. (2005, November 19). Talos [Article]. Retrieved from http://www.pantheon.org

Wachs, J. P., Kölsch, M., Stern, H., & Edan, Y. (2011, February). Future Surgeons May Use Robotic Nurse. Science Daily. Retrieved from http://www.sciencedaily.com

© 2011 Myranda Grecinger