Application Modernization & Rationalization Strategy for Investment Bank IT Division

Are you an IT Head working for a reputable international bank?

Are you tired of maintaining multiple trade applications in which several business functions are common to all of them but are still coded separately for each?

Are you facing challenges in providing high performance trading infrastructure to the bank?

Are you maintaining legacy technology and fear the growing cost burden and scarcity of skilled resources?

Are you looking for ways to modernize infrastructure?

Well, I can guarantee that you are not the only one. These questions are a real nightmare for some, and with skyrocketing competition in the investment banking industry, the demand for a high-performance trading infrastructure is growing day by day. It has become one of the major causes of stress for investment banking IT Heads in recent times.

Let us put some facts together and face them.

- A large bank today has between 100 and 150 trade and related applications spread across multiple shores.

- This huge number is the result of unfocused IT policies in the past, which gave rise to unmanaged demand from different sub-groups within the investment bank, each wanting an exclusive set of trade applications to meet its own needs. Trading activities for different asset classes are typically managed by these different sub-groups. In the past, these sub-groups did not consider which portions of business functionality could be borrowed from existing applications, or which of the new functionality they were building could be shared with others. This caused a lot of duplication over time, and it still exists today.

- Roughly 25% to 50% of the features needed by trade applications are common across asset classes, and since each sub-group has its own exclusive set of trade applications, the cost of maintaining this duplication across the investment bank is huge.

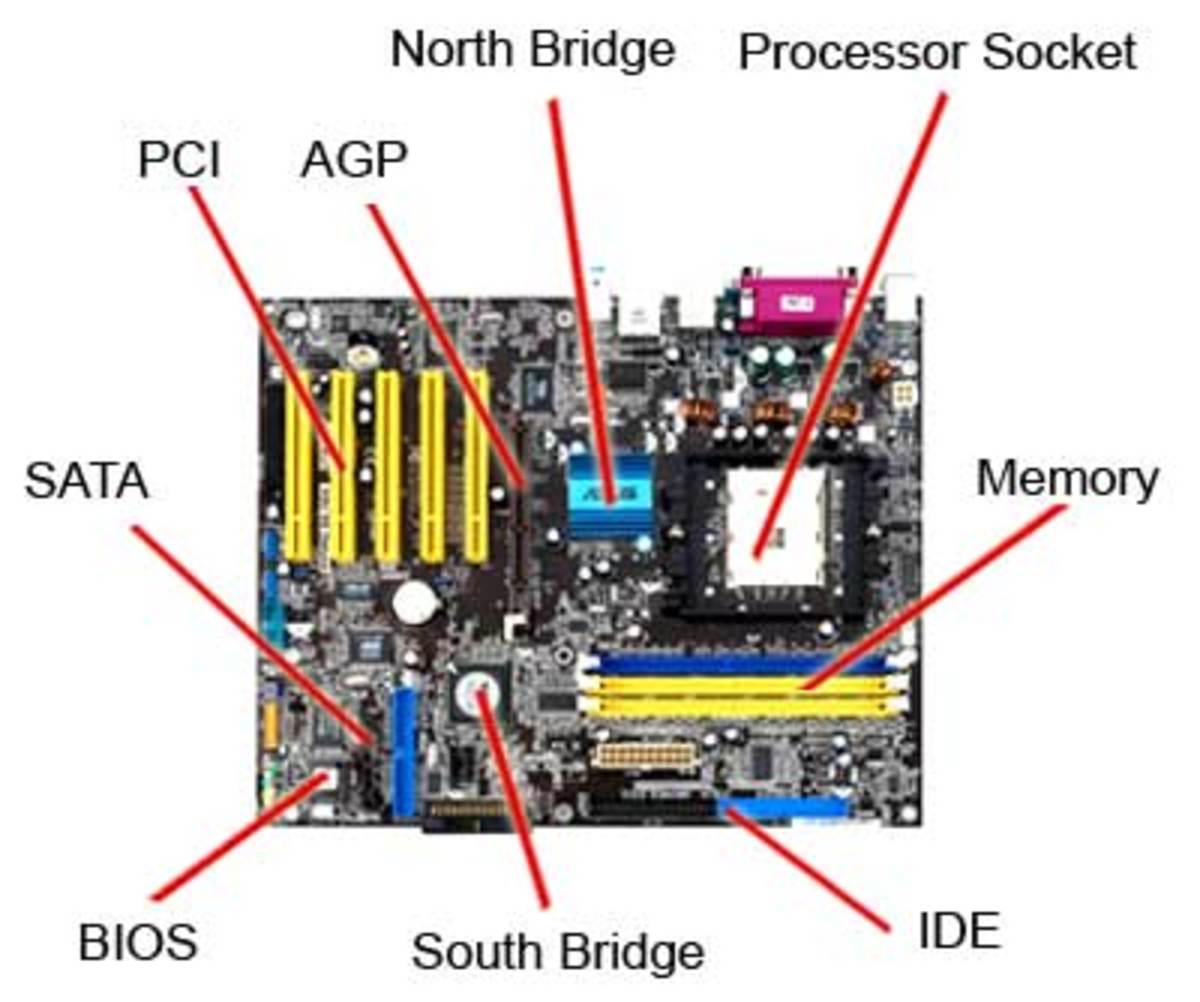

- Another consequence of the absence of a focused IT policy in the past is that different groups used different technology platforms for their applications. Since these disparate technologies do not connect with each other directly, it takes extra investment to make them talk. Some of these technologies are in the legacy category today, such as COBOL / DB2 and Natural / Adabas.

- These applications may or may not be connected in an optimal way, which causes additional trade latency.

- Industry estimates suggest that the cost and risk of maintaining legacy systems will become intolerable by 2015, for the following reasons:

  - Skilled legacy resources are becoming increasingly rare.

  - Legacy specialists need to be paid more to keep them motivated to retain their skills. In some cases, their skill allowances are five times those of a present-day Java / .Net developer.

  - Legacy vendors are no longer willing to support older versions of their products and are notifying users of the date until which each version will be supported. They are also charging heavily for legacy support.

- The performance demand for these trade applications can range between 20 and 600 trades per second per application. Over a 24-hour day, that translates to roughly 1.7 million to 50 million trades per day, so the average is around 25 million trades a day per application. Clearly, with 100 such applications in place, we are looking at an infrastructure that must support 2.5 billion trades a day without faltering. Scary, isn't it?

- Legacy modernization has its own high costs and risks. In most people's experience, the cost is about the same as developing the application afresh, and the risk is losing 'bread & butter' business logic in the migrated or converted code. The latter has a greater negative impact than the former.

- Many modernization companies claim to have automated tools and techniques for line-by-line conversion that, according to them, bring the cost down to 60% of the development cost and also cut the risk of missing business logic in the final delivery. However, this approach needs careful consideration.
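The throughput figures in the list above can be checked with a few lines of arithmetic. This is a minimal sketch that assumes round-the-clock trading (86,400 seconds per day) and exactly 100 applications; the variable names are mine. The exact results come out slightly higher than the rounded 25 million and 2.5 billion used in this article.

```python
# Assumption: trades arrive evenly over a full 24-hour day.
SECONDS_PER_DAY = 24 * 60 * 60
APPLICATION_COUNT = 100  # lower end of the 100 to 150 range quoted above


def trades_per_day(trades_per_second: int) -> int:
    """Convert a sustained per-second rate into a daily volume."""
    return trades_per_second * SECONDS_PER_DAY


low = trades_per_day(20)      # slowest application
high = trades_per_day(600)    # fastest application
midpoint = (low + high) // 2  # simple average of the two extremes
total = midpoint * APPLICATION_COUNT

print(f"per application: {low:,} to {high:,} trades/day")
print(f"midpoint:        {midpoint:,} trades/day")
print(f"all applications: {total:,} trades/day")
```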

So the list above boils down to the following question, which is simple to ask but hard to answer.

How do we modernize the bank's trade infrastructure for the current age, within cost and time budgets, so that it supports at least 2.5 billion trades a day?

The targets would be as follows.

- Retiring legacy in a phased and cost effective manner with the least possible risk.

- Deciding the target technology and infrastructure that will take legacy technology's place.

- Deciding the tools and techniques for application migration.

- Deciding whether to perform legacy modernization in-house or outsource it.

- Deciding on the set of vendors who have the right tools and skills to modernize legacy applications.

- Rationalizing applications by removing duplication of functionality. We need to reach a state where reusable functions / services across all applications are identified and all applications are re-engineered to call these reusable pieces.

- Making the infrastructure strong enough to support roughly 2.5 billion trades a day.

The following is my take on how the above can be achieved in a planned way.

With 50% to 70% of the bank's IT investment still in legacy technologies, the ideal time frame for completing rationalized modernization of applications would be around 6 to 10 years. We need to make sure that most business-critical functionality moves out of legacy technology between 2015 and 2020 (modernization) and that it exists in reusable form (rationalization).

Start by categorizing the applications within the investment bank as follows:

- By the revenue they generate. This is a measure of how dear they are to the business, and hence it carries the most weight in the decision.

- By application complexity, as it is one guide to roughly estimating the time frame to migrate or rationalize. The complexity calculation would take into account the number of function points (e.g., the count of screens), how many other applications it connects to, the programming language used, the business logic, etc.

- By the number and types of users of each application. This would give a rough estimate of the user re-training costs involved.

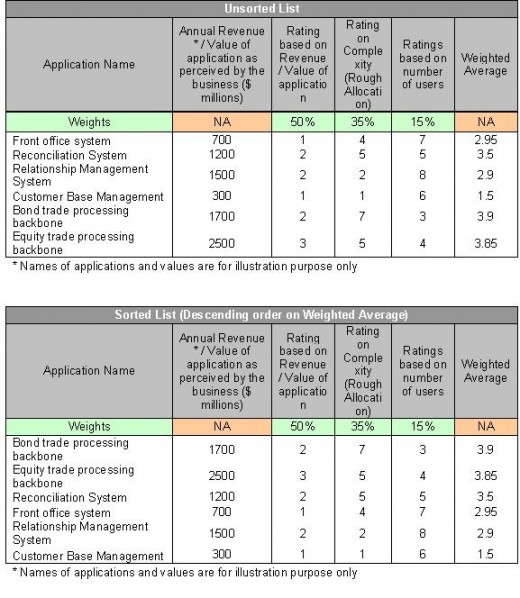

Decide on a strategy to prioritize the list of applications based on the above parameters. I would suggest rating each application on a scale of 1 to 10 on each parameter and then taking a weighted average. Sort the resulting list in descending order of the calculated weighted averages.

Prioritizing on this basis would allow money to be spent in the correct sequence, thus enabling better budgeting. The weights I would give to the above parameters are: Revenue Generation / Business Impact - 50%, Complexity - 35%, Number & types of users - 15%.

Example below should suffice.

The first one on the list after sorting would be the first target for rationalization / modernization.
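As a rough illustration of the scoring and sorting described above, here is a minimal Python sketch. Only the 50 / 35 / 15 weights and the 1 to 10 scale come from this article; the application names and ratings are invented purely for the example.

```python
from dataclasses import dataclass

# Weights suggested in the article; each rating is on a 1-10 scale.
WEIGHTS = {"revenue": 0.50, "complexity": 0.35, "users": 0.15}


@dataclass(frozen=True)
class AppRating:
    name: str        # hypothetical application name
    revenue: int     # revenue generation / business impact
    complexity: int  # application complexity
    users: int       # number and types of users


def weighted_score(app: AppRating) -> float:
    """Weighted average of the three ratings, rounded for readability."""
    score = (app.revenue * WEIGHTS["revenue"]
             + app.complexity * WEIGHTS["complexity"]
             + app.users * WEIGHTS["users"])
    return round(score, 2)


def prioritize(apps: list[AppRating]) -> list[AppRating]:
    """Highest weighted score first: the first entry is the first target."""
    return sorted(apps, key=weighted_score, reverse=True)


# Illustrative ratings only.
apps = [
    AppRating("FX Reporting", revenue=3, complexity=4, users=9),
    AppRating("Bond Trading", revenue=9, complexity=6, users=4),
    AppRating("Equity Blotter", revenue=6, complexity=8, users=7),
]

for app in prioritize(apps):
    print(f"{app.name:15} {weighted_score(app):.2f}")
```

In practice the ratings would come from the business and IT assessments of each application, and the weights could be tuned if the bank values the parameters differently.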

Next, it would be helpful to form two groups. The first will focus on rationalization of already modern, recent applications, and the other will focus on modernization / migration of legacy ones.

The list worked out above would be distributed between these two groups working in parallel. This helps in several ways. First, since rationalization and modernization require different skills and competencies, each group can focus better and hence become more productive. Second, the parallelism reduces the time frame for completing the entire list.

Key things to consider for these two groups are:

Rationalization Group

Best tools and techniques to identify reusable functionality across multiple applications.

My suggestion would be to form a sub-group of business people / analysts within the Rationalization group who would communicate and coordinate among themselves and with the business groups to identify the business functions used within each application. They would then identify the functions that are common across many applications. For example, a trade blotter could be a common piece needed across multiple trade applications.

Business analysts would then pair up with technicians in the group to arrive at a more fine-grained list of the technical components that need to be extracted and hosted as individualized services. For example, a blotter screen could be hosted as a shared resource that many applications can re-use.

The individualized services would then need to be made customizable at run time according to the needs of the calling applications. As a simple example, a bond trade application may want to show a yield column in the blotter, while an equity trade application would not, because the column makes little sense there.

Run-time customization requires the service to be written so that customization parameters are accepted as input alongside the business data at the time of the service call. If the service is designed to maintain a session per calling application, the customization parameters are required only on the first call and are then re-used for subsequent calls.
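The blotter example can be sketched as follows. This is a minimal, in-memory illustration and not a real service framework: `BlotterService`, `get_rows`, the `caller_id` values and the column names are all hypothetical. The customization parameter (the list of columns) is supplied on the first call and remembered per calling application after that.

```python
from typing import Optional

# Hypothetical set of columns the shared blotter knows how to show.
ALL_COLUMNS = ("trade_id", "instrument", "quantity", "price", "yield")


class BlotterService:
    """Shared blotter that keeps one customization session per caller."""

    def __init__(self) -> None:
        self._sessions: dict[str, tuple[str, ...]] = {}

    def get_rows(self, caller_id: str, trades: list[dict],
                 columns: Optional[list[str]] = None) -> list[dict]:
        # Customization parameters arrive with the business data.
        if columns is not None:
            unknown = set(columns) - set(ALL_COLUMNS)
            if unknown:
                raise ValueError(f"unknown columns: {sorted(unknown)}")
            self._sessions[caller_id] = tuple(columns)
        # Later calls re-use whatever the caller configured first.
        if caller_id not in self._sessions:
            raise ValueError(f"{caller_id} must pass columns on first call")
        selected = self._sessions[caller_id]
        return [{col: trade.get(col) for col in selected} for trade in trades]


service = BlotterService()
trade = {"trade_id": 1, "instrument": "BOND-A", "quantity": 100,
         "price": 99, "yield": 4}

# The bond application asks for the yield column on its first call ...
print(service.get_rows("bond-app", [trade],
                       columns=["trade_id", "price", "yield"]))
# ... and does not need to repeat the customization afterwards.
print(service.get_rows("bond-app", [trade]))
# The equity application simply leaves the yield column out.
print(service.get_rows("equity-app", [trade], columns=["trade_id", "price"]))
```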

Hey! Are we not talking about Service Oriented Architecture (SOA) here? Well, to a large extent, yes. It helps cut duplication costs drastically.

Rewrite applications to call these individualized services instead of having the functionality coded within each application. The individual calls will add some latency and will disturb transaction boundaries as well, but caching and distributed transaction features built into the SOA implementation can help counter this.

Distributed transactions, however, work against performance: they slow down overall response times for users because of the time taken in handshaking between all parties involved in the transaction. A better way is to supplement the applications and services by designing a counter transaction for each transaction and implementing the transactional features asynchronously.

A transaction within an application or service would then be allowed to finish without waiting for completion signals from the other parties involved. If one of those parties later notifies it, asynchronously, of a failure, the application or service would run the counter transaction to reverse the effects of the earlier transaction.

Each application or service that receives a failure notification would need to follow the same steps. The advantage is that we achieve high performance with transactions, as we don't have to wait for other parties; the downside is the additional cost of implementing counter transactions. Also, a design based on counter transactions may not be suitable for all scenarios. It is suitable for cases where money or security account overdrafts are allowed, because a transaction that is later reversed can leave an account temporarily overdrawn.

Services would need to be wrapped within proper authentication and authorization layers. The latencies caused by these can't be avoided, in my opinion. Their effect, however, can be minimized by deploying on a parallel processing infrastructure, as explained later in this article.

The interface points with other applications would similarly need to be converted to temporary reusable services. Once all applications have been rationalized, these temporary services would cease to exist, as all applications would be calling services, either synchronously or asynchronously. Applications would not call other applications in this model, so no EAI (Enterprise Application Integration) solutions are expected in the final model.
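The counter transaction idea can be sketched as below. This is a simplified, single-process illustration under my own assumptions: `AccountService` and its method names are hypothetical, the failure notification is delivered by a plain method call rather than a real messaging layer, and overdrafts are allowed, as the approach requires.

```python
class AccountService:
    """Commits locally at once and keeps a counter transaction on file."""

    def __init__(self, balances: dict[str, int]) -> None:
        self.balances = dict(balances)
        # txn_id -> (account, amount that would undo the original change)
        self._counters: dict[str, tuple[str, int]] = {}

    def apply(self, txn_id: str, account: str, delta: int) -> None:
        # No waiting for other parties; the balance may go negative.
        self.balances[account] = self.balances.get(account, 0) + delta
        self._counters[txn_id] = (account, -delta)

    def on_confirmed(self, txn_id: str) -> None:
        # Every other party succeeded: the counter is no longer needed.
        self._counters.pop(txn_id, None)

    def on_failure(self, txn_id: str) -> bool:
        # Asynchronous failure notice: run the counter transaction once.
        entry = self._counters.pop(txn_id, None)
        if entry is None:
            return False
        account, counter_delta = entry
        self.balances[account] += counter_delta
        return True


# Two hypothetical services taking part in one trade, txn "T1".
cash = AccountService({"client-1": 300})
securities = AccountService({"client-1": 0})

cash.apply("T1", "client-1", -500)      # pay for the purchase straight away
print(cash.balances)                    # temporarily overdrawn

# Later the securities side reports that it could not deliver.
securities_ok = False
if securities_ok:
    cash.on_confirmed("T1")
else:
    cash.on_failure("T1")               # reverse the earlier debit
print(cash.balances)
```

The sketch deliberately ignores durability and duplicate notifications beyond the simple "run once" guard; a production design would need both.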

Decide upon the best infrastructure and architecture to host these re-usable business functions / services

The architecture, as we have already worked out, is suggested to be SOA. Some people advocate "Webservices-based SOA", but when performance expectations are this high, and Webservices-based implementations are known to have high latency, I feel it is not suitable for building trade services. In my opinion, Webservices are needed only in B2B scenarios. Within the investment bank, I see the need for an approach where messaging between services uses a flat message structure that takes less time to marshal and un-marshal. Avoid XML wherever possible. Although many trade message structures are standardized as XML, e.g. FpML, I still recommend avoiding it unless we need to talk to 3rd parties. Believe me, it is the key to building fast-responding services. For 3rd party interfaces, we should build additional services that convert between the internal flat message structures and the standard XML formats.

The deployment of trade services should always be on a parallel processing infrastructure to match mainframe speeds. Grid computing is recommended here. It can greatly improve processing times by distributing the complex trade processing logic across multiple grid nodes and then aggregating the partial results.
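To show what a flat internal message and a separate XML conversion service might look like, here is a minimal sketch using Python's standard `struct` and `xml.etree.ElementTree` modules. The field layout, field names and XML element names are hypothetical and chosen only for illustration; in particular, the XML produced here is not FpML.

```python
import struct
import xml.etree.ElementTree as ET

# Hypothetical fixed-width layout, network byte order, no padding:
# trade id (8 bytes), instrument (12 bytes), side (1 byte),
# quantity (4 bytes), price in minor units (8 bytes) = 33 bytes.
TRADE_LAYOUT = struct.Struct("!Q12scIq")
INT_FIELDS = ("trade_id", "quantity", "price_minor")


def marshal(trade: dict) -> bytes:
    """Pack a trade into the flat internal wire format."""
    instrument = trade["instrument"].encode("ascii")
    if len(instrument) > 12:
        raise ValueError("instrument code longer than 12 bytes")
    return TRADE_LAYOUT.pack(trade["trade_id"], instrument,
                             trade["side"].encode("ascii"),
                             trade["quantity"], trade["price_minor"])


def unmarshal(data: bytes) -> dict:
    """Unpack the flat wire format back into a trade."""
    trade_id, instrument, side, quantity, price = TRADE_LAYOUT.unpack(data)
    return {"trade_id": trade_id,
            "instrument": instrument.rstrip(b"\x00").decode("ascii"),
            "side": side.decode("ascii"),
            "quantity": quantity,
            "price_minor": price}


def to_xml(trade: dict) -> str:
    """Boundary service: internal trade -> XML for a 3rd party."""
    root = ET.Element("trade")
    for key, value in trade.items():
        ET.SubElement(root, key).text = str(value)
    return ET.tostring(root, encoding="unicode")


def from_xml(document: str) -> dict:
    """Boundary service: XML from a 3rd party -> internal trade."""
    root = ET.fromstring(document)
    trade = {child.tag: child.text for child in root}
    for name in INT_FIELDS:
        trade[name] = int(trade[name])
    return trade


trade = {"trade_id": 42, "instrument": "XYZ", "side": "B",
         "quantity": 100, "price_minor": 995000}
wire = marshal(trade)
print(len(wire), "bytes on the internal wire")
print(len(to_xml(trade)), "characters as XML")
```

Only the boundary services pay the XML cost; internal services exchange the fixed-size binary record.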
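The distribute-then-aggregate pattern can be sketched as follows. To keep the example self-contained, a local thread pool stands in for the grid nodes; that substitution is my assumption, and a real grid would ship the chunks to separate machines through its own scheduler. The function names and the notional calculation are likewise only illustrative.

```python
from concurrent.futures import ThreadPoolExecutor


def split(items: list, parts: int) -> list[list]:
    """Cut the workload into at most `parts` roughly equal chunks."""
    size = max(1, -(-len(items) // parts))  # ceiling division
    return [items[i:i + size] for i in range(0, len(items), size)]


def notional_of_chunk(trades: list[tuple[int, int]]) -> int:
    """Work done on one node: partial result for its share of trades."""
    return sum(quantity * price for quantity, price in trades)


def grid_notional(trades: list[tuple[int, int]], nodes: int = 4) -> int:
    """Distribute chunks to the workers, then aggregate the partials."""
    chunks = split(trades, nodes)
    with ThreadPoolExecutor(max_workers=nodes) as pool:
        partials = list(pool.map(notional_of_chunk, chunks))
    return sum(partials)


# Illustrative workload: (quantity, price) pairs.
trades = [(quantity, 2) for quantity in range(1, 101)]
print(grid_notional(trades, nodes=4))
```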

Modernization Group

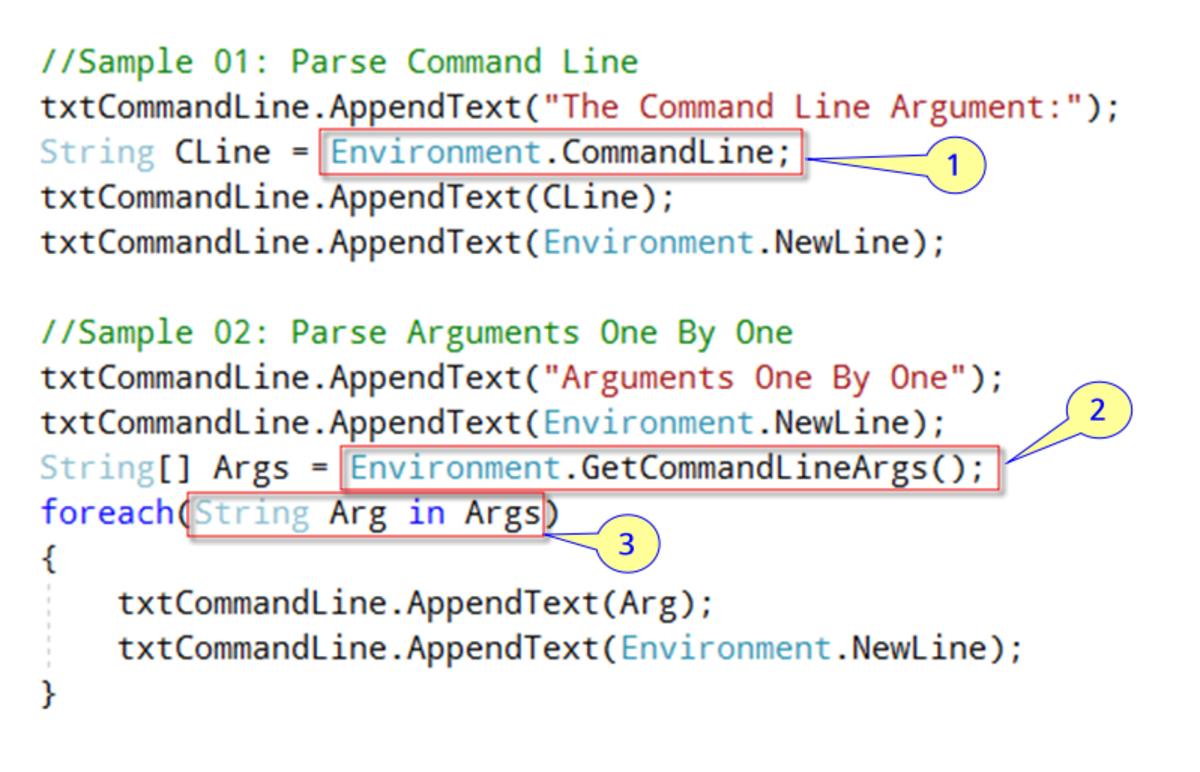

Preferred technology platform to which we would like the legacy applications to migrate.

J2EE and .Net both qualify, as both are enterprise-grade technologies. I would prefer Java for its 'write once, run anywhere' nature. With JVMs that have HotSpot JIT compilation enabled, Java performance is almost on par with .Net.

Whether to go for line-by-line conversion or an object-oriented re-design of legacy applications?

My suggestion is to choose a vendor that offers both line-by-line conversion tools and design re-engineering to object-oriented form. As of today, such vendors are in short supply, and they may well be fewer than the number of fingers on our hands.

Line-by-line conversion tools will reduce the risk of losing precious business logic, while object-oriented design will make the migrated application more maintainable.

I would not recommend doing modernization in-house but rather outsourcing it to vendors, because it will take a long time to develop modernization competency in-house and we don't seem to have that much time in hand.

Whether to go ahead and rationalize a modernized legacy application?

Most certainly! It is highly recommended. In fact, rationalization should be attempted during the design re-engineering of a legacy application. Business analysts and technicians from the Rationalization group can help the Modernization group by keeping them informed of the common services available for re-use, best practices, etc.

What about deployment platform and architecture?

Again, a grid is the ideal platform to host modernized applications or the modernized and subsequently rationalized services. In my opinion, we can hope to achieve 2.5 billion trades a day only on a grid. A grid can help cut a lot of latency by employing parallel processing techniques.

The above should bring about a balanced change in the IT scene within your investment bank and provide a solid, high-performance infrastructure backbone for your business.