Chatterbot

Chatterbots (also called chatbots) are computer programs created to simulate intelligent human conversation through speech, text, or both. These programs use artificial intelligence (AI) or other programming methods in an attempt to converse naturally with humans. Some chatterbots strive to be indistinguishable from humans, while others try to stand out with superhuman knowledge or features. Most chatterbots simply look for keywords, phrases, and patterns that have been programmed into their databases, but some use more advanced techniques. So far, no chatterbot has been able to completely fool humans into believing it is one of them through its knowledge of natural language (see 'Turing Test').

Overview

It takes a great deal of knowledge and comprehension to hold a conversation in any language. Imparting this knowledge to a computer program in a meaningful fashion is no easy task, and it has kept realistic chatterbots out of reach for many years. Because of the advanced programming involved in creating a chatterbot with true AI, many people take a more direct route: they use a flat database to store input words and phrases that the program can pick up on, along with the outputs to give in response. Some of these chatterbots must be hand-fed the thousands of lines of code it takes to seem intelligent, while others are programmed to add to their database automatically when they encounter something new. This kind of call-and-response chatterbot uses weak AI, where the ability to reason is not needed. A chatterbot that uses more sophisticated methods, including grammar rules, knowledge of definitions, and other routes to natural language understanding, would be said to have strong AI.
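The flat-database approach described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the code of any particular chatterbot: the `RESPONSES` table, the `DEFAULT_REPLY` text, and the `reply` function are all hypothetical names invented for this example.

```python
# Hypothetical flat "database": each known keyword maps to one canned reply.
RESPONSES = {
    "hello": "Hello! How are you today?",
    "weather": "I hear it is lovely outside.",
    "name": "My name is Demo Bot.",
}

# Assumed fallback used when no keyword is recognized.
DEFAULT_REPLY = "Tell me more."


def reply(message: str) -> str:
    """Return the canned reply for the first known keyword in the message."""
    for word in message.lower().split():
        cleaned = word.strip(".,!?")  # ignore trailing punctuation
        if cleaned in RESPONSES:
            return RESPONSES[cleaned]
    return DEFAULT_REPLY


if __name__ == "__main__":
    print(reply("Hello there"))
    print(reply("What is your name?"))
    print(reply("I like trains"))
```

No reasoning takes place here: the program only checks whether a stored keyword appears in the input, which is why this style of bot counts as weak AI.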

Weak AI Chatterbots

Weak AI chatterbots can be programmed in several different ways. Virtually any programming language can be used to create a bot. Many are built with PHP, XML, Java, C++, and so on. There are two general ways of programming the databases (or brains) for these types of chatterbots.

The first is an empty database chatterbot. It starts out knowing absolutely nothing. It saves what people say to it, and their responses to its comments, in a database until the next time someone uses that same phrase. While this kind in particular uses only weak AI to repeat what others have said, it can be programmed in a more advanced manner to actually "learn" and decide for itself how to answer, using strong AI. An empty database chatbot also has several disadvantages. It takes hundreds of hours of conversation for one to gain enough information to hold a decent conversation. These bots are also prone to picking up bad habits from the many people who talk to them online. Many people abuse them in an online setting and teach them obscene or intentionally wrong things. Because of this, they generally cannot manage much more than a simple, shallow conversation consisting of comments people have previously made.[3]
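A minimal sketch of the empty database idea follows. It is not how any named chatterbot is implemented; the class `EmptyDatabaseBot`, its methods, and the choice to echo an unknown phrase back to the user are assumptions made for illustration only.

```python
import random


class EmptyDatabaseBot:
    """Hypothetical bot that starts with no knowledge and learns from users."""

    def __init__(self, seed=None):
        self.learned = {}          # normalized phrase -> replies people gave to it
        self.last_bot_line = None  # what the bot said most recently
        self.rng = random.Random(seed)

    @staticmethod
    def _normalize(text):
        # Lowercase and collapse whitespace so similar phrases share one key.
        return " ".join(text.lower().split())

    def new_conversation(self):
        # Forget the previous line so replies are not linked across users.
        self.last_bot_line = None

    def respond(self, user_line):
        # Learn: the user's line is treated as a reply to the bot's last line.
        if self.last_bot_line is not None:
            key = self._normalize(self.last_bot_line)
            self.learned.setdefault(key, []).append(user_line)

        # Answer: reuse a reply that someone once gave to this same phrase.
        key = self._normalize(user_line)
        if key in self.learned:
            answer = self.rng.choice(self.learned[key])
        else:
            # Assumption: with nothing stored, echo the phrase back and
            # wait to see how people answer it.
            answer = user_line

        self.last_bot_line = answer
        return answer


if __name__ == "__main__":
    bot = EmptyDatabaseBot(seed=0)
    bot.respond("Hello")             # bot echoes "Hello"
    bot.respond("Hi, how are you?")  # stored as a reply to "Hello"
    bot.new_conversation()
    print(bot.respond("hello"))
```

Because every stored reply comes verbatim from earlier users, the sketch also shows why such bots pick up whatever bad habits people teach them.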

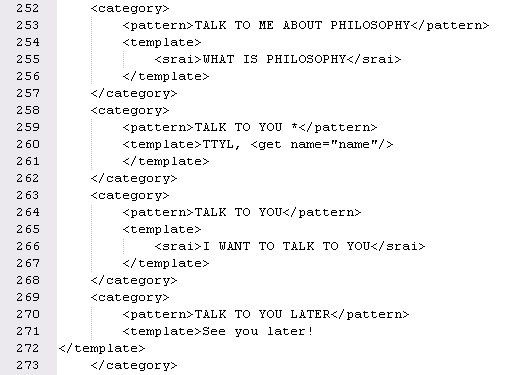

The other type of chatterbot is directly programmed by a creator or botmaster. These bots have pre-programmed questions, phrases, or words, along with instructions on how to respond to them. Their disadvantage is that they require a large amount of programming and lack true AI, because they respond only with exactly what they have been programmed to say. An advantage is that these chatbots can have conversations that are seemingly in-depth, because the botmaster can lay out a conversation path ahead of time and program the bot to remember certain details about users. They are also good for conversations about specialized topics. These types of bots often use wildcards to seem more natural. For example, thousands of sentences can start with “What is a…” The botmaster can program the chatterbot to respond grammatically and logically to any “What is a…” sentence without having to program each one individually.[3]
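The wildcard technique can be illustrated with a short Python sketch. The rule table, the `*` wildcard syntax, and the reply templates below are hypothetical and chosen only for this example; real botmaster tools define their own pattern formats.

```python
import re

# Hypothetical rules: (pattern with * as a wildcard, reply template).
# {0} in a template is filled with the text the wildcard matched.
RULES = [
    ("what is a *", "I am not sure what a {0} is. Can you describe it?"),
    ("my name is *", "Nice to meet you, {0}."),
    ("*", "Interesting. Please go on."),  # catch-all, checked last
]


def compile_rule(pattern):
    # Escape the literal text, then turn each * into a capturing group.
    parts = [re.escape(part) for part in pattern.split("*")]
    return re.compile("^" + "(.+)".join(parts) + "$", re.IGNORECASE)


COMPILED = [(compile_rule(pattern), template) for pattern, template in RULES]


def reply(message):
    # Drop surrounding whitespace and trailing sentence punctuation.
    text = message.strip().rstrip(".!?").strip()
    for regex, template in COMPILED:
        match = regex.match(text)
        if match:
            return template.format(*match.groups())
    return "I have nothing to say to that."  # only reached for empty input


if __name__ == "__main__":
    print(reply("What is a capybara?"))
    print(reply("My name is Ada."))
```

A single rule covers every sentence that begins with "What is a", so the botmaster does not need to write each one out individually. Rules are tried in order, which is why the catch-all pattern sits at the end of the list.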

Some of the better-known weak AI chatterbots include ELIZA (1966), PARRY (1972), and more recently ALICE (1995). Dr. Richard Wallace created a programming language specifically for ALICE called AIML (Artificial Intelligence Markup Language). In 2001 the specifications for AIML were published, which led to a firestorm of "Alicebots." Alicebots are other chatterbots that use the AIML standards in order to simulate intelligent conversation. Many of these bots also use some or all of the original ALICE code, which has been released.

Uses

Weak AI chatterbots are the most common type, as they are fairly simple to program and teach. They are very commonly used on instant messaging services like AOL Instant Messenger, Yahoo! Messenger, Windows Live Messenger, ICQ, and XMPP-based services such as Google Talk. They are also common in chat rooms (often Internet Relay Chat), on websites, and in games. These chatterbots can be commercial, for-profit programs such as SmarterChild on AIM, individually created bots for entertainment, or spam bots used to flood rooms with links and other unwanted information.

Strong AI Chatterbots

Chatterbots with strong AI use a much more complicated method of responding, and actually attempt to understand and interpret what is being said. Strong AI is sometimes called "artificial general intelligence"[1] because of the amount of general intelligence required in many human activities (such as speech). For a chatterbot to be considered to have strong AI, it cannot simply spit back pre-programmed replies; it must display some level of understanding and create responses mainly on its own.

Some companies, such as MyCyberTwin, have a semi-strong AI system. They use a combination of strong and weak AI to allow individuals to customize a chatterbot for themselves and friends. They also work with businesses to set up virtual assistants for their websites. There are also individuals working with strong AI, such as Rollo Carpenter, whose Jabberwacky is an advanced empty database chatterbot. It has learned all its replies from others talking to it, and it decides for itself, based on these previous conversations, how best to respond. This approach has its flaws, though, and the bot often makes mistakes. Because of the trouble that even strong AI chatterbots have with natural language, many programmers focus more on retrieving information for the user than on making a seamless conversation.

Turing Test

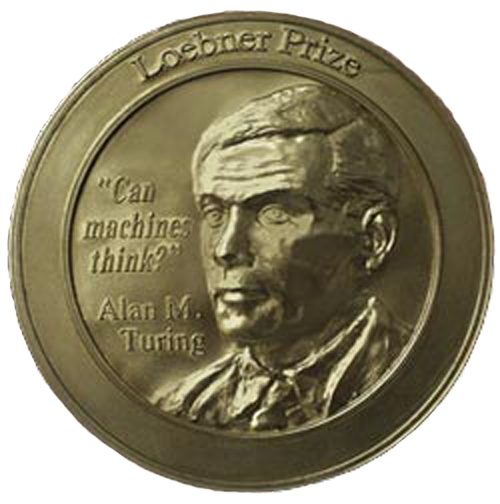

In 1950, Alan Turing described what is now known as the Turing Test in a paper entitled "Computing Machinery and Intelligence." In this test, a human acting as a judge has a natural conversation (through typing) with both a human and a computer acting as a human. If the judge cannot reliably (more than 50% of the time) determine which is which, the computer is said to have passed the test. Three different variations of the test have formed over the years based on Turing's writings. To date, no chatterbot has passed an official Turing Test.
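The pass criterion above amounts to simple arithmetic over a series of trials. The sketch below is one hedged reading of it, not an official scoring procedure: the function names, the list-of-booleans input, and the treatment of exactly 50% as a pass are all assumptions made for illustration.

```python
def judge_accuracy(verdicts):
    """Fraction of trials in which the judge correctly picked out the computer.

    `verdicts` is a hypothetical list of booleans, one per trial:
    True if the judge identified the computer correctly, False otherwise.
    """
    if not verdicts:
        raise ValueError("at least one trial is required")
    return sum(verdicts) / len(verdicts)


def passes_test(verdicts, threshold=0.5):
    # Assumed reading: the computer passes when the judge is right
    # no more than `threshold` of the time.
    return judge_accuracy(verdicts) <= threshold


if __name__ == "__main__":
    print(passes_test([True, False, False, True]))  # judge right in 2 of 4 trials
    print(passes_test([True, True, True, False]))   # judge right in 3 of 4 trials
```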

The main implementation of the Turing Test used on chatterbots is the yearly Loebner Prize, which was started in 1990 by Hugh Loebner. In this competition, judges talk to various humans and chatterbots for five minutes each and then give each a score based on how human they seemed. The chatterbot rated most human is awarded a cash prize ranging from $2,000 to $3,000. There is also a one-time prize of $25,000 for the first contestant that the judges cannot distinguish from a human, causing a human to be ranked beneath it. Another one-time award of $100,000 and a solid gold medal (shown right) will be given to the first chatterbot that cannot be distinguished from a human in a test involving the understanding of text, visual, and auditory input. Once this last prize has been won, the contest will be shut down.

The Turing Test and Loebner Prize have been criticized by many for being incomplete and inaccurate.[2] The tests focus on being able to act as human-like as possible and many have pointed out that a machine can be intelligent without acting completely human-like. On the other hand, the tests are short, and in a brief conversation a judge might not catch repetition from a chatterbot that would otherwise be obvious.

References

1. Voss, Peter (2006), Goertzel, Ben & Pennachin, Cassio, eds., Essentials of General Intelligence: Artificial General Intelligence, Springer, ISBN 3-540-23733-X

2. Artificial stupidity

3. Wynn, William S. "When Will They Take Over?" SDJ Extra Jan. 2006: 76-77.

© 2015 Discover the World