Computer Display devices guide

Display devices

Computer display devices, or monitors as we normally call them, fall into two main categories. The first and most widely recognized is the cathode ray tube (CRT). This is the type of monitor that makes you wonder why it takes up so much desk space now that the second type, the liquid crystal display (LCD), is cheap to buy. Both types of device are driven by a video display adapter or an integrated video controller inside your PC. There are different video technologies, each offering different levels of screen resolution and color depth. The resolution of a monitor refers to the number of horizontal and vertical pixels that the monitor displays. A resolution of 800 × 600 uses 800 pixels across and 600 pixels down to draw the text or images on the screen. Color depth refers to the number of colors the monitor is able to display. You might think that everyone has the latest monitor, but that is not so. Some companies run servers that need nothing more than an old monochrome monitor for an occasional look at the server details.
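
To make resolution and color depth concrete, here is a small Python sketch. It assumes color depth is stored as a whole number of bits per pixel, which is how video hardware usually counts it; the function names are made up for this example.

```python
def pixel_count(width: int, height: int) -> int:
    """Total number of pixels drawn at a given resolution."""
    return width * height


def colors_for_depth(bits_per_pixel: int) -> int:
    """Number of distinct colors that fit in the given bits per pixel."""
    return 2 ** bits_per_pixel


if __name__ == "__main__":
    # The 800 x 600 example from the text above.
    print(pixel_count(800, 600))  # 480000
    # Color counts that come up later in this guide.
    for bits in (1, 2, 4, 8, 16):
        print(bits, "bits ->", colors_for_depth(bits), "colors")
```

So 4 bits per pixel gives the 16 colors of EGA and VGA, and 16 bits gives the 65,536 colors quoted for XGA.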

CRT monitor

The cathode ray tube (CRT) monitor was the most commonly used display device for desktop computers for many years. It contains an electron gun that fires electrons at the back of the screen, which is coated with light-emitting chemicals called phosphors. The screen glows wherever the electrons strike. The electron beam scans the back of the screen from left to right and from top to bottom to create the image. A CRT monitor's image quality is measured by its dot pitch and its refresh rate. The dot pitch is the shortest distance between two dots of the same color on the screen; a lower dot pitch means better image quality. Most average monitors have a dot pitch of 0.28 mm. The refresh rate, sometimes called the vertical scan frequency, indicates how many times per second the scanning beam redraws the image on the screen. The refresh rate for most monitors varies from 60 to 85 Hz.
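
As a rough illustration of what the refresh rate means for the scanning beam, the sketch below works out how long the beam has to draw one full screen, and a lower bound on how many lines it must draw per second. The function names are hypothetical, and the line-rate figure ignores the blanking time a real CRT needs to move the beam back, so actual horizontal scan frequencies are higher.

```python
def frame_time_ms(refresh_hz: float) -> float:
    """Time available to draw one full screen, in milliseconds."""
    return 1000.0 / refresh_hz


def min_line_rate_khz(visible_lines: int, refresh_hz: float) -> float:
    """Lower bound on lines drawn per second, in kHz (blanking ignored)."""
    return visible_lines * refresh_hz / 1000.0


if __name__ == "__main__":
    for hz in (60, 85):
        print(hz, "Hz ->", round(frame_time_ms(hz), 2), "ms per frame")
    # 600 visible lines (as in 800 x 600) refreshed 85 times a second.
    print(min_line_rate_khz(600, 85), "kHz at least")
```

At 60 Hz the beam has about 16.67 ms per screen; at 85 Hz it has about 11.76 ms, which is why higher refresh rates demand faster electronics.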

LCD monitor

LCD monitors have taken over on the desktop, replacing the larger and bulkier CRT. This is largely due to the number of laptops being made, which has brought the cost of the technology down. There are two main types of LCD monitor.

Active Matrix

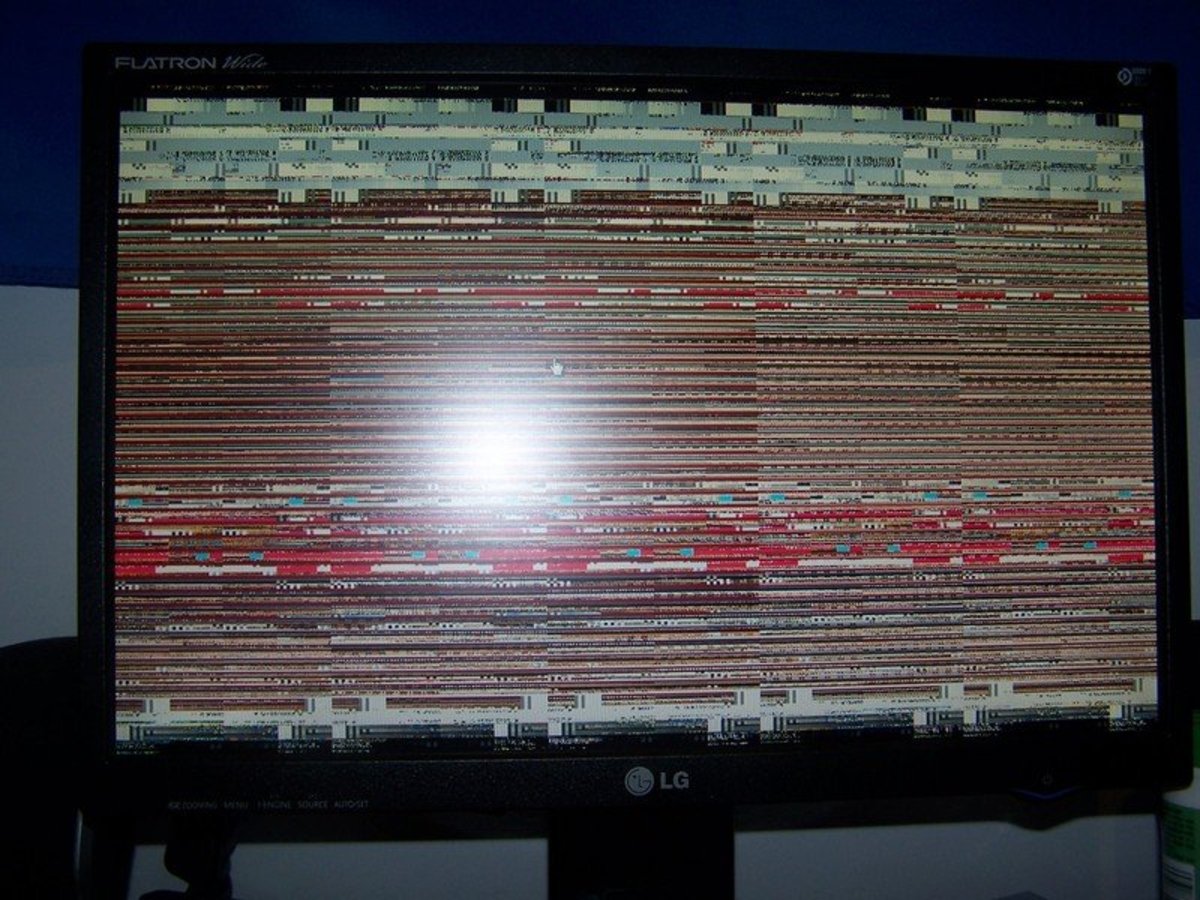

An active matrix LCD screen uses thin film transistor (TFT) technology. The display is made up of a matrix of pixels, and each pixel has its own thin transistor, which is used to align the pixel and switch its color. Active matrix LCDs offer fast response times, good screen resolution, and a crisp picture. The disadvantage is that they consume more power, which can be a problem for laptops.

Passive Matrix

A passive matrix LCD screen uses a simple grid of rows and columns to supply charge to each pixel in the display. Passive matrix screens offer lower resolution, slower response times, and poorer image quality than active matrix screens. However, they are widely used because they are cheap and do the job that most people need.

Monochrome

The earliest video technology for PCs used monochrome (black and white) displays. The early monochrome technology had a maximum screen resolution of 720 × 350 pixels. When it was first released it could display only text, not graphics. The Hercules Graphics Card (HGC) then added graphics, and IT managers could once again play Pac-Man. HGC works in two separate modes: a text mode for text and a graphics mode for images. A monochrome monitor uses a DB-9 D-sub connector, which has nine pins arranged in two rows.

Color Graphics Adapter (CGA)

IBM was the first to sell CGA video technology. It could run at a screen resolution of 640 × 200 pixels with 2 colors, one of which was black. With four colors, the resolution dropped to 320 × 200 pixels.

Enhanced Graphics Adapter (EGA)

IBM then introduced a successor to CGA to overcome this color limit. It was called EGA video technology. EGA was able to display a whopping 16 colors at a screen resolution of 640 × 350 or 320 × 200.

Video Graphics Array (VGA)

The VGA display technology used 256 KB of on-board video memory and was able to display 16 colors at 640 × 480 resolution or 256 colors at 320 × 200 resolution. The difference between earlier technology and VGA is that VGA used an analog signal instead of the previous digital signals for the video output. It wasn't so much a step backward as a way to bring down the price of monitors, which then fueled the growth in home PCs. VGA uses an HDB-15 D-sub connector with 15 pins arranged in 3 rows.
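
The link between VGA's 256 KB of video memory and its two modes can be checked with some quick arithmetic. The sketch below is a simplification: it assumes one screen stored as tightly packed pixels with no overhead, which is not exactly how VGA lays out its memory, and the function names are invented for this example.

```python
VGA_MEMORY_BYTES = 256 * 1024  # 256 KB of on-board video memory


def bits_per_pixel(colors: int) -> int:
    """Smallest whole number of bits able to number `colors` colors."""
    return (colors - 1).bit_length()


def framebuffer_bytes(width: int, height: int, colors: int) -> int:
    """Bytes needed to hold one full screen at the given mode."""
    return width * height * bits_per_pixel(colors) // 8


def fits_in_vga(width: int, height: int, colors: int) -> bool:
    """True if one screen at this mode fits in 256 KB."""
    return framebuffer_bytes(width, height, colors) <= VGA_MEMORY_BYTES


if __name__ == "__main__":
    for mode in ((640, 480, 16), (320, 200, 256), (640, 480, 256)):
        print(mode, framebuffer_bytes(*mode), "bytes, fits:", fits_in_vga(*mode))
```

Under these assumptions, 640 × 480 in 16 colors needs 153,600 bytes and 320 × 200 in 256 colors needs 64,000 bytes, so both fit. 640 × 480 in 256 colors would need 307,200 bytes, more than the 262,144 bytes available, which is consistent with 256 colors being offered only at the lower resolution.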

Super Video Graphics Array (SVGA)

The Video Electronics Standards Association (VESA), set up to create common standards for the industry, developed the SVGA video technology. SVGA initially supported a screen resolution of 800 × 600 pixels with 16 colors, and later developments brought it to 1024 × 768 with 256 colors. SVGA also uses an HDB-15 D-sub connector with 15 pins arranged in 3 rows.

Extended Graphics Array (XGA)

XGA is another video technology developed by IBM. It used a Micro Channel Architecture (MCA) expansion board, or was built into the motherboard, instead of a standard ISA or EISA adapter. The XGA video technology supported a maximum resolution of 1024 × 768 with 256 colors, or 800 × 600 with 65,536 colors.

Digital Visual Interface (DVI)

DVI is a digital video technology, unlike the analog VGA technologies that came before it. DVI connectors look similar to a standard D-sub connector, but their pin configurations are very different. There are three main categories of DVI connector: DVI-A for analog-only signals, DVI-D for digital-only signals, and DVI-I for a combination of both analog and digital signals. The DVI-D and DVI-I connectors each come in two types, single link and dual link.
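
The three DVI categories can be written down as a small lookup table. This sketch models only which kinds of signal each connector type carries, as described above; it says nothing about the physical shape of the plugs or about single versus dual link, and the names `DVI_SIGNALS` and `shared_signals` are made up for illustration.

```python
# Signal types carried by each DVI connector category.
DVI_SIGNALS = {
    "DVI-A": {"analog"},
    "DVI-D": {"digital"},
    "DVI-I": {"analog", "digital"},
}


def shared_signals(first: str, second: str) -> set:
    """Signal types that both connector categories can carry."""
    return DVI_SIGNALS[first] & DVI_SIGNALS[second]


if __name__ == "__main__":
    print(shared_signals("DVI-I", "DVI-D"))  # {'digital'}
    print(shared_signals("DVI-I", "DVI-A"))  # {'analog'}
    print(shared_signals("DVI-A", "DVI-D"))  # set()
```

The empty result for DVI-A and DVI-D shows why an analog-only device and a digital-only device have no signal in common, while DVI-I can talk to either.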

High-Definition Multimedia Interface (HDMI)

HDMI, made popular by the introduction of high-end games consoles, is an all-digital audio/video interface that carries very high resolution graphics and digital audio over the same connector. HDMI provides an easy-to-use interface between a compatible audio/video source, such as a DVD player or games console, and an AV receiver, digital monitor, or digital television (DTV). HDMI transfers uncompressed digital audio and video for the highest, crispest image quality, which is also why it has quite a large, bulky cable.
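
To get a feel for how much data "uncompressed" means, the sketch below multiplies out the picture data for one video mode. The mode used (1920 × 1080, 24 bits per pixel, 60 frames per second) is an assumption chosen for illustration and is not taken from this guide, and the figure counts only the visible pixels, leaving out audio, blanking intervals, and any encoding overhead on the cable.

```python
def uncompressed_video_bits_per_second(
    width: int, height: int, bits_per_pixel: int, frames_per_second: int
) -> int:
    """Raw picture data per second for visible pixels only."""
    return width * height * bits_per_pixel * frames_per_second


if __name__ == "__main__":
    # Assumed example mode: 1920 x 1080, 24-bit color, 60 frames per second.
    bits = uncompressed_video_bits_per_second(1920, 1080, 24, 60)
    print(bits)                        # 2985984000
    print(round(bits / 1e9, 2), "Gbit/s")
```

That is close to 3 billion bits every second for the picture alone, which gives some idea of why the cable needs to be well built.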

S-Video

S-Video, or Separate Video, is an analog video technology that carries the picture as two separate signals. S-Video is sometimes known as Y/C video, where Y stands for the luminance (grayscale) signal and C stands for the chrominance (color) signal. The connector for S-Video is a 4-pin mini-DIN connector with one pin each for the Y and C signals and two ground pins. A 7-pin mini-DIN connector is also sometimes used. S-Video is not considered suitable for high-resolution video because it lacks the bandwidth.
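
The idea of splitting a picture into luminance and color can be sketched in a few lines. This example uses the standard-definition luma weights (0.299, 0.587, 0.114 for red, green, and blue), which are an assumption brought in for illustration and are not stated in this guide. It is also a simplification: a real S-Video cable carries the color information as a modulated analog signal, not as the two plain numbers shown here.

```python
def luma(r: float, g: float, b: float) -> float:
    """Grayscale brightness (Y) of an RGB color, standard-definition weights."""
    return 0.299 * r + 0.587 * g + 0.114 * b


def split_y_c(r: float, g: float, b: float):
    """Split an RGB color into Y and a pair of color-difference values."""
    y = luma(r, g, b)
    return y, (b - y, r - y)


if __name__ == "__main__":
    print(split_y_c(255, 255, 255))  # white: full brightness, almost no color
    print(split_y_c(255, 0, 0))      # pure red: dimmer, strong color part
```

For white or gray the color part comes out at essentially zero, so a black and white picture needs only the Y signal.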

Composite Video

Composite video carries the whole video signal on a single cable. This is different from component video, an analog technology that splits the video signal into separate components: either red, green, and blue or, more commonly on consumer equipment, a luminance signal plus two color-difference signals. Component video uses three separate RCA cables, one for each component.