What are Algorithms and Big O Notation?

What is an Algorithm?

In computer science, programmers come up with software to accomplish specific tasks. When presented with a problem, a programmer will come up with a series of specific steps to solve the problem. This series of specific steps is called an algorithm. An algorithm will take in data, perform the steps programmed, and then give a result.

Outside of computer science, we learn and use algorithms every day. Imagine a parent tells a child, "Go wash your hands before dinner." The child will then go do the task. The child goes to a sink, then turns on the water, then wets his or her hands, then applies soap, then scrubs his or her hands, then rinses his or her hands, and finally turns the water off. This series of steps is an algorithm to accomplish the specific task of washing hands before dinner. The child was not born with this algorithm in his or her head. The parent had to teach the child how to wash his or her hands by showing the child each specific step. If the parent is too general with the instructions, the child might not do what the parent wants.

Using the same analogy, a programmer is like a parent and the computer is like the child. The programmer must teach the computer to accomplish specific tasks. The programmer does this by giving the computer a set of ordered instructions to follow. The programmer must be specific with each step. The computer will perform each step as a literal interpretation, and any generalizations will likely lead to an incorrect result.

Algorithms in computer science can accomplish simple tasks, or they can accomplish complex tasks. Computer science students usually start with simple algorithms that use very little data, such as sorting a small list of numbers in ascending order. The best and most experienced programmers implement algorithms that solve complex problems that use lots of data. An example is the Search Team at Google. They come up with algorithms that collect and index billions of web sites and they come up with algorithms to link search results to the most relevant sites.

What is Big O Notation?

As with many everyday tasks, there are a number of ways to solve problems. For example, some people like to wet their hands first before scrubbing with soap. This may be because their soap is a dry bar and requires water to lather. Other people might just apply the soap and start scrubbing, and only wet their hands when rinsing, because the soap is a liquid soap that does not need extra water to lather. Each of these methods would be a different series of steps, and thus are a different algorithm.

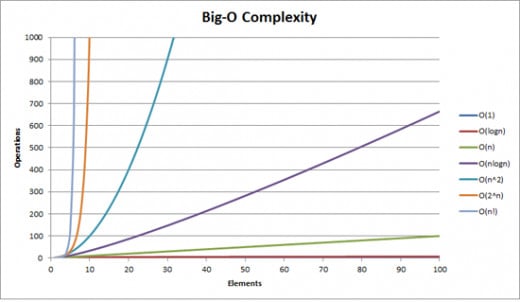

If there are different ways to accomplish the same task, how do we decide which way is better? Generally, we choose algorithms that provide an acceptable result in the shortest period of time. This is where Big O notation comes into play with computer science.

When telling five children to wash their hands before dinner, a parent notices that each child takes a slightly different amount of time to complete the task. Each child may have taken a slightly different amount of time, but performed the same number of steps. This is like different systems running the same algorithm. The data and the number of steps may be the same, but each computer will have different components which will result in a different running time.

If the same parent suddenly has five more children in the house waiting to eat dinner, and all ten children need to wash their hands, can the parent tell us the exact time it will take for each child to wash his or her hands? No, because each child takes a different amount of time to wash his or her hands, even when following the same steps. Each single child may also take a slightly different amount of time when comparing more than one hand washing session. Can the parent approximate how long it will take ten children to wash their hands? Yes, the parent can approximate. The parent can logically say it will take roughly ten times longer than one child washing his or her hands, or roughly twice as long as five children washing their hands.

Big O notation is a way of approximating how long an algorithm will take to complete all steps as the size of input increases. In the hand washing example, the algorithm was the task of washing hands, and the input was the number of children who needed to wash their hands. The parent supervising the children could not tell you exactly how long each child would need to complete the task, but can say that all ten children completing the task was roughly ten times longer than one child completing the task. If the parent is able to observe one child washing his or her hands as a base case, then the parent can easily approximate how much time it will take for the entire group to finish.

In mathematics, Big O notation is typically written as a function. For example, consider:

T(n) = O(n)

The "O" is the Big O notation part of the approximation. Here, O represents the complexity of the hand washing algorithm, T represents the approximate amount of time, and n represents the number of children. This is read, "T of n is the order of magnitude of n." What this means is that given the number of children n, the approximate amount of time for all children to finish washing their hands is relative to how long it takes one child to wash his or her hands. In other words, the amount of time for a group of n children to finish washing their hands increases by roughly the same amount for each child that needs to complete the task.

A key point to keep in mind with Big O notation is that it describes complexity between cases that are relative to the same algorithm. It is an approximation so that someone can use a reference point, typically a single case of the algorithm, to estimate time and complexity as more cases are added. Just because two different algorithms can have the same Big O notation does not mean that each algorithm will have anywhere near the same running time.

For example, let's say a parent tells one child to shovel snow from a large drive. After the child is done, the parent tells the child to help the neighbors on each side of their house by shoveling each neighbor's drive. The parent can use the time it took the child to shovel the first drive, and then approximate that it will take the child twice as long to finish shoveling both neighbor's drives. In this example, just like the hand washing example, T(n) = O(n). As long as the drives are the same size, the child will take roughly the same amount of time to shovel each drive. If the child finished, came home, and then was told to wash his hands before dinner, can we assume the child will spend the same amount of time washing his hands as he spent shoveling snow from the drive? The answer is no. The two tasks are very different, and even though they have the same order of magnitude, the base case takes different time to complete. The increase in time as more inputs are added increases on a linear basis with both tasks, though, which is what Big O notation tries to communicate.

Algorithms with Linear Complexity

- Computer Science: Kadane's Algorithm - Finding the Largest Continuous Sum in a List

You are presented with a list of unordered numbers. What is the largest sum in this list you can find by only adding contiguous numbers, meaning no gaps in the series?

Linear Complexity: O(n)

Linear complexity means that the time it takes to complete a task increases by roughly the same amount for each input to the algorithm. As previously discussed, hand washing is an example of a task with linear complexity. As the number of children that need to wash their hands increases, the time it takes for the entire group to finish increases at a linear rate, or roughly the same amount of time for each child.

In computer science, O(n) time complexity generally describes an algorithm that needs to scan a list of data items once. For example, given a list of numbers, what number is the maximum in the list?

Give the list: { 1, 2, 5, 9, 4, 3, 2, 2 }

The answer is 9. In order to make a determination, you have to look at each number in the list once. A computer would have to do the same. The computer scans each element, and if the element is greater than the current maximum, the current maximum becomes the new maximum. Once all the elements are scanned, the computer then knows the current maximum in the list. As the list grows in the number of elements it contains, the time it takes to find the maximum increases by roughly the same amount of time for each element that is added to the list.

Constant Complexity: O(1)

Constant complexity means that the task takes roughly the same time regardless of how many inputs are provided.

Imagine a teacher is given a number of students who are lined up single file. The teacher can see all the students. The teacher's task is to simply get the name of the first student in line. No matter how long that line is, the teacher only needs to ask the first student in line for their name, and the amount of time it would take to complete the task remains the same. This is constant time complexity. Since the teacher can see each student, the teacher knows the position of each student in line without having to count. We can have the teacher find the name of any student in the line by only giving the teacher the position of the student from whom the name is needed. Finding the name of any student in any position will always take the same amount of time.

In computer science, we can extend this analogy to random access memory. An array is a data structure that holds a number of data elements. If we have access to an array of numbers, we can ask the array for the value of the number in any one of its positions. What is the third element in the array?

Given the array: { 1, 5, 4, 7, 10, 9 }

The answer is 4. Each number in the array is like each student in the single file line. The array is stored in memory as a contiguous entity, meaning that we can randomly access any position in this array in constant time, just the like the teacher can ask a student in line for a name because the teacher can see all the students and immediately know each position each student has.

Quadratic Complexity: O(n^2) or O(n * n)

Quadratic complexity means the time taken to complete a task is multiplied by the time taken to complete the task once for each input added to the algorithm. It is an exponential growth rate.

For example, a teacher assigns all students to write up a short peer review of the book reports from all the other students, including a self review of their own book report. The teacher then has to read each peer review and grade each student on their book report. The amount of peer reviews and self reviews the teacher must read in order to determine a grade grows exponentially as more children are added to the class. When one child is in the class, the teacher must read 1 review. When two children are in the class, the teacher must read four reviews, or two reviews from each of two students. When four children are in the class, the teacher must read sixteen reviews, or four reviews from each of four students. Because the number of reviews grows exponentially in relation to the number of students, the teacher must be aware of the size of the class and likely reserve this method of grading only for very small classes.

In computer science, algorithms with quadratic complexity typically result from nested loops where each loop is dependent on the number of inputs. For example, given an array of numbers, provide how many other numbers in the array are larger than each number.

Given the array: { 5, 7, 3, 4, 2, 6, 1, 9, 10 }

This can be solved with an algorithm with quadratic complexity by comparing each number to every other number in the array. Start with the first element, which is five, and then compare it with each element from the first to the last. This would include a comparison to itself, which is fine for this example. There are four numbers larger than five, and they are seven, six, nine, and ten. There are nine elements in the array, and that means we will end up making 81 total comparisons. If we added a tenth element, we would need to make 100 total comparisons.

Logarithmic Complexity: O(log(n))

Logarithmic complexity means the time taken to complete a task increases with each input added to the algorithm, but the increase will be less than the time taken when adding the previous element.

For example, imagine a phone book is given to someone that holds 100 names on 10 pages, with 10 names per page. That person is asked to find a specific name. The person will open the phone book to a random page, and determine if the name is on that page, a page before, or a page after. This is an easy comparison because the names are in alphabetical order, and the person can easily see only the first and last name on the page. As each comparison is made, the number of pages that need to be looked at grows smaller and smaller until the name is found. As more names and pages are added to the phone book, the time is takes to find a name increases, but the increase is less than linear because it is possible to eliminate more pages from the early comparisons very easily.

Let's say the person is tasked to find the name of "Betty Crockerm" which is on page two. The person opens the phone book to page five, looks at the first and last names, and decides Betty will be on a page before page five. Pages five through ten are eliminated from the search. Pages one through four are left. The person then opens to page two and looks at the first and last name and determines Betty is on a page after page two. Pages one and two are eliminated from the search and pages three and four are left. The person then opens to page three, determines Betty has to be on this page, and finds her name.

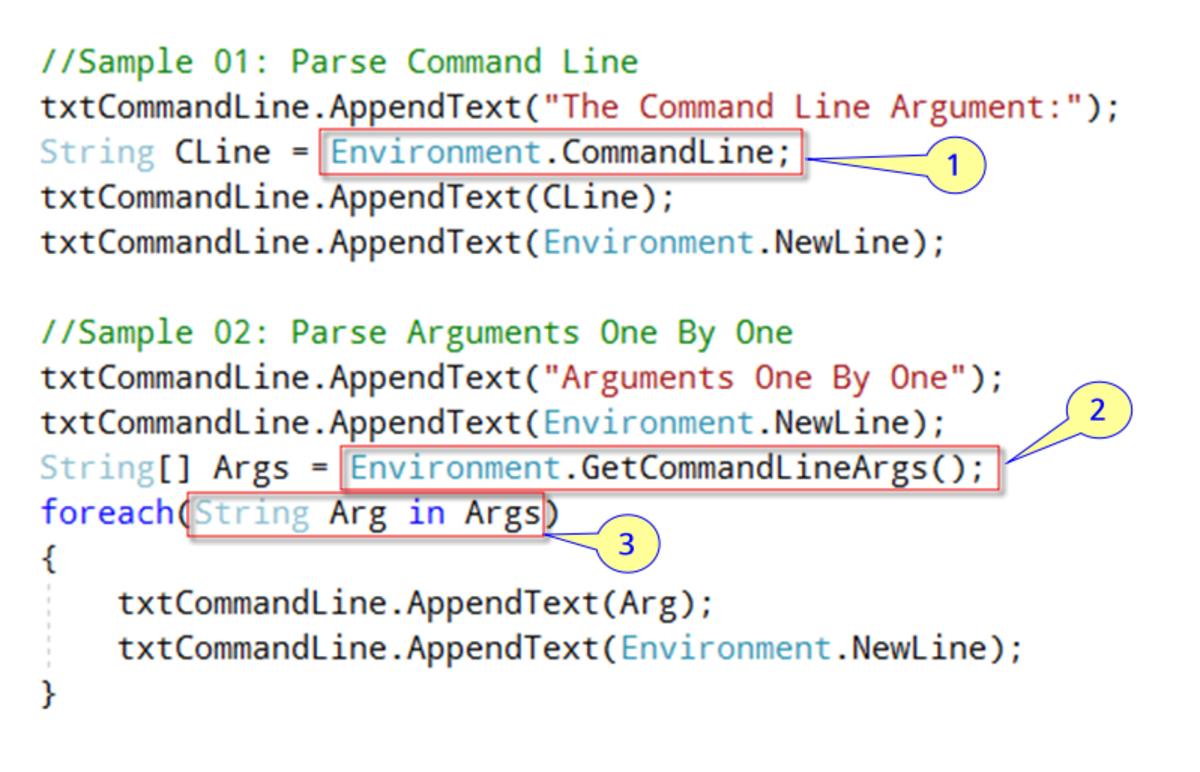

The example of looking through a phone book is what is known as a binary search. A binary search will take a collection of elements and do a comparison with the middle of the collection. The collection is required to be sorted on some metric. Roughly half of the elements in the search can be eliminated with each comparison of a binary search. If using a sorted array in a computer program, a search can be performed by giving a starting point for the search and and ending point, and then eliminating half of the array by changing the starting point or ending point based on a comparison with the middle element of the original starting point and ending point.

Linear Logarithmic Complexity: O(n log(n))

Linear logarithmic complexity is a combination of linear complexity and logarithmic complexity. It typically deals with a task that would normally be be logarithmic complexity, but an added twist has made sure that task has to be done in a linear number of times.

For example, searching for a person's name in an phone book is a logarithmic complexity task. The task is based on how many names are listed in the book. Instead of searching for a single name, though, we tell the person to search for a number of names. The task now depends on two variables. How many names are in the phone book, and how many names must be looked up? Searching for a single name is a logarithmic complexity task. Searching for a number of names is a linear logarithmic complexity task.

In computer science, a popular sorting algorithm is Quicksort. Quicksort takes an unordered list of data, and then starts dividing that list up into smaller and smaller portions, sorting the middle element for each one. On average, Quicksort runs with a linear logarithmic complexity.

Factorial Complexity: O(n!)

Factorial complexity will require large number of computations for small numbers of inputs. It is very inefficient and should be avoided at all costs.

Imagine a salesman has to travel to ten different cities. What is the best path for the salesman to take? The best path would be one that allows him to travel to all ten cities in the shortest distance traveled. One way to solve this problem would be to make a list of all the possible travel routes and compare the distance traveled for all routes. There are ten cities to choose to start in. After a starting city is chosen, the second city will have 9 options. The third city will have eight options. This will continue until the last city is chosen, which will have one option left.

Number of routes: 10 * 9 * 8 * 7 * 6 * 5 * 4 * 3 * 2 * 1 (or 10!) = 3,628,800 routes

With only ten cities, the number of routes is already unmanageable for a normal person, and grossly inefficient for a computer.

Exponential Complexity: O(2^n)

Exponential complexity means the time to complete an algorithm will double for each element added as an input. An algorithm with this complexity is to be avoided at all costs.

Using the example of the traveling salesman again, we can show exponential complexity. Instead of making a list of every route between 10 cities, we instead change the approach to use a heuristic. A heuristic is an educated guess about the value of the next move. The salesman decides that whatever city he starts in, he will rule out the obviously bad choices and make a smaller list. For each stage of the trip, depending on the starting city, he can pare down the choice for the next city to two cities on average.

Number of trips: 2 * 2 * 2 * 2 * 2 * 2 * 2 * 2 * 2 * 2 (or 2 ^ 10) = 1,024

The number of routes is probably still unmanageable for a human, and still grossly inefficient for a computer, but is far better than the previous approach to the problem.

Other Complexities

There are a number of advanced complexities seen with Big O notation that are related to the common types discussed above. This general overview will add more examples as they come up in the Computer Science hub series. Be sure to check back often for updates.