High Performance Computing in Data Centers

What is High Performance Computing?

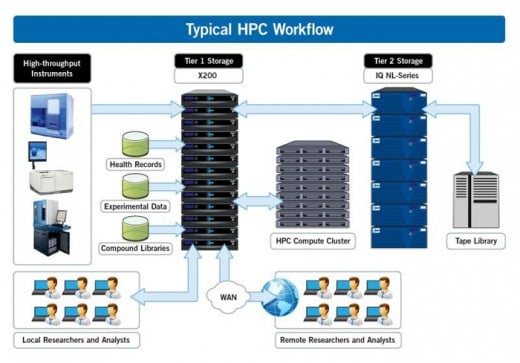

According to an article on InsideHPC.com, “High Performance Computing (HPC) most generally refers to the practice of aggregating computing power in a way that delivers much higher performance than one could get out of a typical desktop computer or workstation in order to solve large problems in science, engineering, or business.” In other words, HPC combines the power of many processors to solve problems too large or complex for a single machine. HPC has traditionally been the preserve of research institutes, engineers, government, the military, and technology giants, but its use is now spreading to other businesses as their own technology becomes more advanced. Data center providers see a new business opportunity here: with HPC, they can offer clients not just data storage but also data analysis that feeds into strategic business planning. According to an article on IT Pro Portal, the HPC market is likely to grow from USD 28.1 billion in 2015 to USD 36.6 billion in 2020.
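The two market figures above imply a compound annual growth rate (CAGR). The short sketch below computes it, assuming the standard CAGR formula and treating 2015 to 2020 as five compounding periods; it is simple arithmetic on the quoted forecast, not an independent market estimate.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate between two values `years` apart."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1.0 / years) - 1.0


if __name__ == "__main__":
    # Market size figures quoted in the text, in billions of US dollars.
    market_2015 = 28.1
    market_2020 = 36.6
    rate = cagr(market_2015, market_2020, years=2020 - 2015)
    print(f"Implied CAGR: {rate:.1%}")
```

With these figures the implied growth rate works out to roughly 5.4% per year.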

Latest Technology Used for HPC

On their own, traditional CPUs struggle to meet the demands of HPC workloads. This is where the Graphics Processing Unit, or GPU, comes into the picture. According to an article on searchvirtualdesktop.techtarget.com, “A GPU is a computer chip that performs rapid mathematical calculations, primarily for the purpose of rendering images.” A GPU has a massively parallel architecture made up of hundreds or thousands of smaller cores, compared with the four to eighteen cores of a typical CPU. GPU computing uses the GPU as a co-processor to accelerate applications running on the computer's CPU. Put another way, the CPU's few cores are geared toward sequential (serial) processing, while the GPU's many cores handle many tasks simultaneously through parallel processing. Nvidia has been a pioneer in building GPUs for computing; Velocity Micro, for example, uses Nvidia's Tesla GPUs to build top-of-the-line HPC workstations.
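The contrast between serial and parallel processing can be illustrated with an element-wise operation such as SAXPY (a·x + y), where every output element can be computed independently. The sketch below is a hypothetical illustration in plain Python, not GPU code: the serial version walks through the elements one at a time, while the data-parallel version splits the arrays into independent chunks, one per worker, in the way a GPU spreads work across its cores. Python threads stand in for cores only to show the decomposition; they will not deliver GPU-like speedups, and real GPU code would use a framework such as CUDA.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import List, Sequence


def saxpy_serial(a: float, x: Sequence[float], y: Sequence[float]) -> List[float]:
    """Serial processing: compute a*x[i] + y[i] one element at a time."""
    return [a * xi + yi for xi, yi in zip(x, y)]


def _saxpy_chunk(args):
    """The work one 'core' does: the same operation on its own slice of the data."""
    a, x_chunk, y_chunk = args
    return [a * xi + yi for xi, yi in zip(x_chunk, y_chunk)]


def saxpy_data_parallel(a: float, x: Sequence[float], y: Sequence[float],
                        workers: int = 4) -> List[float]:
    """Data-parallel decomposition: split the data into independent chunks and
    process them concurrently. Threads stand in for GPU cores; in CPython they
    show the structure of the computation, not a real speedup."""
    if len(x) != len(y):
        raise ValueError("x and y must have the same length")
    if workers < 1:
        raise ValueError("workers must be at least 1")
    n = len(x)
    if n == 0:
        return []
    step = -(-n // workers)  # ceiling division: elements per chunk
    chunks = [(a, x[i:i + step], y[i:i + step]) for i in range(0, n, step)]
    with ThreadPoolExecutor(max_workers=workers) as pool:
        parts = pool.map(_saxpy_chunk, chunks)  # results come back in chunk order
        return [value for part in parts for value in part]


if __name__ == "__main__":
    x = [float(i) for i in range(10)]
    y = [1.0] * 10
    # Both approaches give identical results; only the decomposition differs.
    print(saxpy_serial(2.0, x, y) == saxpy_data_parallel(2.0, x, y))
```

The key design point is that no chunk depends on any other, which is what lets the work be spread across many cores at once.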

Data Centers are Gearing up for the Challenge of Demand for HPC

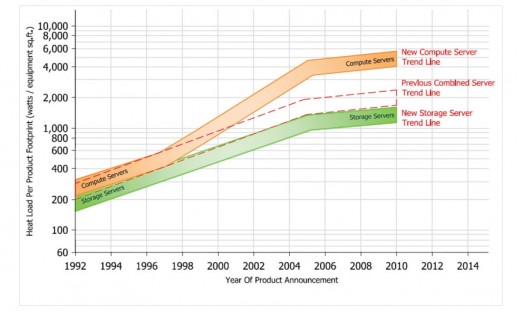

The growth in HPC requirements is being driven by consumer and enterprise technologies such as the Internet of Things, virtual reality, and big data. Data centers will need to invest in more space for HPC systems, yet space is at a premium, especially in big cities. Firms may decide to open a new site where they can put suitable infrastructure in place for HPC; this is a significant investment, so firms will need to understand both the current and the future demand dynamics of HPC.

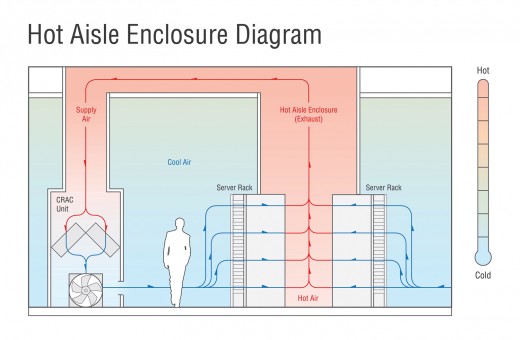

Data Centers will need Adequate Cooling of their Facilities

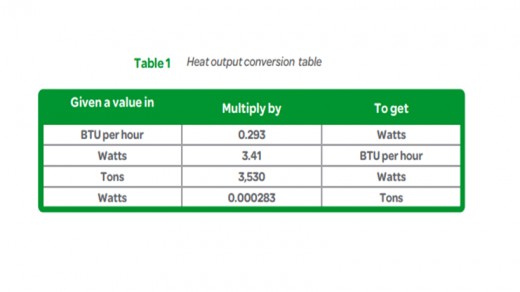

High performance computers generate significant amounts of heat, so heat management is crucial. A Schneider Electric white paper, Calculating Total Cooling Requirements for Data Centers, notes: “For larger data centers, the cooling requirements alone are typically not sufficient to select an air conditioner. Typically, the effects of other heat sources such as walls and roof, along with recirculation, are significant and must be examined for a particular installation.”
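The point of the quote is that the heat produced by the IT equipment is not the whole load. A minimal sketch, assuming the standard conversions (1 W is about 3.412 BTU/hr; 1 ton of refrigeration is 12,000 BTU/hr) and entirely hypothetical input figures, shows how adding other heat sources changes the cooling capacity to size for. It is not the white paper's method; the source categories and wattages are placeholders.

```python
from typing import Mapping

WATTS_TO_BTU_PER_HR = 3.412   # 1 W of heat is approximately 3.412 BTU/hr
BTU_PER_HR_PER_TON = 12_000   # 1 ton of refrigeration = 12,000 BTU/hr


def total_heat_load_w(it_load_w: float, other_sources_w: Mapping[str, float]) -> float:
    """IT equipment heat plus every other heat source in the room, in watts."""
    return it_load_w + sum(other_sources_w.values())


def cooling_tons(heat_w: float) -> float:
    """Convert a heat load in watts to tons of refrigeration."""
    return heat_w * WATTS_TO_BTU_PER_HR / BTU_PER_HR_PER_TON


if __name__ == "__main__":
    it_load_w = 200_000  # hypothetical IT load of an HPC room
    other_sources_w = {  # hypothetical non-IT heat sources
        "walls_and_roof": 15_000,
        "lighting": 5_000,
        "power_distribution_and_ups": 20_000,
    }
    print(f"IT load only: {cooling_tons(it_load_w):.1f} tons")
    total = total_heat_load_w(it_load_w, other_sources_w)
    print(f"All sources:  {cooling_tons(total):.1f} tons")
```

With these placeholder numbers, sizing for the IT load alone would undersize the cooling plant by roughly 11 tons.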

Typical Cooling Solutions

According to an article on Data Center Cooling Technologies, published in the Journal of the Uptime Institute, the ASHRAE Technical Committee TC9.9 guidelines recommend a device inlet temperature between 18°C and 27°C and relative humidity (RH) of 20% to 80% to meet manufacturers' criteria. Typical cooling methods tried by HPC providers include liquid cooling, larger fans, and conductive cooling. However, HPC equipment can produce heat faster than fans and similar cooling methods can dissipate it. Some data centers are therefore locating their sites in cold climates such as Iceland, where the outside air can cool the facility naturally.
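One simple way to apply the ranges above is to compare inlet sensor readings against them. The sketch below flags any reading outside the temperature and humidity ranges quoted in the text; the sensor names and readings are hypothetical, and a real deployment would pull readings from its own monitoring system and check them against the current edition of the ASHRAE guidelines.

```python
from dataclasses import dataclass
from typing import List

# Ranges as quoted in the text from the ASHRAE TC9.9 guidelines.
INLET_TEMP_C = (18.0, 27.0)
RELATIVE_HUMIDITY_PCT = (20.0, 80.0)


@dataclass
class InletReading:
    sensor_id: str  # hypothetical sensor name
    temp_c: float   # device inlet temperature, degrees Celsius
    rh_pct: float   # relative humidity, percent


def check_reading(reading: InletReading) -> List[str]:
    """Describe every value outside its range (empty list if all are in range)."""
    problems = []
    t_lo, t_hi = INLET_TEMP_C
    if not t_lo <= reading.temp_c <= t_hi:
        problems.append(
            f"{reading.sensor_id}: inlet {reading.temp_c} C outside {t_lo}-{t_hi} C")
    rh_lo, rh_hi = RELATIVE_HUMIDITY_PCT
    if not rh_lo <= reading.rh_pct <= rh_hi:
        problems.append(
            f"{reading.sensor_id}: RH {reading.rh_pct}% outside {rh_lo}-{rh_hi}%")
    return problems


if __name__ == "__main__":
    readings = [
        InletReading("rack-01", 22.5, 45.0),  # within both ranges
        InletReading("rack-07", 29.0, 15.0),  # too warm and too dry
    ]
    for r in readings:
        for problem in check_reading(r):
            print(problem)
```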

Data Centers Must Build Adequate Power Infrastructure

Data centers that want to install HPC systems need to account for the provision of high-density power and its impact on cooling requirements. They will also need backup power facilities; together, these requirements call for significant capital expenditure by the data center operator. Data center providers therefore need to understand their market and its likely future demand, so that they can plan judiciously and invest appropriately in the required infrastructure.
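To see how power density drives both electrical and cooling capacity, the sketch below compares a hypothetical conventional room with a hypothetical high-density HPC room of the same rack count. It assumes that essentially all power drawn by IT equipment ends up as heat, and it sizes backup UPS capacity with a simple N+1 redundancy rule; the rack count, per-rack densities, and 250 kW module rating are placeholders, not recommendations.

```python
import math


def it_load_kw(racks: int, kw_per_rack: float) -> float:
    """Total IT power draw for a room of identical racks."""
    return racks * kw_per_rack


def ups_modules_n_plus_1(load_kw: float, module_kw: float) -> int:
    """UPS modules needed: enough to carry the load (N) plus one spare (+1)."""
    if module_kw <= 0:
        raise ValueError("module_kw must be positive")
    return math.ceil(load_kw / module_kw) + 1


if __name__ == "__main__":
    module_kw = 250.0  # hypothetical UPS module rating
    for label, kw_per_rack in [("conventional", 8.0), ("high-density HPC", 30.0)]:
        load = it_load_kw(racks=40, kw_per_rack=kw_per_rack)
        # Nearly all power drawn by IT equipment ends up as heat,
        # so the cooling plant must remove roughly the same number of kW.
        print(f"{label}: {load:.0f} kW IT load, ~{load:.0f} kW of heat, "
              f"{ups_modules_n_plus_1(load, module_kw)} x {module_kw:.0f} kW UPS modules")
```

Under these assumptions, raising density from 8 kW to 30 kW per rack takes the same 40 racks from 320 kW to 1,200 kW, doubling the UPS module count from 3 to 6 and nearly quadrupling the heat to be removed.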