What Makes the Convey Supercomputers Unique?

The Convey represents a new generation of supercomputers because it can process and store data in a way that is smaller, less expensive, easier to use, and faster than its predecessors. Another distinguishing feature is that different “personalities” can be programmed onto its hardware, while a base instruction set remains built into the system. Further, the development of the Convey has brought an 84% reduction in power and cooling and an 83% reduction in the floor space used.

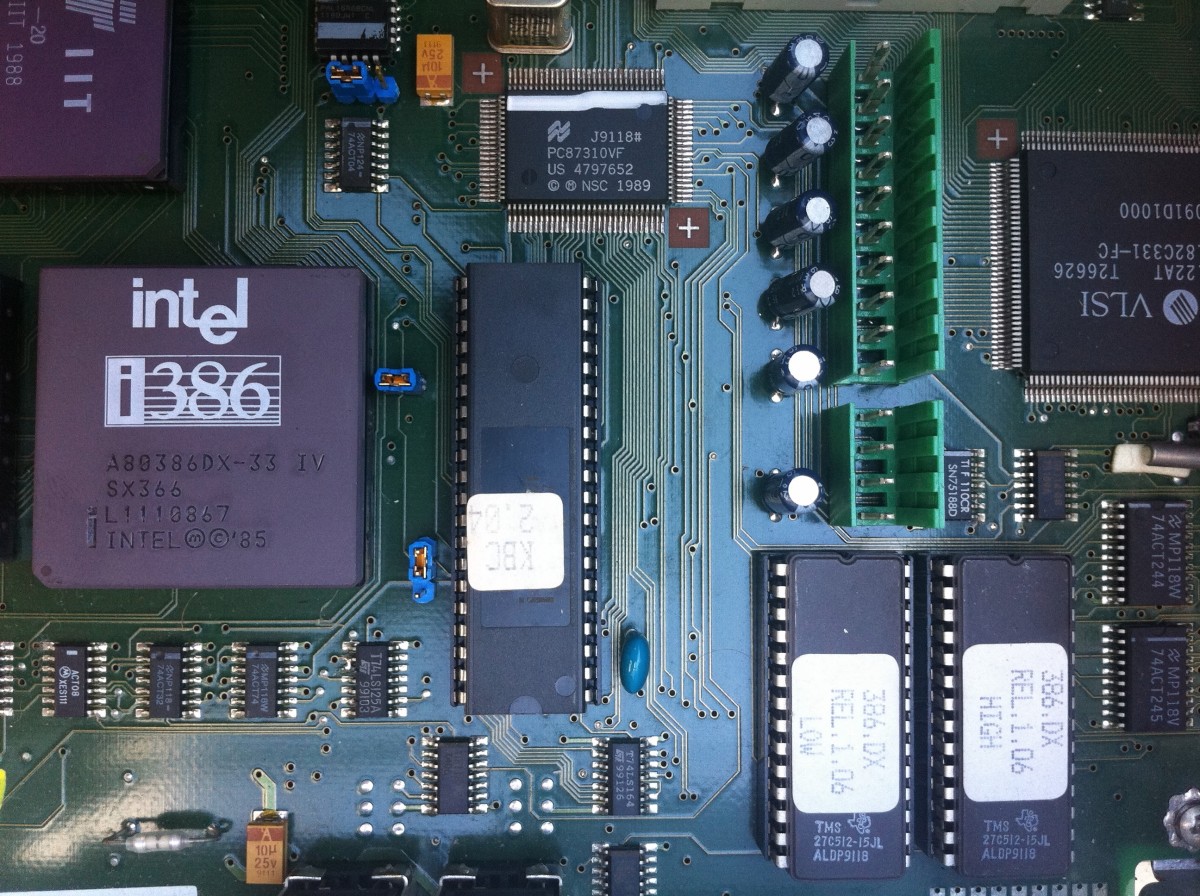

The term “personalities” on the Convey computer refers to the sets of instructions that enable the computer to carry out its functions. These instruction sets, or personalities, are programmed onto the Convey hardware. The Convey supercomputer was designed mainly for engineering and scientific applications and is based on Intel microprocessors. It behaves like a shapeshifter, reconfiguring its hardware with different “personalities” to tackle computing problems in various industries. Initially, the Convey computer was aimed at bioinformatics, oil and gas, computer-aided design, and financial services. Another essential quality of the Convey computer is that it is a green computer, which may help ease concerns that the IT industry is becoming a major culprit in global warming.

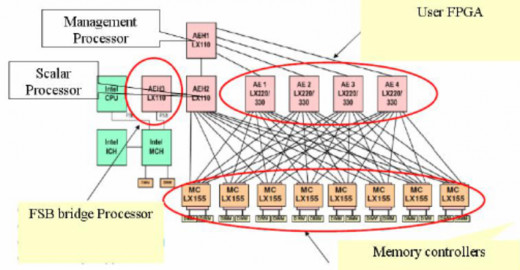

The Convey computer arrives at a time when data generation in the life sciences and engineering is increasing significantly, which calls for comparable advances in computation to analyze that data. The Convey speeds up analysis with hybrid-core technology, a highly advanced computing architecture that combines a conventional microprocessor environment with reconfigurable coprocessors. According to Markoff (2008), the Convey system is expected to reduce application run times and to address problems that may be out of reach for current computer systems. Compared with other computers and supercomputers, the Convey offers higher performance while requiring less cooling and space. It should speed up work, enable more and better science, and lower the costs of operation and ownership. The Convey is also among the lower-cost supercomputers on the market today.

In developing the Convey computer, the designers were concerned with big data and high-performance computing challenges, as well as the high cost of energy, space constraints, and environmental problems. They believe the Convey addresses these issues: it can be many times more energy-efficient, dramatically reducing floor space and cooling requirements. In addition, the Convey runs applications much faster and is more cost-efficient than other supercomputers.

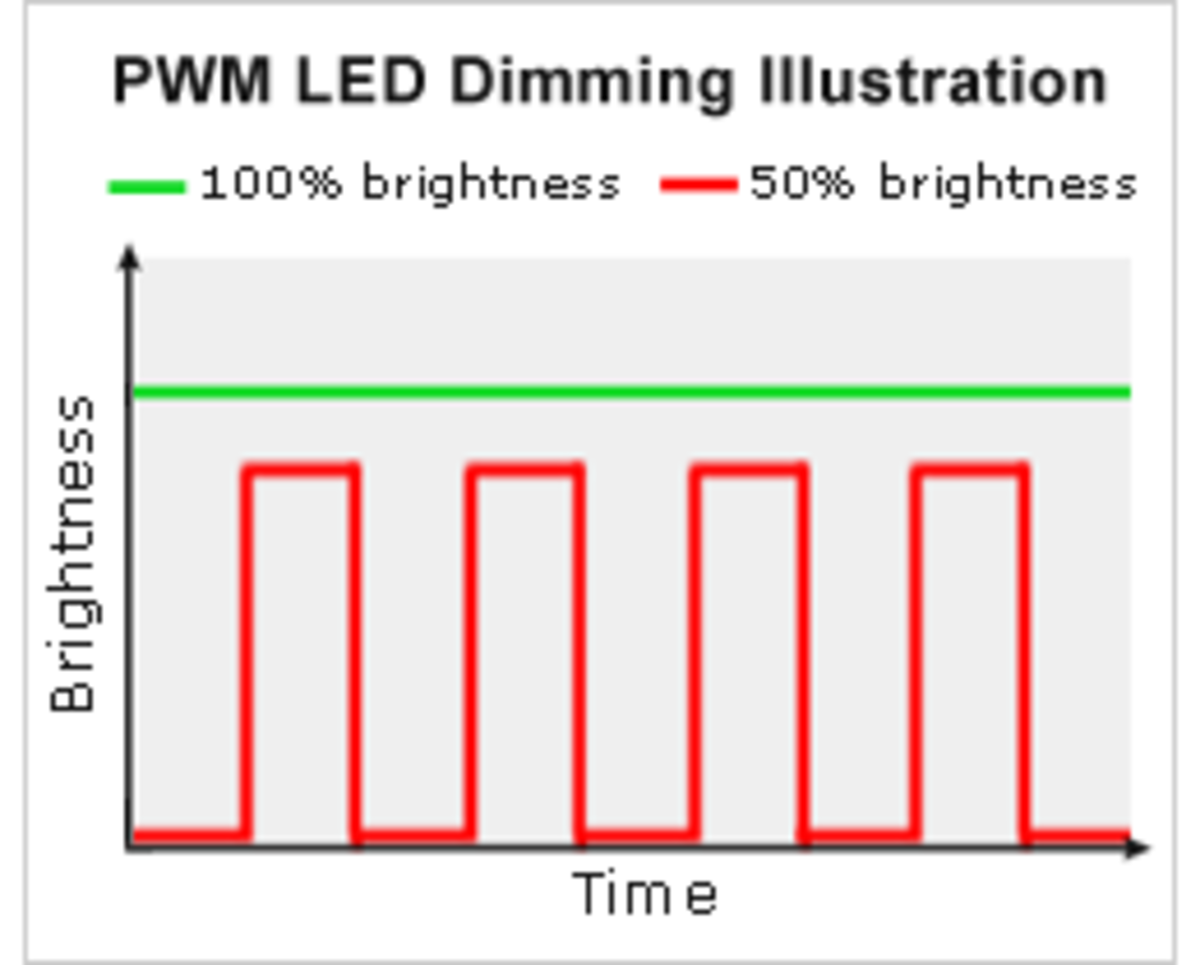

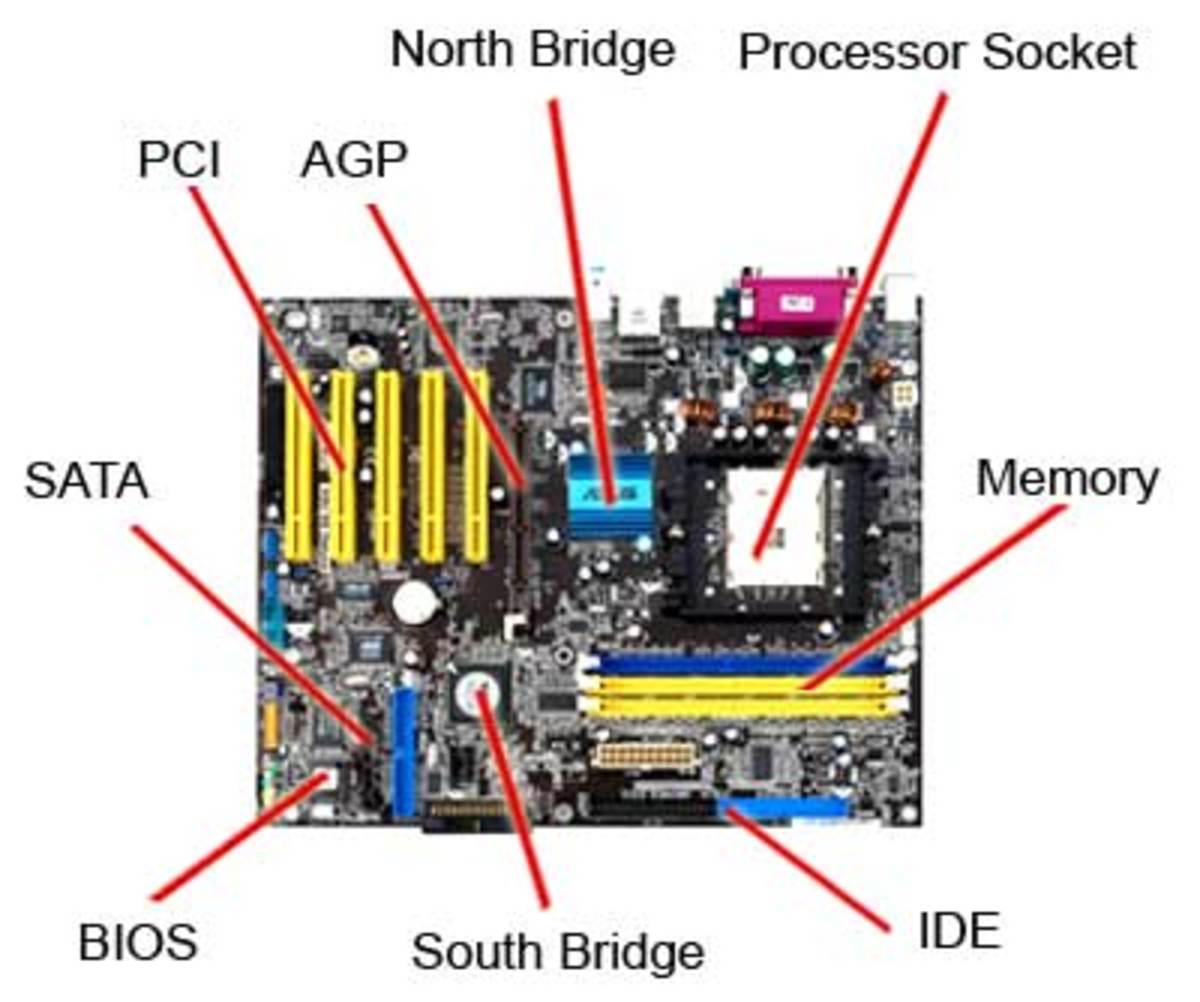

Supercomputers are assembled from large numbers of microprocessors. They consume far more electricity than ordinary computers, and they can be difficult to program. Many of the newer supercomputers try to solve different categories of problems by connecting various types of processors together in Lego style. Ding and Fensel (2011) explain that large numbers of processors are usually organized in one of two ways. In the first approach, distributed computing, many discrete computers, such as laptops spread across a network like the internet, devote some or most of their time to solving a common problem. Each computer, or client, receives and completes many small tasks and reports the results to a central server, which integrates the results from all its clients to solve the problem. At the other end of the spectrum, many dedicated computers are placed close to one another, as in a computer cluster. This saves a considerable amount of time otherwise spent moving data and information around, and makes it easier for the processors to work together rather than on separate tasks. Hypercube and mesh architectures are examples of this approach.
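The distributed approach described above follows a split, compute, and integrate pattern. The minimal Python sketch below illustrates that pattern under clearly stated assumptions: threads inside a single process stand in for networked clients, the workload (summing squares over a range of integers) is an arbitrary placeholder, and the function names `split_into_tasks`, `client_work`, and `solve_distributed` are hypothetical, not taken from any real distributed-computing system.

```python
from concurrent.futures import ThreadPoolExecutor, as_completed


def split_into_tasks(n, chunk_size):
    """Central server (hypothetical): divide [0, n) into small, independent tasks."""
    return [(start, min(start + chunk_size, n)) for start in range(0, n, chunk_size)]


def client_work(task):
    """Client (hypothetical): complete one small task, here a sum of squares."""
    start, end = task
    return sum(i * i for i in range(start, end))


def solve_distributed(n, chunk_size=1000, num_clients=4):
    """Hand tasks to clients, collect their reports, and integrate the results."""
    tasks = split_into_tasks(n, chunk_size)
    total = 0
    # Threads stand in for separate networked machines in this sketch.
    with ThreadPoolExecutor(max_workers=num_clients) as clients:
        futures = [clients.submit(client_work, t) for t in tasks]
        for future in as_completed(futures):
            total += future.result()  # integrate each client's partial result
    return total


if __name__ == "__main__":
    n = 10_000
    # Compare against the closed form: sum of i^2 for i < n = (n-1)n(2n-1)/6
    print(solve_distributed(n) == (n - 1) * n * (2 * n - 1) // 6)  # True
```

The sketch shows only the division of labour, not a speedup: Python threads share one interpreter, so CPU-bound work like this gains little from them. A real distributed system would send tasks and results over a network, while a cluster, as noted above, keeps dedicated machines physically close to cut the cost of moving data between them.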