Using HDFS, MapReduce, Python, Pyspark and Eclipse together with a hint of IPython

The work done here was carried out on a dual-core MacBook Pro on which I had installed a single-node Hadoop cluster running YARN and HDFS.

Hadoop is traditionally complex and hard to program. After some ten years of development, simpler tools that build on the MapReduce model are coming to the fore. One such tool is Apache Spark, a fast and general engine for large-scale data processing, which claims to run programs up to 100 times faster than Hadoop MapReduce when processing in memory, and 10 times faster on disk.

Spark lets developers write applications in Java, Scala or Python and includes interactive shells for Scala and Python. It powers a number of high-level tools (out of scope here), can run on the YARN cluster manager of Hadoop 2 and can read data from an HDFS file system.

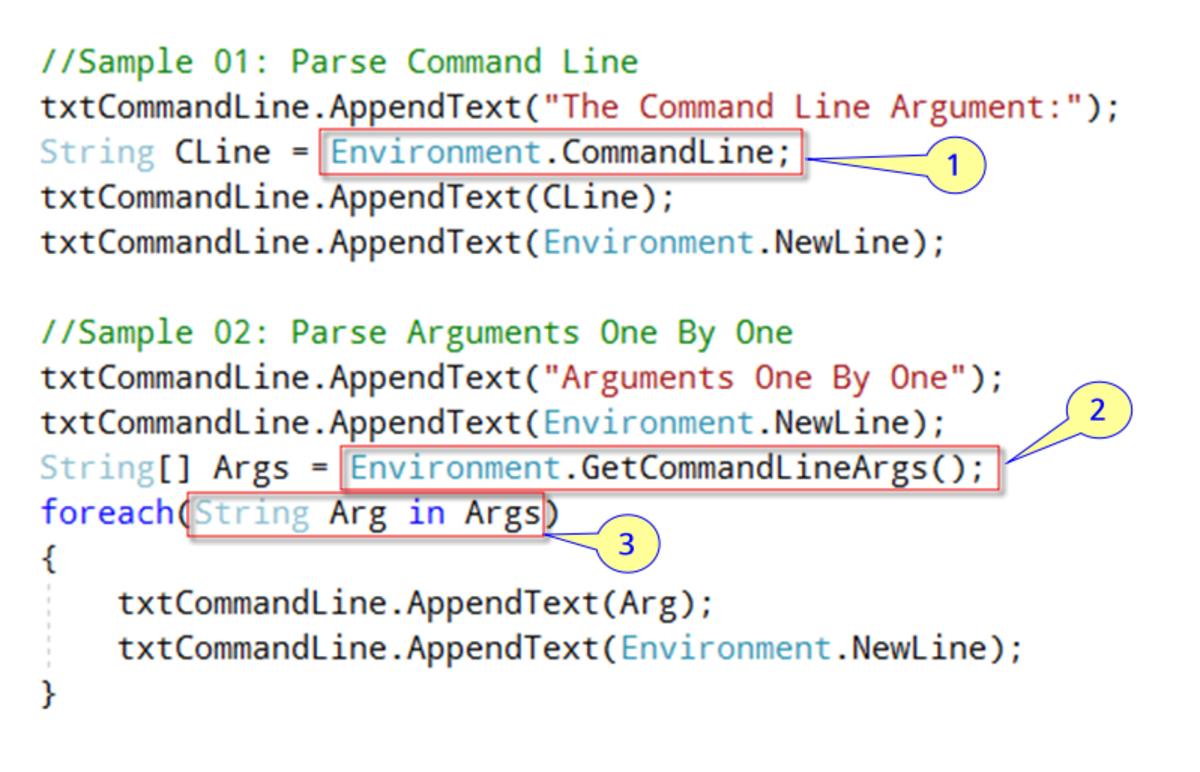

The Eclipse Pydev plugin allows users to develop Python applications in Eclipse, though it can seem temperamental to beginners and is less polished than Eclipse for Java. Pydev is installed by default in Aptana Studio, an Eclipse-based IDE for web development.

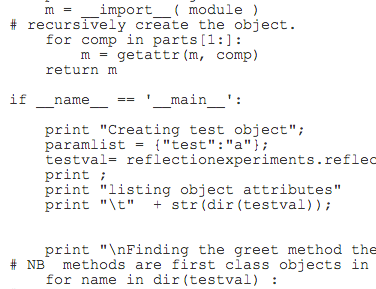

IPython (Interactive Python) is an enhanced interactive Python shell that makes interactive computing easier. One important feature of IPython is the notebook, which allows snippets of code to be executed interactively from a web browser. It also allows UNIX commands to be run from a bash shell inside the notebook. IPython can be difficult to install, and the easiest way to install it is with the free Anaconda distribution.

The goal was to set up an environment for writing Pyspark code in Eclipse. Pyspark requires Python code to be submitted to a shell script that runs it, so running directly from Eclipse would not be straightforward, and it was unclear whether all available processors would be used when running from within Eclipse [5]. It was therefore decided to run from the command line or from an IPython notebook. The possibility of running IPython from Eclipse was deferred.

Pyspark

Pyspark, the Python library for Spark programming, was simple to install but less simple to run. A special script, spark-submit, is needed to run Python code from the command line. To make this more flexible, a shell script was written to hide these details.

After unpacking the Spark download into a directory $SPARK and defining

PYSPARK_HOME=$SPARK/spark-1.0.2-bin-hadoop2/bin

running a pyspark program reduces to

$PYSPARK_HOME/spark-submit --master local[<n>] "$@"

where n is the number of worker threads to use for the job (normally the number of cores on your machine if running locally) and the first argument is the program to run.

You can add 1>pyspark.log 2>pyspark.err to the run command to keep the window as clean as possible.
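The same wrapper can be sketched in Python, which is convenient when the job is launched from a notebook rather than a terminal. This is only an illustration of the spark-submit invocation above: the function names `build_submit_command` and `run_job` are hypothetical, and the log file names simply follow the redirection suggested in the text.

```python
import os
import subprocess


def build_submit_command(pyspark_home, script, cores, script_args=()):
    """Return the argument list for a local spark-submit run.

    pyspark_home is the bin directory of the Spark download,
    cores is the n in --master local[n].
    """
    master = "local[%d]" % cores
    submit = os.path.join(pyspark_home, "spark-submit")
    return [submit, "--master", master, script] + list(script_args)


def run_job(pyspark_home, script, cores, script_args=(),
            log="pyspark.log", err="pyspark.err"):
    """Run the job, sending stdout and stderr to files to keep the window clean."""
    cmd = build_submit_command(pyspark_home, script, cores, script_args)
    with open(log, "w") as out, open(err, "w") as errors:
        # subprocess.call returns the exit status of spark-submit
        return subprocess.call(cmd, stdout=out, stderr=errors)
```

Calling `run_job(os.environ["PYSPARK_HOME"], "wordcount.py", 2, ["input.txt"])` would then behave like the shell script, assuming PYSPARK_HOME is set as described above and that a script called wordcount.py exists.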

Pyspark allows files to be read from or written to either a Hadoop filesystem or the local filesystem. Accessing a Hadoop filesystem requires an HDFS URL of the form

hdfs://localhost:9000/user/filename.txt

The port depends on how your filesystem is set up (it is part of the fs.defaultFS setting in core-site.xml); it is often 9000.
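As a rough sketch of how such a URL is used from Pyspark, the helper below builds the URL and passes it to an existing SparkContext. The function names `hdfs_url`, `count_lines` and `copy_text` are hypothetical, and the default host and port are simply the values from the example URL, so adjust them to your own setup.

```python
def hdfs_url(path, host="localhost", port=9000):
    """Build an HDFS URL such as hdfs://localhost:9000/user/filename.txt."""
    return "hdfs://%s:%d/%s" % (host, port, path.lstrip("/"))


def count_lines(sc, path):
    """Count the lines of a text file in HDFS.

    sc is assumed to be an already created pyspark.SparkContext.
    """
    return sc.textFile(hdfs_url(path)).count()


def copy_text(sc, source_path, target_path):
    """Read a text file from HDFS and write it back to another HDFS location."""
    lines = sc.textFile(hdfs_url(source_path))
    # saveAsTextFile writes a directory of part files, not a single file
    lines.saveAsTextFile(hdfs_url(target_path))
```

A plain local path (or a file:// URL) can be passed to `sc.textFile` in the same way when the data is on the local filesystem.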

Eclipse and Pyspark

Since Pyspark code must be submitted via a shell script, running and debugging inside Eclipse may be possible, but the reward may not justify the effort.

It is possible to get some benefits from Eclipse by using Pydev and adding the python directory of your Spark installation (the python directory that sits alongside the bin directory, $SPARK/spark-1.0.2-bin-hadoop2/python) to your PYTHONPATH. This enables code completion and the familiar Eclipse red crosses and yellow warning triangles.
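The same path can also be added at runtime, for example at the top of a notebook, so that `import pyspark` resolves outside Eclipse. The sketch below is an assumption-laden illustration: the function names are hypothetical, and the py4j archive under python/lib is where Spark distributions usually keep that dependency, so it is picked up only if it is found.

```python
import glob
import os
import sys


def pyspark_paths(spark_root):
    """Return the directories and archives Python needs to import pyspark.

    spark_root is the unpacked Spark directory (the parent of bin and python).
    """
    paths = [os.path.join(spark_root, "python")]
    # Assumption: the bundled py4j source archive lives in python/lib
    pattern = os.path.join(spark_root, "python", "lib", "py4j-*-src.zip")
    paths.extend(sorted(glob.glob(pattern)))
    return paths


def add_pyspark_to_path(spark_root):
    """Put the Pyspark locations on sys.path if they are not already there."""
    for path in pyspark_paths(spark_root):
        if path not in sys.path:
            sys.path.insert(0, path)
```

This only makes the library importable; jobs still need to be launched through spark-submit as described earlier.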

Opening a command shell in the folder where the code is stored lets it be run outside Eclipse.

To get started, import one of the examples from the python library into Eclipse, add a couple of comments or start playing around with it, then run the Python file from the command line using the shell script above.
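For orientation, a program of the kind that can be edited in Eclipse and then submitted from the command line might look like the word count below. It is a minimal sketch and not one of the bundled examples: the names `split_words`, `word_counts` and `main` and the application name are hypothetical, and `pyspark` is imported inside `main` so the helper functions can be tried out without Spark.

```python
from operator import add


def split_words(line):
    """Lower-case a line and split it on whitespace."""
    return line.lower().split()


def word_counts(lines):
    """Map each word to (word, 1) and sum the ones per word.

    lines is assumed to be an RDD of text lines.
    """
    return (lines.flatMap(split_words)
                 .map(lambda word: (word, 1))
                 .reduceByKey(add))


def main(argv):
    """Entry point: argv[1] is the input file (local path or HDFS URL)."""
    from pyspark import SparkContext  # needs the Pyspark library on the path

    sc = SparkContext(appName="WordCountSketch")
    for word, count in word_counts(sc.textFile(argv[1])).collect():
        print("%s: %d" % (word, count))
    sc.stop()
```

To submit it, add `import sys` and a final line `main(sys.argv)` to the file, then pass the file name and the input path to the shell script.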

The downside of running like this is becoming overdependent on the history command or writing loads of scripts, many of which will only be needed for a short while. But there is a way round this.

IPython

Anyone who has tried to develop a GUI in Python knows that the language was not designed for this. IPython tries to get round this by providing an interactive kernel and shell for interactive computing. It supports visualisation, GUI toolkits and tools for parallel computing. As the name implies, it is focussed on the Python language, so it is a potentially useful adjunct to Pyspark. As with any other technology it takes time to master, but it seems it will be worth the effort.

IPython has interesting features for parallel computing and data visualisation, but the one of interest here is the IPython notebook, an interactive browser-based notebook that allows you to store and run useful snippets of code. Notebooks have been used for serious publications, including academic ones, though the format makes them harder to read than conventional publications, at least without practice.

Using IPython, with or without a notebook, could allow rapid data analysis with Pyspark and insight harvesting with IPython. This part of my project is still in its infancy.

The Anaconda distribution was used to obtain IPython, since this avoided the problems of installing it in raw form. Anaconda provides a launcher which offers the user a choice of an IDE, a console or the IPython notebook. Once Anaconda is installed (a very simple process on OS X), just cd to the directory where you want IPython to look for your notebooks (ideally a fresh empty directory) and type

ipython notebook

Allow 60 seconds or so for the server to start. The server will show a list of files in the directory, including any notebooks, which will all have a .ipynb suffix.

The notebook is divided into cells. There are two types, code cells and Markdown cells. Markdown cells are for text and code cells are to run code, show pictures and rich media and other fun interactive things.

The Wrap

Pyspark makes Hadoop/MapReduce programming easier. Eclipse with Pydev makes Python programming easier. IPython makes running Pyspark a little easier and allows snippets of code and bash scripts to be preserved and run from notebooks, where the actions can be documented. While this process is a little cumbersome, it beats the alternatives.

References

- Apache Spark https://spark.apache.org/

- Pydev http://pydev.org/

- IPython http://ipython.org/

- My understanding is that Hadoop code run from Eclipse runs in single processor mode.

- http://www.randalolson.com/2012/05/12/a-short-demo-on-how-to-use-ipython-notebook-as-a-research-notebook/ Includes a useful video demo that serves as a tutorial.