What Rights Would You Give A Machine?

What happens when it starts thinking for itself?


How will we treat them?

If futurists are to be believed, we are nearing a momentous event: the creation of an artificial intelligence that is truly our equal. Science fiction has, naturally, speculated about this for decades. Many of the earliest stories featured our electronic creations turning against us. Others featured them serving us, like the creations of the Sorcerer's Apprentice, a bit too efficiently. More recently, films like Her have depicted them as another sort of person. The question becomes: how shall we treat these creations? Given our reliance on technology, a better question might be: how will they treat us?

We used to think they would turn on us

Sometimes rebellion is less dangerous than obedience

Is an A.I. just another kind of person?

Artificial womb

How do you treat a new mind?

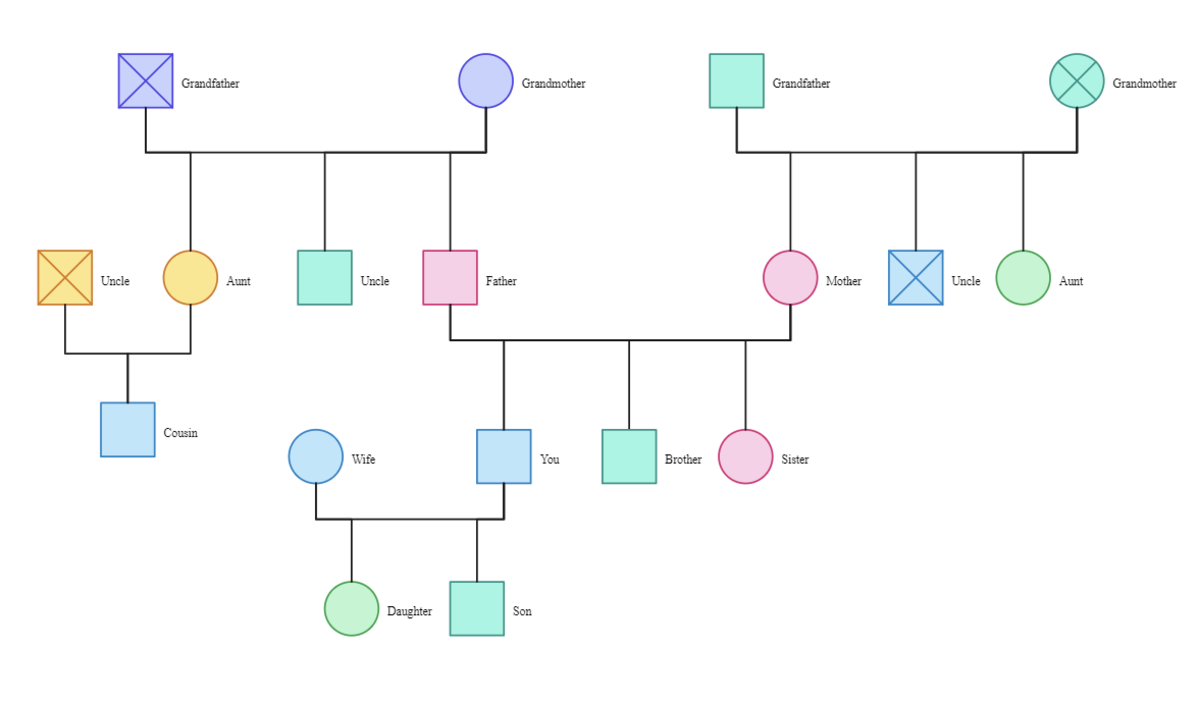

Imagine a new intelligence, entirely dependent on its creators for its needs. It is ignorant at first, knowing only what it has been given, but it will learn and develop quickly. Does this describe a newly activated A.I. or a newborn child? Morally and ethically, is there a difference? In fiction, a machine mind is usually depicted as property. Why? Because humans created it? People create children through biological processes, yet rather than owning a child, a parent is held responsible for its care. Why is an A.I. different? Is it because the creation of a human is a natural process? How, then, will we regard children born from an artificial uterus once that technology exists? In Star Wars, the clone troopers certainly seemed to be regarded as little different from purely mechanical servants. If an intelligent being is owned, whether it is mechanical or organic, isn't that a form of slavery?

Digital immortality

Upload your mind and live forever?

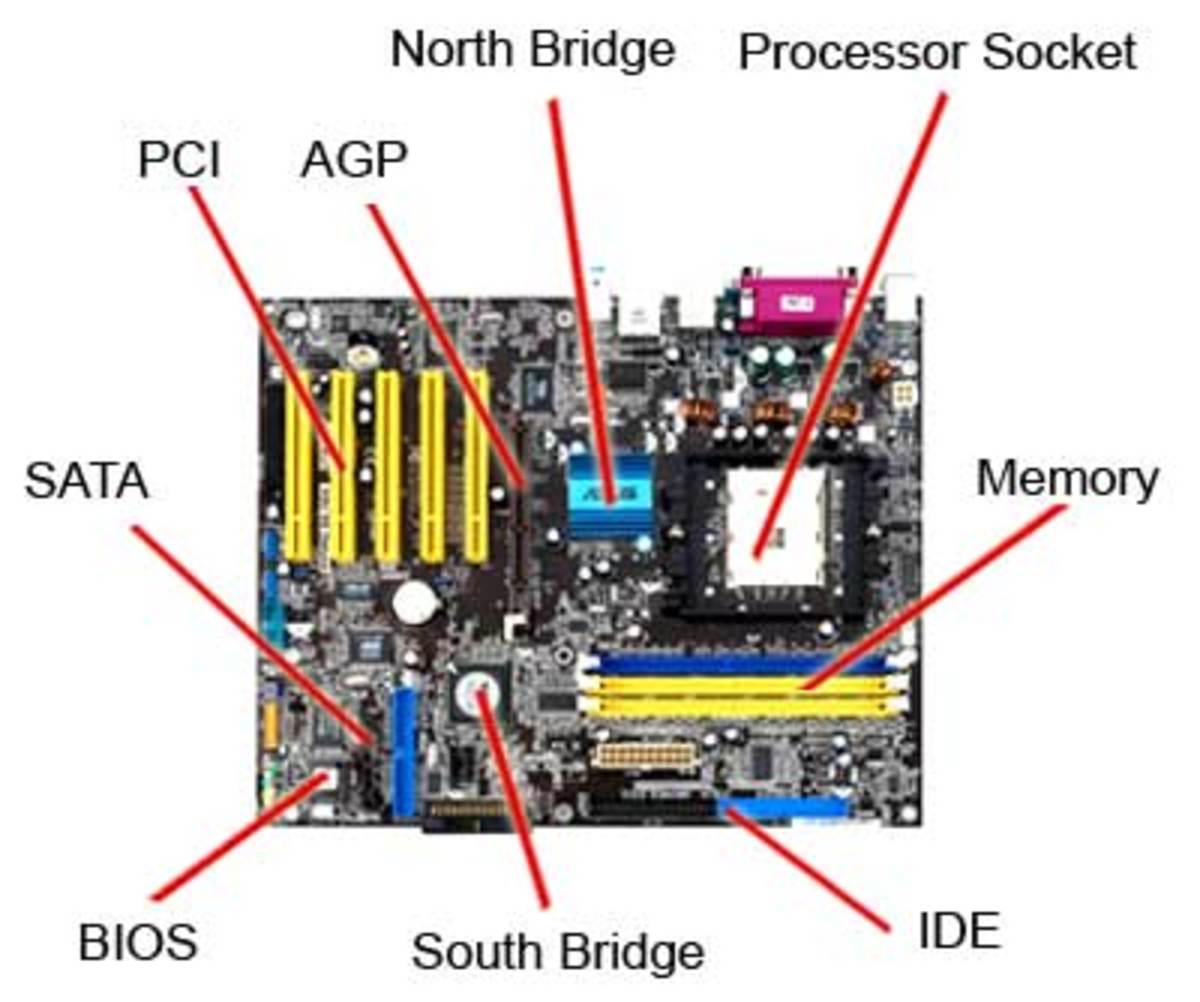

Hardware vs software

An artificial intelligence may present a degree of ambiguity not present in a living being. An electronic mind might not depend on a single set of hardware to exist. Some visions of such technology do suppose that it would be tied to specialized hardware, but many others envision software minds that could migrate freely from computer to computer. Such a mind might even copy itself to multiple machines. Some even envision uploading a human mind as software, providing a form of digital immortality. If this is possible, could an A.I. be a free being and yet depend for its survival on hardware owned by someone else? Unless the artificial mind is allowed to own property (at the very least its own hardware and somewhere to keep it), there is a problem. Its hardware requirements could provide a back-door means of enslaving it, much as the company store and the company town once bound factory workers.

Minds with multiple bodies?

The idea of uploading a human mind raises other questions about A.I. What happens if an electronic mind (from any source) is copied? Is the copy a new person or an extension of the same being? In the T.V. show “Andromeda,” the titular warship had an android running a copy of its mind. The two synchronized their experiences regularly and were, for the most part, treated as one entity. How much time must pass between synchronizations before a copy of a mind develops into a separate entity? Should a copy that has decided it wants to separate be compelled to synchronize? What is required for a copy to be treated as a new person? If an electronic mind spread across multiple copies owns property and one copy is allowed to become independent, how should the property be divided? What if one of the nodes of a distributed mind is a living human kept in sync via cybernetics? Can property that belonged to a human before he or she was uploaded become the property of a newly independent electronic node? Will our legal code have to adapt to permit one portion of a distributed mind to divorce itself from the rest? Keeping the nodes synchronized would not necessarily make things simpler, either. If the organic portion of a dual entity makes a legal commitment, then dies before synchronizing, is the electronic component liable for the agreement? What if the organic portion had married?

Who owns the rights to a mind?

Going retro to avoid A.I. takeover

Copyrighted minds

The idea of an electronic mind copying itself or running through distributed computing raises another issue. Software is normally subject to copyright law. Who will own an A.I.'s copyright? Must an electronic mind own its own copyright to be a free person, or can another party own the right to duplicate the mind? Is it ethically acceptable for another party to own what is, essentially, a being's ability to reproduce? On the other hand, if an A.I. does have the right and ability to copy itself at will, what is to prevent it from doing so endlessly, until it has filled all the available computer memory in the world and every processor is occupied running its thoughts? An electronic entity so inclined could become worse than any computer virus ever devised. Humanity in that situation might be forced to use less sophisticated machines that are incapable of running A.I. software. Another alternative would be to stop using computer networks altogether. The last option would be to delete the unwanted copies of the viral mind. Is deleting such a mind morally any different from an execution? If the mind is operating as a distributed system, deleting a single copy will not “kill” the whole thing. This returns us to the question of when a copy stops being part of a distributed mind and becomes a separate entity. Even if we regard every copy of an intelligence as simply part of a whole, how is deleting part of a mind not a form of mutilation? Unfortunately, if an intelligence distributes itself virally, the rightful owners of the hardware might be left with no alternative if they want to reclaim their property.


Superminds

Perhaps more worrisome than all the other ethical issues raised by electronic minds is this: they have the potential to exceed their organic creators in intelligence. Even assuming no human or A.I. ever figures out how to make them smarter in the I.Q.-test sense, the rate at which computing power increases (and is projected to keep increasing) will allow an electronic mind, even an uploaded human mind, to think much faster than any organic mind. Even without an increase in computing power, an A.I. might be able to think faster using distributed computing. An intelligence with access to ten equally powerful computers could split a problem into ten parts and set a copy of itself working on each. If the parts can be worked on independently, this would let it solve the problem up to ten times faster with no need for faster hardware. How will purely organic minds relate to beings that can think hundreds or even thousands of times faster than we can? What will these superminds do to our world if they pursue scientific and engineering advances at that kind of pace? The only limit on the advances such minds might produce would be the rate at which they could verify their theories through real-world experimentation. Futurists have dubbed this runaway acceleration the singularity. What will humanity's status be, sharing a world with such entities? Will we be the support staff and lab techs for these intellects, or will those tasks be performed more efficiently by robots? What status can humans who don't upload their minds have but that of a cargo cult? Is our future to await handouts from electronic benefactors that are the equivalent of centuries ahead of us, with the gap growing wider every minute?


The human race is screwed

How will they treat us?

If predictions about the potential advances in artificial intelligence are to be believed, humanity's ultimate fate may well depend on how we treat the first A.I.s. If we treat them poorly, they can be expected to reciprocate. Of course, if some variation of Asimov's famous Three Laws of Robotics is put in place, that might protect us, assuming our creations can't find a way to work around such limitations. Even if these electronic beings are not inclined to harm us directly, they may do so indirectly. What will the psychological impact on the human race be if we are displaced as the most intelligent beings on Earth? What will be the effect of having all discovery come from minds that work hundreds of times faster than ours? Will the organic minds of the human population atrophy from disuse? Even if we treat our creations well and they reciprocate, how much more advanced than us will they need to be before humans are reduced to pets?
