Glossary of Terms used in Digital TV Technology

Bandwidth

Bandwidth is the amount of information that can be transmitted in a given time. Displaying images with sharp detail requires high bandwidth, so it is a quality factor for transmitted or recorded images. ITU-R 601 and SMPTE RP 125 allow an analog signal bandwidth of 5.5 MHz for luminance and 2.75 MHz for chrominance, the highest quality achievable in a standard broadcast format.

Digital imaging systems tend to require large bandwidths, which is why many storage and transmission systems use compression techniques to reduce the bandwidth the signal needs.

A/D or ADC

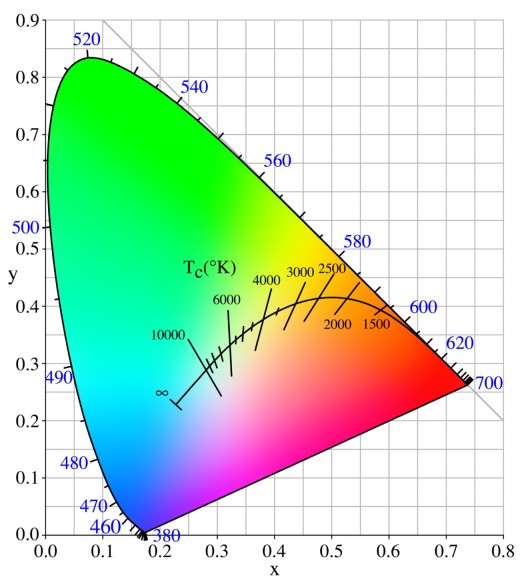

Analog to Digital conversion, also called digitization or quantization. It is the conversion of analog signals to digital ones, typically for later use in digital equipment. In television, where both audio and video signals are converted, the accuracy of the process depends on the sampling frequency and on the resolution used to quantize the analog signal, i.e., how many bits are used to define the analog levels. TV images commonly use 8 or 10 bits; for sound, 16 or 20 bits is normal. Recommendation ITU-R 601 defines the sampling of video components based on 13.5 MHz, and AES/EBU specifies sampling at 44.1 and 48 kHz for audio.

For images, the samples are called pixels, each carrying brightness and color information.
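As a rough illustration of how the number of bits sets the resolution of the conversion, the sketch below maps an analog level to an integer code. The function names and the normalized 0.0 to 1.0 input range are assumptions made for the example; a real converter, and the ITU-R 601 level assignments, are more involved.

```python
def quantize(level, bits, v_min=0.0, v_max=1.0):
    """Map an analog level to an integer code using `bits` bits.

    Hypothetical helper: assumes a linear converter whose input range
    is v_min..v_max and whose codes run from 0 to 2**bits - 1.
    """
    # Clamp the input to the converter's range.
    clamped = min(max(level, v_min), v_max)
    # Scale to the available codes and round to the nearest one.
    return round((clamped - v_min) / (v_max - v_min) * (2 ** bits - 1))


def step_size(bits, v_min=0.0, v_max=1.0):
    """Analog distance between two adjacent codes (one LSB)."""
    return (v_max - v_min) / (2 ** bits - 1)
```

Going from 8 to 10 bits raises the top code from 255 to 1023, so each step covers roughly a quarter of the analog range it did before.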

Concatenation

Chaining (of systems). The effect on quality of passing a signal through multiple systems has always been a concern, but the effect of chaining compressed digital video systems is not yet well understood. The matter is complicated because virtually all digital compression systems differ from one another in some respect, so concatenation calls for care. In broadcast, the existing analog compression systems, PAL and NTSC, increasingly operate alongside the MPEG digital compression systems used for transmission. The only way to be reasonably sure of getting the best results is to avoid compressing the signal in post-production and to maintain full ITU-R 601.

Dither (Oscillation)

In digital television, the original analog images are converted into digits: a continuous range of luminance and chrominance values is translated into a set of digits. While some analog values will correspond exactly to those digits, others will inevitably fall in between. Since there is always some degree of noise in the original analog signal, the digits may "dither" in the least significant bit (LSB) between the two nearest values. This has the advantage of letting the digital system reflect analog values that lie between LSBs, giving a very accurate digital representation of the analog world.

If the image is generated by a computer, or is the result of digital processing, the "dither" may not exist, which can produce contouring effects. Using Dynamic Rounding can add "dither" to the images for a more accurate result.
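The effect can be sketched numerically. The example below assumes uniform noise of up to half an LSB and a fixed random seed, both chosen only for illustration; it is not a model of Dynamic Rounding or of any particular converter.

```python
import math
import random


def quantize(level):
    """Round a continuous level to the nearest integer code (1 LSB = 1.0)."""
    return math.floor(level + 0.5)


def average_code(level, samples, noise_lsb, seed=0):
    """Average the codes obtained when noise is added before quantizing.

    noise_lsb is the peak amplitude of the assumed uniform noise, in LSBs.
    """
    rng = random.Random(seed)
    total = 0
    for _ in range(samples):
        noisy = level + rng.uniform(-noise_lsb, noise_lsb)
        total += quantize(noisy)
    return total / samples


true_level = 100.3  # an analog value that falls between two codes
no_dither = average_code(true_level, 10_000, noise_lsb=0.0)    # always 100
with_dither = average_code(true_level, 10_000, noise_lsb=0.5)  # near 100.3
```

Without noise every sample lands on the same code, so the fraction of an LSB is lost. With noise the codes toggle between 100 and 101, and their average approaches the original analog value.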

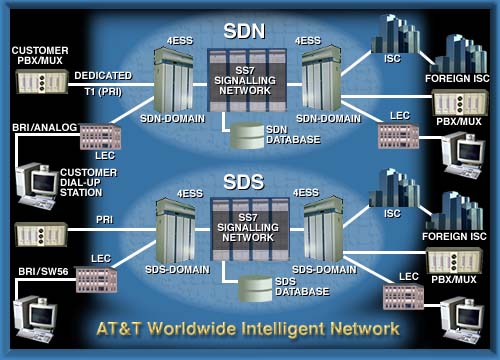

Digital Transmission

In the future, TV broadcasts will bring digital pictures and sound into our homes. With digital compression, several TV channels can be transmitted in the bandwidth of one analog channel, making it possible to receive more channels with greater clarity of image and sound. HDTV is transmitted digitally using MPEG-2 compression.

Cable companies and satellite broadcasters can use the technology to offer more channels, but there is a price to pay: the greater the number of channels, the higher the compression and the lower the image quality. As a general rule, four digital channels can be transmitted in the bandwidth of one analog channel. Services such as video on demand (VOD) can use higher compression so that any selected movie is available within a specified time interval, but with a quality no higher than current VHS.

HDTV (High Definition Television)

HDTV is a television format characterized by a new screen with a 16:9 aspect ratio (the current one is 4:3) and the ability to reproduce much greater detail (5 to 6 times more) than existing broadcast systems. HDTV should not be confused with widescreen variants of PAL (PALplus), NTSC, or SECAM, in which the shape of the screen changes but the quality improvement is small compared with HDTV.

There is no agreement on a global HDTV standard. Europe has chosen the 1250/50 system for its simple relationship to 625/50, while the U.S. has adopted 1050/59.94 for its relationship to 525/59.94. The only consensus so far is that transmission, both for links and for distribution to viewers' homes, will be digital and compressed using MPEG-2. The United States has chosen the advanced television system developed by the Grand Alliance.

ITU-R has two production standard formats: 1125/60 and 1250/50.

ITU-R 601

This standard defines the encoding parameters of digital television for studios. It is the international standard for digital component video for both 525-line and 625-line systems and is derived from SMPTE RP125 and EBU Tech 3246-E. ITU-R 601 applies to both color difference signals (Y, R-Y, B-Y) and RGB video, and defines the sampling systems, the RGB/Y, R-Y, B-Y matrix values, and the filter characteristics.

ITU-R 601 usually refers to color difference component digital video (rather than RGB), for which it defines 4:2:2 sampling at 13.5 MHz with 720 luminance samples per active line and digitizing with 8 or 10 bits.
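The resulting data rate follows from simple arithmetic. The sketch below assumes the usual reading of 4:2:2, in which each of the two color difference signals is sampled at half the luminance rate; the function names are illustrative, and the figures are gross rates that include blanking.

```python
LUMA_RATE_HZ = 13_500_000  # luminance sampling frequency from the text


def component_sample_rate(luma_rate_hz=LUMA_RATE_HZ):
    """Total samples per second for Y, Cr and Cb with 4:2:2 sampling."""
    # Assumption: each color difference signal runs at half the luma rate.
    chroma_rate_hz = luma_rate_hz // 2
    return luma_rate_hz + 2 * chroma_rate_hz


def bit_rate(bits_per_sample, luma_rate_hz=LUMA_RATE_HZ):
    """Gross bit rate in bits per second, blanking included."""
    return component_sample_rate(luma_rate_hz) * bits_per_sample
```

Under these assumptions the signal carries 27 million samples per second, which gives 216 Mbit/s at 8 bits and 270 Mbit/s at 10 bits.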

It allows a small reserve below black, which is set at level 16, and above white, which is set at level 235, to minimize clipping of noise and overshoots. Digitizing with 8 bits gives about 16 million possible colors: 2^8 each for Y (luminance), Cr and Cb (the digitized color difference signals) = 2^24 = 16,777,216 possible combinations.
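Both figures can be checked in a few lines. The helper names below are hypothetical, and the mapping covers luminance only, using the black and white levels given in the text.

```python
BLACK_LEVEL = 16   # 8-bit code for black, from the text
WHITE_LEVEL = 235  # 8-bit code for white, from the text


def color_combinations(bits=8, components=3):
    """Number of distinct code combinations for Y, Cr and Cb."""
    return (2 ** bits) ** components


def luma_code(level):
    """Map normalized luminance (0.0 = black, 1.0 = white) to an 8-bit code.

    Illustrative only: the input is not clamped, so values outside 0..1
    land in the reserve below 16 or above 235.
    """
    return round(BLACK_LEVEL + level * (WHITE_LEVEL - BLACK_LEVEL))
```

Black to white spans 219 steps, leaving codes below 16 and above 235 free to absorb excursions of the signal.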

The sampling frequency of 13.5 MHz was chosen to provide a politically acceptable common sampling standard for the 525/60 and 625/50 systems. It is a multiple of 2.25 MHz, the lowest common frequency that provides a static sampling pattern for both.
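A short check makes the "static sampling pattern" concrete: the frequency must give a whole number of samples per line in both systems. The line rates used below are not stated in the text; they are the commonly quoted values for the two systems and are an assumption of this sketch.

```python
from fractions import Fraction

SAMPLING_HZ = 13_500_000
COMMON_HZ = 2_250_000

# Assumed line rates (not given in the text), kept as exact fractions.
LINE_RATE_625 = Fraction(15_625)          # 625 lines x 25 frames/s
LINE_RATE_525 = Fraction(4_500_000, 286)  # about 15,734.27 lines/s


def samples_per_line(rate_hz, line_rate_hz):
    """Number of sample periods in one full line, as an exact fraction."""
    return Fraction(rate_hz) / line_rate_hz


def is_static_pattern(rate_hz):
    """True if the rate gives a whole number of samples per line in both systems."""
    return all(
        samples_per_line(rate_hz, line_rate).denominator == 1
        for line_rate in (LINE_RATE_625, LINE_RATE_525)
    )
```

With these line rates, 2.25 MHz gives 144 and 143 samples per line, and 13.5 MHz, six times higher, gives 864 and 858.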

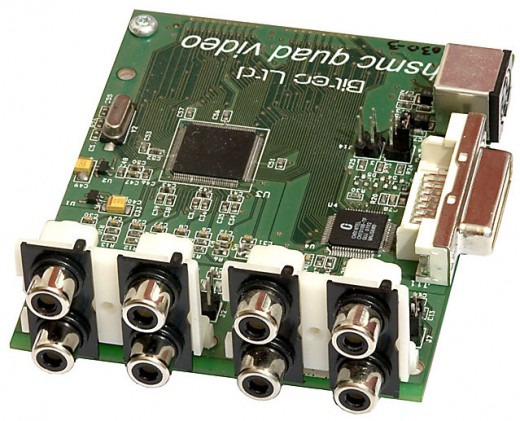

ITU-R 656

Interfaces for digital component video signals in 525-line and 625-line television systems. It sets the international standard for interconnecting digital television equipment operating according to the 4:2:2 standard defined in ITU-R 601, and is derived from SMPTE RP125 and EBU Tech 3246-E. It defines the blanking, the embedded sync words, the video multiplexing formats used by the serial and parallel interfaces, the electrical characteristics of the interface, and the mechanical details of the connectors.

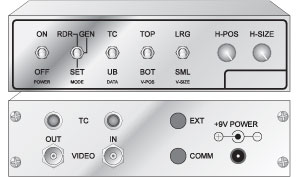

VITC

Vertical Interval Timecode. Digital timecode information inserted into the vertical blanking interval of a TV signal. The video heads can read it from tape whenever pictures are being displayed, even while jogging or in freeze frame, but not during rewind or fast forward. It is an effective complement to LTC, ensuring that the timecode can be read at any time.
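For readers unfamiliar with timecode values, the sketch below converts between a frame count and the familiar hours:minutes:seconds:frames notation. It assumes 25 frames per second with no drop-frame compensation, and it says nothing about how VITC is actually encoded on the video lines.

```python
def frames_to_timecode(frame_count, fps=25):
    """Format a frame count as HH:MM:SS:FF (assumes non-drop-frame)."""
    frames = frame_count % fps
    total_seconds = frame_count // fps
    seconds = total_seconds % 60
    minutes = (total_seconds // 60) % 60
    hours = (total_seconds // 3600) % 24  # timecode wraps after 24 hours
    return f"{hours:02d}:{minutes:02d}:{seconds:02d}:{frames:02d}"


def timecode_to_frames(timecode, fps=25):
    """Convert HH:MM:SS:FF back to a frame count."""
    hours, minutes, seconds, frames = (int(part) for part in timecode.split(":"))
    return ((hours * 60 + minutes) * 60 + seconds) * fps + frames
```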

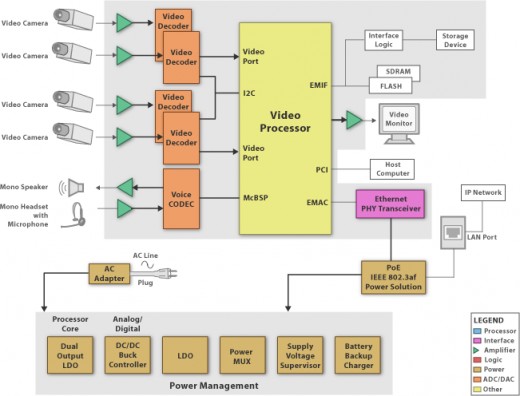

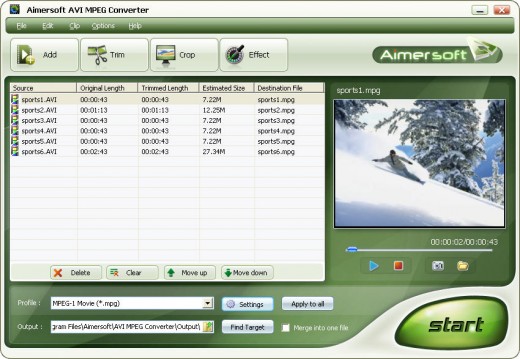

MPEG

Moving Picture Experts Group (ISO/CCITT). MPEG is concerned with defining standards for the data compression of moving images. Its work continues that of JPEG, adding inter-field compression, the extra compression potentially available from the similarities between successive frames of moving images. Four MPEG standards were originally planned, but the inclusion of HDTV in MPEG-2 has made MPEG-3 redundant. MPEG-4 is used for various applications, but the main interest of the television industry is in MPEG-1 and MPEG-2.

MPEG-1

It is designed to operate at 1.2 Mbits/sec, the data rate of CD-ROM, so that video can be played from CD players. However, the quality is not sufficient for broadcast.

MPEG-2

It is designed to cover a whole range of needs, from "VHS quality" to HDTV, using different algorithm "profiles" and image resolution "levels". With data rates between 1.2 and 15 Mbits/sec, there is great interest in using MPEG-2 for the digital transmission of television signals, including HDTV, the application for which the system was conceived. Encoding the video is very complex, particularly because the decoder at the receiving end must be as simple, and therefore as cheap, as possible.

MPEG can provide better images than JPEG compression at high compression ratios, but given the complexity of decoding, and especially of encoding, and the 12-frame groups of pictures (GOP), it is not an ideal compression system for editing. If P or B frames are used, even a simple cut requires the complex MPEG encoding to be applied again.
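The editing problem can be pictured with a toy model of a 12-frame GOP. The pattern below, with an anchor frame every third position, is an assumed layout chosen for illustration; real encoders may arrange frames differently, and the helper names are hypothetical.

```python
def gop_pattern(length=12, anchor_spacing=3):
    """Frame types for one GOP in display order.

    Assumption: the first frame is an I frame, every anchor_spacing-th
    frame after it is a P frame, and the rest are B frames.
    """
    types = []
    for index in range(length):
        if index == 0:
            types.append("I")
        elif index % anchor_spacing == 0:
            types.append("P")
        else:
            types.append("B")
    return "".join(types)


def cut_needs_reencode(frame_index, length=12, anchor_spacing=3):
    """True if the frame depends on other frames (P or B)."""
    pattern = gop_pattern(length, anchor_spacing)
    return pattern[frame_index % length] != "I"
```

In this model only one frame in twelve stands on its own, so most cut points fall on a frame that cannot be decoded without its neighbors.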

Of the five profiles and four levels, which give a set of 20 possible combinations, 11 have already been implemented. The variations defined are so wide that it would be impractical to build a universal coder/decoder. Currently the focus is on the "main profile" at "main level", sometimes written MP@ML, which covers broadcast television formats up to 720 pixels x 576 lines at 30 frames/sec. These figures are maxima, so the combination also includes 720 x 486 at 30 frames/sec and 720 x 576 at 25 frames/sec. Since the coding is intended for transmission, 4:2:0 sampling is used because it is more economical.
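A quick calculation shows why the two broadcast formats fit under the quoted ceiling. The sketch simply multiplies the figures given in the text; the function name is illustrative.

```python
def pixel_rate(width, height, frames_per_second):
    """Luminance pixels per second for a given picture format."""
    return width * height * frames_per_second


# Upper bound quoted for main profile at main level.
mp_ml_limit = pixel_rate(720, 576, 30)

# The two broadcast formats named in the text.
format_525 = pixel_rate(720, 486, 30)
format_625 = pixel_rate(720, 576, 25)

# Five profiles by four levels.
combinations = 5 * 4
```

Both formats come to roughly 10.4 to 10.5 million pixels per second, below the 12.4 million implied by the maximum figures.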

A recent addition to MPEG-2 is the studio version, designed for studio work and using 4:2:2 sampling. The studio profile is called 422P@ML. It is intended to improve image quality by using higher data rates. The first applications appear to be in electronic news gathering (ENG) and some video servers.
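The difference between the two sampling schemes can be counted per frame. The sketch assumes the usual interpretation: in 4:2:2 each color difference signal has half as many samples as luminance, and in 4:2:0 a quarter as many. The helper name is hypothetical.

```python
# Assumed ratio of luminance samples to samples of ONE color difference signal.
CHROMA_DIVISOR = {"4:2:2": 2, "4:2:0": 4}


def samples_per_frame(width, height, scheme):
    """Total Y, Cr and Cb samples in one frame before compression."""
    luma = width * height
    chroma = luma // CHROMA_DIVISOR[scheme]
    return luma + 2 * chroma


studio = samples_per_frame(720, 576, "4:2:2")        # 829,440 samples
transmission = samples_per_frame(720, 576, "4:2:0")  # 622,080 samples
```

Under these assumptions 4:2:0 hands the encoder a quarter fewer samples than 4:2:2, which is the economy that suits transmission, while the studio profile keeps the extra color detail at the cost of a higher data rate.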