Artificial Intelligence: The Singularity Is Quickly Approaching

Introduction

From 2001: A Space Odyssey to The Matrix, it is clear that artificial intelligence has significantly shaped pop culture and the imagination of the scientific community. But Artificial Intelligence (AI) is not just a thing of fiction; it has become a reality. Technology has come a long way since 1956, when the term “Artificial Intelligence” was first coined. From its meager beginnings, AI has progressed quickly in recent years and is rapidly approaching a point of seemingly unlimited possibilities.

Artificial Intelligence is defined as a branch of computer science dealing with the simulation of intelligent behavior in computers, or as the capability of a machine to imitate intelligent human behavior (Merriam-Webster Dictionary, 2011). In other words, AI is any program or invention built with computers to perform tasks that would otherwise require some aspect of human intellect. Some examples you may be familiar with are graphical user interfaces, electronic medical records and video games. There is much debate over the limitations of artificial intelligence. Many researchers and scientists feel this technology is close to reaching its limits and will never be able to mimic the human mind. However, technology is growing at an exponential rate, and AI development is still in its infancy. The sources reviewed in this paper suggest that AI will eventually parallel and even surpass human intelligence. This paper discusses the components that may allow this technology to go above and beyond our expectations.

Body

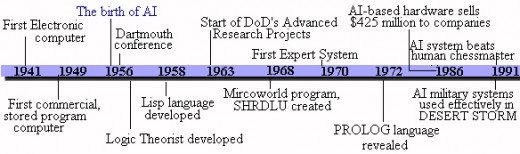

To understand the capabilities of artificial intelligence, its history and development must be considered. In 1941, the first electronic computer was invented, which revolutionized every aspect of information storage and processing. Without the computer, artificial intelligence would still be a thing of imagination. In the early 1950s, the American mathematician Norbert Wiener influenced the early development of artificial intelligence with his “Feedback Theory” (The history of AI, 2001, ThinkQuest Library). This theory captures the basic idea of machine intelligence: a system observes the result of its own actions and adjusts accordingly. An example is the thermostat, which regulates temperature by measuring the actual temperature of the house, comparing it to the desired temperature, and responding by turning the heat up or down.

Considered by many to be the first AI program, the Logic Theorist was created in 1955. This program was the first to mimic the problem-solving skills of a human being. In 1957, the first version of a new program, the General Problem Solver (GPS), was tested. The GPS was an extension of Wiener's feedback principle and was capable of solving common-sense problems. In 1958, John McCarthy, a computer and cognitive scientist, announced his new development: the LISP language, which is still used today. LISP stands for LISt Processing, and it was adopted as the language of choice among most AI developers.

The 1960s brought many programs that could solve spatial, logic and algebra problems and even understand simple English sentences. These developments helped improve programs in language comprehension and logic. Expert systems were developed in the 1970s. These applications predicted the probability of a solution under specific conditions. Some examples were forecasting the stock market and helping miners find promising mineral locations. More advanced expert systems were developed in the 1980s. Machine vision was also developed during this decade. These systems could differentiate between the shapes of objects and were used with cameras and computers on assembly lines. The 1990s brought AI-based technologies such as missile systems and heads-up displays. Today, you can see artificial intelligence advancements in computer applications such as voice and character recognition and in the use of fuzzy logic to steady camcorders. It is exciting to imagine what technology has in store for the world in the future.
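
To make Wiener's feedback idea from the history above more concrete, the thermostat can be sketched as a small loop: measure, compare with the target, act, and measure again. This is only an illustrative sketch. The function names, the `tolerance` band, and the `heat_gain` and `heat_loss` values are assumptions made up for this example and do not come from any of the cited sources.

```python
def thermostat_step(actual_temp, desired_temp, tolerance=0.5):
    """Compare the measured temperature with the target and choose an action."""
    error = desired_temp - actual_temp
    if error > tolerance:
        return "heat_on"    # house is too cold
    if error < -tolerance:
        return "heat_off"   # house is too warm
    return "hold"           # close enough, keep doing what we were doing


def simulate(start_temp, desired_temp, steps=10, heat_gain=1.0, heat_loss=0.5):
    """Run the feedback loop on a toy house model (hypothetical numbers)."""
    temp = start_temp
    heating = False
    history = []
    for _ in range(steps):
        action = thermostat_step(temp, desired_temp)
        if action == "heat_on":
            heating = True
        elif action == "heat_off":
            heating = False
        # The house warms while the heater runs and cools otherwise.
        temp += heat_gain if heating else -heat_loss
        history.append((temp, action))
    return history


if __name__ == "__main__":
    for temp, action in simulate(start_temp=18.0, desired_temp=21.0):
        print(f"{temp:5.1f}  {action}")
```

In this toy model the temperature climbs from 18 toward the 21 degree target and then stays within about a degree of it, which is the self-correcting behavior the feedback principle describes.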

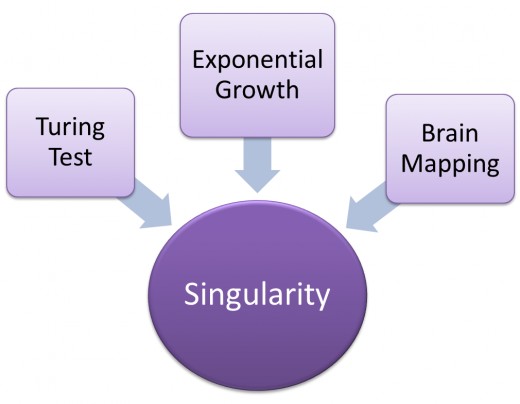

The idea that technology will soon equal or surpass human intelligence is a fairly recent concept. One of the leading figures bringing this new awareness to light is the futurist and inventor Ray Kurzweil. His writings explain, in great depth, how we may achieve this major advancement. Kurzweil describes this outstanding accomplishment as the “Singularity”. He defines the Singularity as “… a future period during which the pace of technological change will be so fast and far-reaching that human existence on this planet will be irreversibly altered” (Kurzweil, 2006). This paper argues that the Singularity is going to occur, and that three developments are likely to precede it: the passing of the Turing Test, continued exponential growth, and the reverse engineering of the brain.

In order to achieve the Singularity, there are a few components which must be considered. First and foremost, technology must meet certain criteria. One of the most important conditions to be measured is machine intelligence. If a machine can imitate human intelligence, then the machine itself must be intelligent. Alan Turing, a British mathematician best known for cracking the Nazi codes during World War II, was the man who introduced this simple proposition.

Turing proposed a test for machine intelligence in 1950. In this test, a human interrogator sits in a room at a teletype or computer terminal. A computer and a human being are hidden from the interrogator. The interrogator questions both and tries to determine which is the human and which is the computer. If the computer can fool the interrogator, it is deemed intelligent. This experiment came to be known as “The Turing Test”, or “The Imitation Game”. “The Turing Test is sometimes used more generally to refer to some kinds of behavioral tests for the presence of mind, thought, or intelligence in putatively minded entities” (Oppy & Dowe, 2011). In 1991, a man from New Jersey named Hugh Loebner established the Loebner Prize, which would award $100,000 to the first computer that could pass the Turing Test. To this day, no program has won that $100,000 prize.

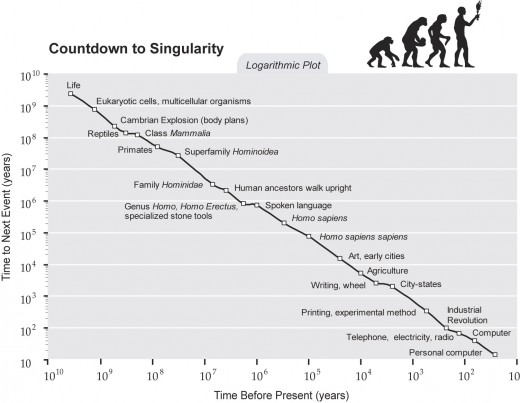

Ray Kurzweil suggests the Singularity is inevitable. He attributes this unavoidable achievement to “Exponential Growth”. Exponential growth starts out slowly and virtually unnoticeably, but past the knee of the curve it turns explosive and profoundly transformative. Kurzweil states that we are doubling the paradigm shift rate for technological innovation every decade. In other words, the twentieth century was gradually speeding up to today's rate of progress; its achievements, therefore, were equivalent to only about 20 years of progress at today's rate. We'll make another “20 years” of progress in just 14 years, and then do the same again in only seven years. To express this another way, we won't experience 100 years of technological advance in the twenty-first century, but the equivalent of 20,000 years of progress, or about 1,000 times more than was achieved in the twentieth century. Kurzweil presents many charts of exponential growth, ranging from Mass Use of Inventions to Exponential Growth of Computing, to demonstrate the coming of the Singularity. He calls this pattern the Law of Accelerating Returns: the rate of progress of an evolutionary process increases exponentially over time (Kurzweil, 2001).
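
The arithmetic behind these figures can be checked with a very simple model. The sketch below assumes that the rate of progress is constant within each decade and doubles from one decade to the next, with the 1990s taken as “today's rate” of one year of progress per calendar year. This decade-by-decade model is an assumption made here for illustration and is not necessarily the calculation Kurzweil himself performed. The function name `equivalent_years` is likewise made up for this example.

```python
def equivalent_years(decade_rates, years_per_decade=10):
    """Total progress, measured in years at today's rate, over several decades."""
    return sum(rate * years_per_decade for rate in decade_rates)


# Twentieth century: the 1990s run at rate 1, the 1980s at 1/2, ... the 1900s at 1/512.
twentieth_century = [2.0 ** -k for k in range(10)]

# Twenty-first century: the 2000s run at rate 2, the 2010s at 4, ... the 2090s at 1024.
twenty_first_century = [2.0 ** k for k in range(1, 11)]

past = equivalent_years(twentieth_century)
future = equivalent_years(twenty_first_century)

print(f"20th century: about {past:.2f} years of progress at today's rate")
print(f"21st century: about {future:.0f} years of progress at today's rate")
print(f"ratio: {future / past:.0f}")
```

Under these assumptions the twentieth century comes to just under 20 equivalent years and the twenty-first to 20,460, a ratio of 1,024. That is consistent with the figures of roughly 20 years, 20,000 years and “about 1,000 times” quoted above.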

Reverse engineering of the human brain may help create a platform for advances in Artificial Intelligence, or even facilitate the Singularity. The idea is that if the brain is computable, then it can be imitated by a computer. In “Is the Brain a Digital Computer?”, John R. Searle further explores this notion. He states:

Granted that there is more to the mind than the syntactical operations of the digital computer; … it might be the case that mental states are at least computational and mental processes are computational processes operating over the formal structure of these mental states (para. 6).

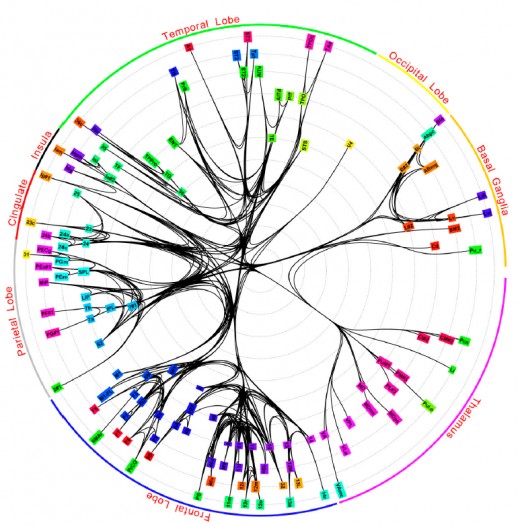

If scientists are able to map the billions of neurons of the brain, we may be able to understand how these neurons give rise to memory, personality and intelligence. New techniques for investigating the connectivity of the human brain are currently being explored. The Human Connectome Project is just one program presently in the works. This project concentrates on higher-level, region-to-region connections (Trafton, 2010). The ultimate goal is to help scientists and researchers design the routing architecture for a network of cognitive computing. Most machine programs feature one processor executing a series of instructions in consecutive order, an architecture known as “serial processing”. The brain, on the other hand, uses “parallel processing”. Parallel processing is an approach in which a problem is broken up into many pieces, each tackled separately by its own processor, after which the results are combined to get a single result. In theory, engineers could use parallel processing for machine programs. This may be the next step towards the Singularity. If we are able to complete the reverse engineering of the brain, it is quite conceivable that we will be able to translate these neural connections into a computational algorithm that will equal or even surpass human intelligence.
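
The difference between serial and parallel processing described above can be sketched in a few lines. The example below is a minimal illustration, not a model of the brain: it splits a list of numbers into pieces, hands each piece to its own worker, and combines the partial results. All function names are made up for this example. It uses Python's standard `concurrent.futures.ThreadPoolExecutor` to keep the sketch short. In the standard Python interpreter, threads generally do not run this kind of arithmetic on several processors at once, so the sketch shows the split, work and combine structure rather than a real speed-up.

```python
from concurrent.futures import ThreadPoolExecutor


def split_into_chunks(items, n_chunks):
    """Break the problem into roughly equal pieces."""
    size = max(1, -(-len(items) // n_chunks))  # ceiling division
    return [items[i:i + size] for i in range(0, len(items), size)]


def sum_of_squares(chunk):
    """The work one worker does on its own piece."""
    return sum(x * x for x in chunk)


def serial_sum_of_squares(items):
    """Serial processing: one worker handles everything in order."""
    return sum_of_squares(items)


def parallel_sum_of_squares(items, n_workers=4):
    """Parallel-style processing: split, work on each piece, then combine."""
    chunks = split_into_chunks(items, n_workers)
    with ThreadPoolExecutor(max_workers=n_workers) as pool:
        partial_results = list(pool.map(sum_of_squares, chunks))
    return sum(partial_results)  # combine the pieces into a single result


if __name__ == "__main__":
    data = list(range(1000))
    print(serial_sum_of_squares(data))
    print(parallel_sum_of_squares(data))
```

Both functions return the same answer. The only difference is whether the work is done as one long sequence or as several pieces whose results are merged at the end.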

Conclusion

Today, we have seen many advances in Artificial Intelligence, which bring us closer to the possibility of the Singularity. In May 1997, Deep Blue, a chess-playing computer, won a six-game match against world champion Garry Kasparov. This was groundbreaking, as it showed the world that Artificial Intelligence was not science fiction but a real possibility. There was much controversy, however, as the computer's human opponent made accusations of cheating. Of course, this left people wondering, “Is IBM trying to pull a fast one on us?” The credibility of the program was questioned. Very recently an AI program, IBM's Watson, defeated human opponents on the popular trivia game show Jeopardy!. Watson defeated not one but two competitors, leaving the world baffled and bewildered.

Can a computer be as smart as a human? Is the Singularity close upon us? The answer to these questions is a resounding yes. IBM's Watson may very well be the closest machine intelligence yet to passing the Turing Test, and it does not seem too far from achieving this goal. Exponential growth is evident in many fields of study, computing among them. It underlies the Law of Accelerating Returns, which predicts the equivalent of 20,000 years of progress in the twenty-first century. Reverse engineering of the brain may also play a major role in the birth of the Singularity. If scientists and researchers are able to complete the mapping of the brain's neural network, algorithms may be used in computing to mimic human intelligence.

It is an exciting time for technological advances. These three components may be exactly what is necessary to accomplish the Singularity. Are you ready for this?

References

Angelica, A. D. (2010, July 28). IBM scientists create most comprehensive map of the brain’s network. Retrieved February 14, 2011, from Kurzweil accelerating intelligence website

Copeland, J. (n.d.). Photo gallery. In The Turing archive for the history of computing. Retrieved February 22, 2011, from http://www.alanturing.net/turing_archive/graphics/photos%20of%20Turing/pages/alan7_psd.htm

Kurzweil, R. (2001, March 7). The law of accelerating returns. Retrieved February 24, 2011, from Kurzweil accelerating intelligence website

Oppy, G., & Dowe, D. (2011). The Turing test. Retrieved February 22, 2011, from Stanford Encyclopedia of Philosophy website: http://plato.stanford.edu/entries/turing-test/

Kurzweil, R. (2006). Reinventing humanity: The future of machine-human intelligence. The Futurist, 40(2), 39-40, 42-46. Retrieved February 22, 2011, from ABI/INFORM Global. (Document ID: 986756301).

Robot of the week. (n.d.). Retrieved February 19, 2011, from Robokingdom LLC website: http://www.roboticmagazine.com/robot_of_week.php

Searle, J. R. (n.d.). Strong AI, weak AI and cognitivism. In Is the brain a digital computer? Retrieved February 27, 2011, from http://users.ecs.soton.ac.uk/harnad/Papers/Py104/searle.comp.html

The history of artificial intelligence. (n.d.). Retrieved February 19, 2011, from ThinkQuest: Library database.

Trafton, A. (2010, January 28). Mapping the brain. Retrieved February 27, 2011, from Massachusetts Institute of Technology website: http://web.mit.edu/newsoffice/2010/brain-mapping.html