Planning a System Migration

A migration involves a tremendous amount of detail across many different tiers of a system. Because of that complexity, the greatest amount of effort goes into the planning stage; after that, it’s largely a matter of implementation. Along with the logistics of the hardware, the operating systems involved, and the applications affected, one has to consider training the users, involving them in the planning from the beginning, and convincing management that this isn’t just an IT (information technology) daydream but a real, justifiable expense.

A migration can be a conversion from mainframe to network (or the addition of a network alongside the mainframe), a switch of network operating systems (NOSs), a switch of protocols (SNA to TCP/IP, for instance), a conversion from obsolete repositories to relational databases, a conversion of smokestack systems into a super-package like PeopleSoft, a consolidation of individually or in-house developed applications into a global enterprise system, or any combination thereof. Management may get interested in a pitch from SAP, but it will be up to IT to determine the impact on the other tiers of the system, such as legacy data, outmoded hardware, and the process of migration itself.

When developing a plan, IT must consider whether to do conversions “manually”, code a program to do conversions, or use middleware (software designed to “bridge” two applications). Should it be done by the in-house staff, or should “experts” be contracted? So many questions. It’s a daunting task.

The cost of a migration can vary enough to make your head spin. If at all possible, do it in stages, and change the most-used applications last.

The first step is to determine which legacy applications should be the last to move over (if they move at all). In most organizations, this would be the mainframe data, since the operating system and data storage would need full revamping. So sales history and methods, human resources, accounting, and (at a college) student records would be left alone in the beginning. This also makes it easier for users to adapt, since it’s difficult to interrupt these processes.
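The staging rule can be sketched as a simple ordering. This is only an illustration under stated assumptions: the inventory, the `daily_users` estimates, and the `on_mainframe` flag are all hypothetical, and a real plan would weigh far more factors than these two.

```python
from dataclasses import dataclass


@dataclass
class App:
    name: str
    daily_users: int    # hypothetical usage estimate
    on_mainframe: bool  # legacy mainframe applications move last


def migration_order(apps):
    """Order apps for staging: non-mainframe first, least-used first within each group."""
    # False sorts before True, so mainframe applications land at the end.
    return sorted(apps, key=lambda a: (a.on_mainframe, a.daily_users))


# Made-up inventory, for illustration only.
inventory = [
    App("accounting", 120, True),
    App("departmental file shares", 40, False),
    App("human resources", 80, True),
    App("email", 300, False),
]

for stage, app in enumerate(migration_order(inventory), start=1):
    print(stage, app.name)
```

With this made-up inventory, the lightly used file shares move first and accounting, the busiest mainframe application, moves last.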

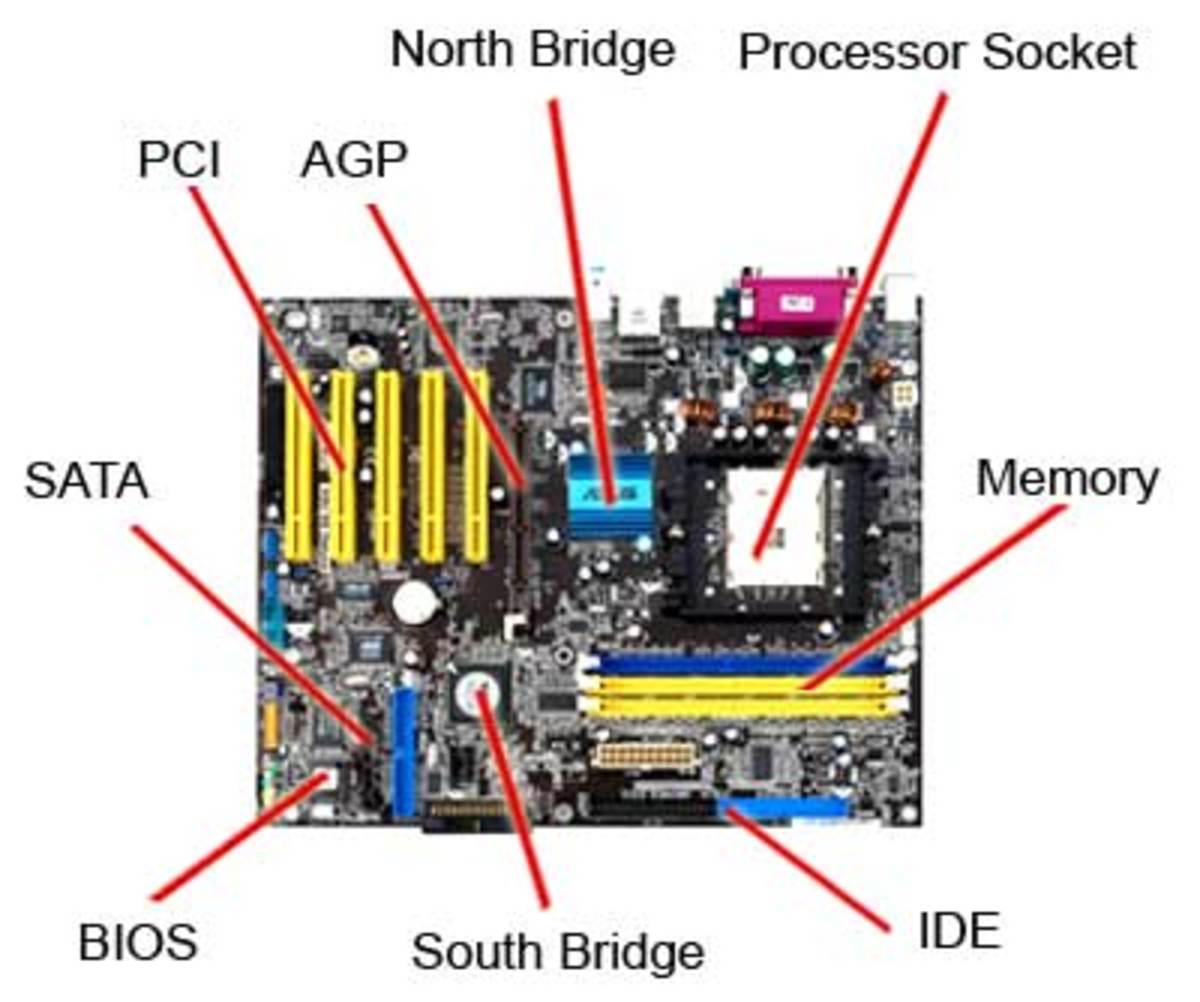

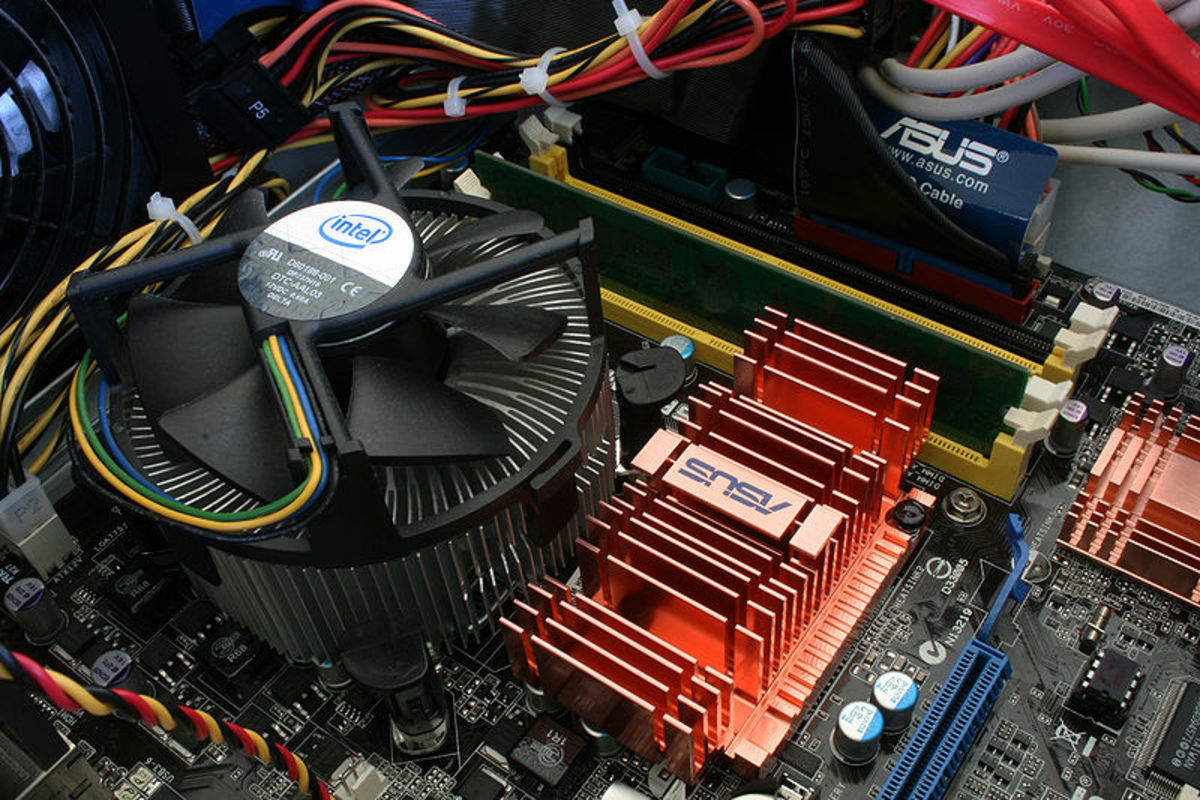

If the organization has a collection of networks, they should all be connected to a “master server” with a backup master mirroring it. Even a Novell network can be hooked into a Windows (NT, 2000, etc.) master, as can a Macintosh network.

Specialty applications should have a separate server. Databases can be kept on data servers, and Unix boxes can be connected to them all. By interrelating the networks and mainframe, you have built a Super Network. From here, networks at other sites will have a central entry point.

Look for middleware that can make some of these connections, especially by ‘bridging’ one network to another. If middleware isn’t available, IT needs to determine whether in-house programmers can write the bridges or whether specialists should be contracted. If a bridge does not seem feasible, the alternative may be to replace those applications with something newer that is compatible with the enterprise system. A step like this is expensive and might be deferred to a separate stage at a later time.

Once the hardware is blocked out and the connections planned, data dictionaries of all related databases need to be created if they are not already in place. A data dictionary names all the fields of a database and the attributes of these fields. By comparing this information, IT can determine how and when to convert data.
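Comparing two data dictionaries can be sketched in a few lines. The example below assumes each dictionary is held as a mapping from field name to its attributes; the field names, types, and lengths are hypothetical, and a real dictionary would carry more attributes (keys, nullability, valid ranges).

```python
def compare_dictionaries(source, target):
    """Compare two data dictionaries given as {field_name: {attribute: value}}.

    Returns (fields missing from target, fields new in target,
    fields present in both whose attributes differ).
    """
    missing_in_target = sorted(set(source) - set(target))
    new_in_target = sorted(set(target) - set(source))
    changed = {}
    for field in sorted(set(source) & set(target)):
        attrs = sorted(set(source[field]) | set(target[field]))
        diffs = {
            attr: (source[field].get(attr), target[field].get(attr))
            for attr in attrs
            if source[field].get(attr) != target[field].get(attr)
        }
        if diffs:
            changed[field] = diffs
    return missing_in_target, new_in_target, changed


# Hypothetical legacy and relational dictionaries, for illustration only.
legacy = {
    "CUST_NO": {"type": "char", "length": 8},
    "CUST_NAME": {"type": "char", "length": 30},
    "BALANCE": {"type": "packed decimal", "length": 7},
}
relational = {
    "CUST_NO": {"type": "char", "length": 8},
    "CUST_NAME": {"type": "varchar", "length": 60},
    "EMAIL": {"type": "varchar", "length": 100},
}

missing, new, changed = compare_dictionaries(legacy, relational)
print("No target field for:", missing)
print("No source data for:", new)
print("Needs conversion:", changed)
```

Fields with no counterpart on the other side call for a decision (drop, default, or add a column), while fields whose attributes differ mark where conversion work is needed.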

Even in the “simplest” migration, such as mainframe to network, there will need to be data conversion, since the information is actually packaged differently. So the very first thing to address is legacy data. One can always install a software application, but one can never replace historical information. And back it up first!
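As a rough sketch of "back it up, then convert", the example below copies a legacy file, confirms the copy by checksum, and only then decodes a record. It rests on assumptions not stated above: that the mainframe data is EBCDIC text (decoded here with Python's `cp037` codec), that records are fixed-width, and that the layout in `LAYOUT` applies. The file name, layout, and sample record are hypothetical.

```python
import hashlib
import shutil
import tempfile
from pathlib import Path

# Hypothetical fixed-width layout: (field name, start offset, end offset).
LAYOUT = [("cust_no", 0, 8), ("cust_name", 8, 38)]


def checksum(path):
    """Return the SHA-256 hex digest of a file's contents."""
    return hashlib.sha256(Path(path).read_bytes()).hexdigest()


def back_up(source, backup_dir):
    """Copy source into backup_dir and verify the copy before returning its path."""
    backup_dir = Path(backup_dir)
    backup_dir.mkdir(parents=True, exist_ok=True)
    copy = backup_dir / Path(source).name
    shutil.copy2(source, copy)
    if checksum(source) != checksum(copy):
        raise IOError(f"backup of {source} does not match the original")
    return copy


def convert_record(raw, encoding="cp037"):
    """Decode one record (assumed EBCDIC) and split it by LAYOUT."""
    text = raw.decode(encoding)  # single-byte codec, so offsets match bytes
    return {name: text[start:end].strip() for name, start, end in LAYOUT}


with tempfile.TemporaryDirectory() as tmp:
    # Build a made-up one-record legacy file to stand in for real data.
    legacy_file = Path(tmp) / "customers.dat"
    sample = "00001234" + "Jane Example".ljust(30)
    legacy_file.write_bytes(sample.encode("cp037"))

    backup = back_up(legacy_file, Path(tmp) / "backup")
    # Work from the verified copy; the original stays untouched.
    record = convert_record(backup.read_bytes())
    print(record)
```

Converting from the verified copy rather than the original keeps the historical data intact if the conversion logic turns out to be wrong.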